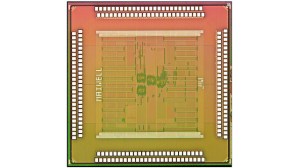

Thanks to constant improvements in cameras built into phones, many are finding it easier to carry just one versatile device instead of multiple gadgets that only handle one specific task. While smartphones can easily capture all the memories from our trips and then distribute them quickly across all of our social networks, they still can’t beat standalone cameras in image quality. The folks at MIT, however, are working to improve smartphone camera performance by developing a new chip that will help handle post-processing in a much more efficient way.

When a phone shoots in High Dynamic Range (HDR) mode, it snaps multiple photographs at different exposures in quick succession, and then merges them into a final photo with as close to a perfect exposure as possible. Unfortunately, the processing time can be a bit lengthy. But with this new chip that developers at MIT are working on, phones will be able to generate 10-megapixel HDR images in just a few milliseconds. In other words, they’ll be ready almost instantaneously.
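For readers curious about what merging exposures involves, here is a minimal sketch in Python. It is not the MIT chip's algorithm, which the article does not describe. It assumes a simple exposure-fusion approach in which each pixel is a weighted average across frames and pixels near mid-gray count the most. The function names and the `sigma` parameter are hypothetical, chosen only for illustration.

```python
import math

def well_exposedness(value, sigma=0.2):
    """Weight a pixel in [0, 1] by how close it is to mid-gray (0.5)."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))

def fuse_exposures(exposures, sigma=0.2):
    """Blend same-sized grayscale frames (rows of floats in [0, 1]).

    Assumption: every frame is already aligned, so pixel (y, x) shows
    the same scene point in each exposure.
    """
    height, width = len(exposures[0]), len(exposures[0][0])
    fused = []
    for y in range(height):
        row = []
        for x in range(width):
            samples = [frame[y][x] for frame in exposures]
            weights = [well_exposedness(s, sigma) for s in samples]
            total = sum(weights) or 1.0  # guard against all-zero weights
            row.append(sum(w * s for w, s in zip(weights, samples)) / total)
        fused.append(row)
    return fused

# A dark frame and a bright frame of the same two-pixel scene.
dark = [[0.0, 0.5]]
bright = [[0.5, 1.0]]
fused = fuse_exposures([dark, bright])
```

In this toy case each fused pixel lands close to whichever frame exposed it best, which is the basic idea behind an HDR merge. Doing this per pixel on a 10-megapixel image is the kind of workload dedicated hardware can speed up.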

Another problem the chip will help fix is poor low-light performance. It’s no secret that it’s nearly impossible to take a decent photo with a smartphone at night. The images tend to be either barely visible or over-exposed, pixelated, and just plain ol’ bad. This chip would allow the phone to take two versions of a low-light photo: one with the flash and one without. Then, it will combine the two into a single shot that should result in a more picture-perfect image.
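The article does not say how the two shots are combined, so the following Python sketch is only one plausible illustration. It assumes the natural lighting comes from the no-flash photo and the fine detail comes from the flash photo. It works on a single row of grayscale pixels and uses a plain box blur to separate lighting from detail. A real pipeline would likely use an edge-preserving filter instead. All names here are hypothetical.

```python
def box_blur(row, radius=1):
    """Average each pixel with its neighbours to estimate smooth lighting."""
    out = []
    for i in range(len(row)):
        lo, hi = max(0, i - radius), min(len(row), i + radius + 1)
        window = row[lo:hi]
        out.append(sum(window) / len(window))
    return out

def combine_flash_pair(no_flash, flash, radius=1, eps=1e-6):
    """Keep the no-flash lighting, borrow detail from the flash shot.

    Assumption: both rows are aligned and hold floats in [0, 1].
    """
    ambient_base = box_blur(no_flash, radius)  # mood of the scene
    flash_base = box_blur(flash, radius)       # flash lighting to discard
    combined = []
    for ambient, sharp, smooth in zip(ambient_base, flash, flash_base):
        detail = sharp / (smooth + eps)        # ratio near 1.0 where flat
        combined.append(min(1.0, ambient * detail))
    return combined

# A dim, featureless no-flash row and a flash row with one bright detail.
no_flash = [0.2, 0.2, 0.2]
flash = [0.4, 0.8, 0.4]
result = combine_flash_pair(no_flash, flash)
```

The output keeps the dim overall brightness of the no-flash row while the middle pixel stands out, as it did in the flash row.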

It’s interesting to note that the project is being funded by Foxconn, which is well known for making phones for Apple. The chip could be a big step for smartphone photography, and we’re really excited to eventually get hands-on time with it to see how much it improves the final product.
