Conventional video cameras record as many as a few hundred images per second, which are strung together to create a video. While cameras are getting faster, current options are limited by how quickly the onboard computer can make sense of all that data and process so many large files. Essentially, the camera sees the information, but the computer inside cannot process it fast enough.

The research group from NTU developed a camera that records those changes in light within nanoseconds rather than as traditional frames, allowing the system to respond to changes in lighting faster than a typical camera. Instead of taking a large number of photos per second to create a video feed, Celex doesn’t process an entire image; it reads only how each pixel has changed since its previous reading. Because the camera processes only these changes rather than whole images, it can operate dramatically faster.
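To illustrate the difference between frames and per-pixel changes, here is a minimal sketch that converts ordinary frames into change "events." It assumes the common event-camera convention of firing when a pixel's log brightness moves past a fixed threshold; the function name `frames_to_events` and the `threshold` value are hypothetical, and Celex's actual on-chip circuitry is not described in the article.

```python
import math
from typing import List, Tuple

# Hypothetical event format: (timestamp, x, y, polarity), where polarity is
# +1 when the pixel got brighter and -1 when it got darker.
Event = Tuple[float, int, int, int]


def frames_to_events(
    frames: List[List[List[float]]],
    timestamps: List[float],
    threshold: float = 0.2,
) -> List[Event]:
    """Emit an event whenever a pixel's log intensity changes by at least
    `threshold` since the last event at that same pixel (a simplification:
    one event per pixel per frame, even for large jumps)."""
    height, width = len(frames[0]), len(frames[0][0])
    eps = 1e-6  # avoids log(0) for completely dark pixels
    # Per-pixel reference level, initialized from the first frame.
    ref = [[math.log(frames[0][y][x] + eps) for x in range(width)] for y in range(height)]
    events: List[Event] = []
    for frame, t in zip(frames[1:], timestamps[1:]):
        for y in range(height):
            for x in range(width):
                current = math.log(frame[y][x] + eps)
                diff = current - ref[y][x]
                if abs(diff) >= threshold:
                    events.append((t, x, y, 1 if diff > 0 else -1))
                    ref[y][x] = current  # reset only where an event fired
    return events


# Usage: a 2x2 scene where only the bottom-right pixel brightens once.
frames = [
    [[10, 10], [10, 10]],
    [[10, 10], [10, 30]],
    [[10, 10], [10, 30]],
]
events = frames_to_events(frames, [0.0, 0.001, 0.002])
print(events)  # [(0.001, 1, 1, 1)]
```

Three frames of four pixels each carry twelve brightness values, but the change-based output is a single event, which is the kind of data reduction the paragraph above describes.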

The camera also uses a built-in computer to distinguish what is in the foreground, close to the camera, from what is in the background. This optical flow computation helps the system determine what is merely part of the passing scenery and what is actually moving on its own, potentially on a collision path.
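As a rough illustration of separating scenery motion from independent motion, the sketch below takes per-pixel optical flow vectors (assumed to be already computed), treats the median flow as the background's apparent motion, and flags pixels that deviate from it. This is a deliberately simplified heuristic that assumes most pixels are background and that the camera's own motion shifts the scene roughly uniformly; real systems typically model ego-motion geometrically, and the function `flag_independent_motion` and its `tolerance` are hypothetical, not Celex's method.

```python
from statistics import median
from typing import Dict, List, Tuple

Pixel = Tuple[int, int]       # (x, y)
Flow = Tuple[float, float]    # (dx, dy) in pixels per time step


def flag_independent_motion(flow: Dict[Pixel, Flow], tolerance: float = 1.0) -> List[Pixel]:
    """Return pixels whose flow differs from the dominant (background) motion.

    Assumption: the majority of pixels are background, so the median flow
    approximates the motion induced by the camera itself.
    """
    bg_dx = median(v[0] for v in flow.values())
    bg_dy = median(v[1] for v in flow.values())
    moving = []
    for (x, y), (dx, dy) in flow.items():
        # Residual motion left over after removing the background motion.
        residual = ((dx - bg_dx) ** 2 + (dy - bg_dy) ** 2) ** 0.5
        if residual > tolerance:
            moving.append((x, y))
    return sorted(moving)


# Usage: the scenery drifts left as the camera moves, while one object
# at pixel (1, 1) moves right on its own.
flow = {(x, y): (-2.0, 0.0) for x in range(3) for y in range(3)}
flow[(1, 1)] = (3.0, 0.5)
print(flag_independent_motion(flow))  # [(1, 1)]
```

Using the median rather than the mean keeps a single fast-moving object from dragging the background estimate toward itself.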

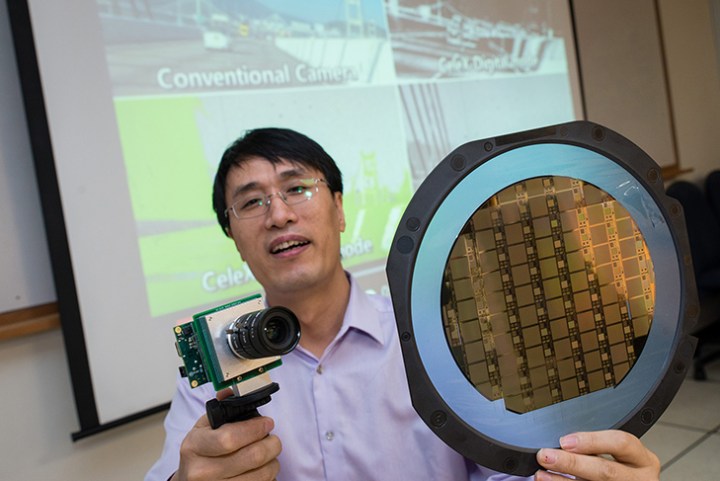

The research team, led by assistant professor Chen Shoushun, says the camera system also outperforms traditional options for driving at night and in bad weather because of the onboard circuit that processes all the data. “Our new camera can be a great safety tool for autonomous vehicles, since it can see very far ahead like optical cameras but without the time lag needed to analyze and process the video feed,” Chen said. “With its continuous tracking feature and instant analysis of a scene, it complements existing optical and laser cameras and can help self-driving vehicles and drones avoid unexpected collisions that usually happen within seconds.”

Of course, because the camera records only changes to keep file sizes small, the technology isn’t likely to wind up in consumer cameras. But its speed could improve safety in applications where the camera serves as a pair of eyes rather than an artistic tool, such as driverless cars and drones.

Work on Celex started in 2009, and the group has since launched a startup based on the technology. According to the researchers, the system, now in its final prototype stage, could hit the market before the end of 2017.
