The Star Wars prequels and original trilogy releases were some of the first big films to use 3D modeling to great effect for a wide audience. These types of films are still being made today, but the technology behind the designs has changed a lot in the past twenty years.

At MSI’s booth at Computex, we got a peek into just how far that process has come. In one of the most riveting demonstrations we’ve ever seen at the show, a former designer at ILM (Industrial Light & Magic) explained how much his workflow has changed over the years.

3D designs in a matter of minutes

The push for powerful mobile workstations became apparent across the various booths at Computex this year, even if the big players did not spend the lavish sums of previous years transforming their booths into futuristic fortresses. The reason for the push? Manufacturers had started to realize that graphic designers were using their powerful gaming laptops for design work. After some minor tweaking (primarily to the screen’s color accuracy), ‘Creator’ variants started to appear.

Colie Wertz gave an hour-long talk on how VR had allowed him to combine concept art with 3D modeling. The result was visionary designs of how objects might look in movies that were also accurate enough in scale to be used as plans by set-builders. Furthermore, whereas straight 2D concept designs would need to be erased and redrawn with each iteration, 3D imagery made adjustments much simpler.

Wertz’s resume includes designing (oh, you know) scenes from Captain America: Civil War and the Star Wars prequels, plus a bunch of other stuff from Rogue One and the Transformers movies. We shut up and listened.

Colie talked about how designing in VR had made it quick to create 3D objects like spaceships. These could then be brought into other scenes using a workflow that included lighting, material rendering, and paint-overs. Typically, Colie would get numbers for the size of the rooms that would be built on set. For instance, that could include a cockpit, bunks, and an engine bay that are each eight feet tall and twelve feet wide. Armed with that information, he could immediately create a perfectly scaled model.

He then took us through a live design process. First up, he took a photo out of MSI’s office window of a rather drab Taipei street scene. The only notable feature was a long, dirty elevated roadway running across the image. He used Photoshop to jazz everything up so that it looked more like a futuristic set out of Blade Runner. This was to be the background of the setting. If he needed a rendered background he’d use Maya, but for the sake of time he took a picture with his iPhone and painted over it in Photoshop.

He said he wanted to make a spaceship with VR, render it, and insert it into the scene. Which he did. Live in front of us, from scratch. It took him four-and-a-quarter minutes.

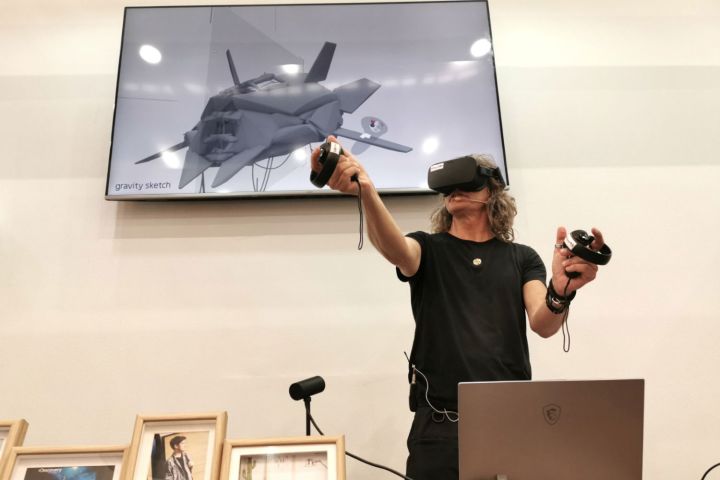

Colie used a basic Oculus Touch kit and did a very good impression of Tom Cruise in Minority Report with his pinch-and-zooming and finessing of objects in a 3D world. He remarked that the Touch was great as he didn’t require much space to create: he was neither stepping around a room nor in danger of punching something nearby. The ship he created with Gravity Sketch was hollow and symmetrical. That meant every outer layer and cable he created was automatically and quickly replicated. The object had gaps that would help with lighting, but at the same time, he had to make sure other seams were sealed.
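The symmetry behavior can be illustrated with a small sketch. This is not how Gravity Sketch works internally; it simply assumes a mirror plane at x = 0, and the names `mirror_across_x`, `with_symmetry`, and `stroke` are hypothetical, chosen only to show why drawing one side of a ship yields both.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def mirror_across_x(points: List[Point]) -> List[Point]:
    """Return the reflection of each point across the x = 0 plane."""
    return [(-x, y, z) for (x, y, z) in points]


def with_symmetry(points: List[Point]) -> List[Point]:
    """Return the original points followed by their mirrored copies.

    Points that lie on the mirror plane (x == 0) are not duplicated.
    """
    mirrored = [p for p in mirror_across_x(points) if p[0] != 0]
    return points + mirrored


# A hypothetical cable drawn on one side of the hull (assumed coordinates).
stroke = [(1.0, 0.0, 0.0), (1.5, 0.5, 2.0), (0.0, 1.0, 3.0)]
both_sides = with_symmetry(stroke)
```

Every point drawn off the center plane gets a twin on the opposite side, which is why a symmetrical ship takes roughly half the strokes.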

Once the ship was created and exported as a compatibility-friendly OBJ file, it was imported into KeyShot, where a camera was set up to match the 3D ship’s orientation to the MSI office view sketch. He also matched the direction of the light source. Colie says KeyShot is bulletproof for quick renders, and he further praised its “zillion different textures” that instantly transform the object. He used a ‘general anodized metal’ option. Even so, he closed all other programs before starting the render to lessen the possibility of crashes. He pointed out that KeyShot was a very CPU-intensive renderer and that he occasionally also used tools like Octane, which was more GPU-intensive. It took less than a minute to render the properly lit, metallic ship. He pointed out that if a whole background environment was needed, Maya would be used too.
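Part of what makes OBJ compatibility-friendly is that it is a plain-text format: each `v` line lists a vertex position and each `f` line lists a face by 1-based vertex index. The sketch below writes a single triangle in that form. The `to_obj` helper and the placeholder mesh are assumptions for illustration, not output from the demo.

```python
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]


def to_obj(vertices: List[Vertex], faces: List[Sequence[int]]) -> str:
    """Serialize a mesh to Wavefront OBJ text.

    `faces` holds 0-based vertex indices; OBJ itself is 1-based,
    so each index is shifted by one on output.
    """
    lines = ["# hypothetical placeholder mesh"]
    for x, y, z in vertices:
        lines.append(f"v {x} {y} {z}")
    for face in faces:
        lines.append("f " + " ".join(str(i + 1) for i in face))
    return "\n".join(lines) + "\n"


# One triangle standing in for a hull panel (assumed coordinates).
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
faces = [(0, 1, 2)]
obj_text = to_obj(vertices, faces)
```

Because the file is just text, most modeling and rendering tools can read it without a plugin.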

The render appeared as an image file with a transparent background that was then simply pasted onto the MSI street scene in Photoshop. It still stood out as an obvious Photoshop job, so he looked at the black levels of the background next to the ship and adjusted the spaceship’s blacks to match using the Photoshop Levels tool. Once that was complete, he used his Wacom tablet to paint over the ship with a few lines to create panels and some highlights, and created some haze around the engines with a simple Photoshop blur effect. The entire process took about 50 minutes and created concept art that had accurate lighting and accurate dimensions. Changes could be made easily if required, and the image file’s dimensions could be given to set-builders to create their stages.
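The black-level matching step can be approximated in a few lines. The sketch below is a simplified linear remap on grayscale values from 0 to 255, not Photoshop’s actual Levels implementation: it moves the ship’s darkest value to the darkest value sampled from the nearby background while leaving pure white untouched. The function name and the sample values are hypothetical.

```python
from typing import List


def match_black_level(ship: List[int], background_patch: List[int],
                      white: int = 255) -> List[int]:
    """Linearly remap `ship` so its darkest value equals the darkest
    value in `background_patch`, keeping `white` fixed."""
    ship_black = min(ship)
    target_black = min(background_patch)
    if ship_black >= white:
        # Nothing to stretch: the ship is already pure white.
        return list(ship)
    scale = (white - target_black) / (white - ship_black)
    remapped = (target_black + (v - ship_black) * scale for v in ship)
    # Clamp to the valid 8-bit range after rounding.
    return [max(0, min(255, round(v))) for v in remapped]


# Assumed sample values: a crisp render against a hazy street photo.
ship_pixels = [0, 64, 128, 255]
background_near_ship = [30, 45, 90]
matched = match_black_level(ship_pixels, background_near_ship)
```

Lifting the ship’s blacks to the background’s level is what stops a fresh render from looking pasted onto a hazy photo.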

Everything was done on an MSI P65 Creator, which had the Oculus connected plus the Wacom tablet. He said he could also connect his elaborate Wacom Cintiq 22HD graphics tablet if he had an extra USB port, and that a hub would let everything connect at once.

Colie finished by saying it was good that companies were realizing designers had been using gaming laptops for a long time. He expressed gratitude that manufacturers were finally recognizing the potential of the design market with machines like this.