The big problem is “code rot,” which sets in as standards change and people move to new hardware and software platforms. Compatibility issues arise and everything starts to slow down, because the old code just isn’t efficient enough to keep up. But having code improve itself seems like something only an AI-driven future can deliver … doesn’t it?

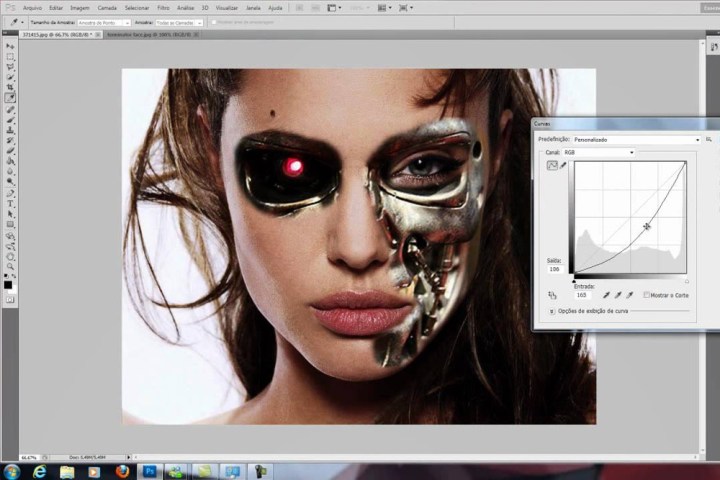

Apparently not, as Helium, a joint project between Adobe and MIT, has already delivered a strong proof of concept. Working with Adobe’s Photoshop image-editing tool, the Helium project analyzed the commands being sent by image filters and compared them to the end result. From there the software was able to run variants with certain commands removed, if they weren’t required to achieve the same visual effect.

That way the filter code could be optimized automatically to deliver the same result from a leaner set of commands. When those commands were also converted to run on GPU hardware, Helium was able to make the filters run as much as 75 percent faster than before.
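
To make the idea concrete, here is a minimal Python sketch of the general approach described above: drop one step at a time and keep the change only if the output stays the same. It is not Helium’s actual implementation. The toy filter steps (`copy_buffer`, `brighten`, `clamp`, `invert`), the `prune` helper and the sample images are all made up for illustration, and matching output on a few samples only shows the variants agree on those inputs.

```python
from typing import Callable, List, Sequence

Image = List[int]                  # flat list of 0-255 grayscale pixels (toy stand-in)
Step = Callable[[Image], Image]    # one "command" in a filter


def run(steps: Sequence[Step], image: Image) -> Image:
    """Apply every step in order and return the final image."""
    out = list(image)
    for step in steps:
        out = step(out)
    return out


def prune(steps: Sequence[Step], samples: Sequence[Image]) -> List[Step]:
    """Greedily drop steps whose removal leaves every sample's output unchanged."""
    expected = [run(steps, s) for s in samples]
    kept = list(steps)
    i = 0
    while i < len(kept):
        candidate = kept[:i] + kept[i + 1:]
        if all(run(candidate, s) == e for s, e in zip(samples, expected)):
            kept = candidate       # step i was not needed for these samples
        else:
            i += 1                 # step i matters, so keep it and move on
    return kept


# Hypothetical filter steps, invented for this example.
def copy_buffer(img: Image) -> Image:
    return list(img)


def brighten(img: Image) -> Image:
    return [min(255, p + 40) for p in img]


def clamp(img: Image) -> Image:
    return [max(0, min(255, p)) for p in img]


def invert(img: Image) -> Image:
    return [255 - p for p in img]


filter_steps = [copy_buffer, brighten, clamp, invert, copy_buffer]
samples = [[0, 10, 128, 250], [255, 200, 30, 5]]

slim = prune(filter_steps, samples)
print([step.__name__ for step in slim])
```

In this toy case the redundant copies and the clamp fall away because `brighten` already keeps pixels in range. A real tool would need far stronger evidence than a handful of sample images before treating two versions of a filter as equivalent.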

Although the researchers did admit that they were working with a best-case scenario for automated optimization, the project shows that certain code can be tested automatically to see whether it can be made to run faster. We imagine Photoshop could use further optimization, but ExtremeTech points out that this is mainly an MIT project, so future developments probably won’t improve the old image editor. It will be interesting to see what other software could be improved in this manner.

Do you use any older software regularly that you think could benefit from automatic optimizations?
