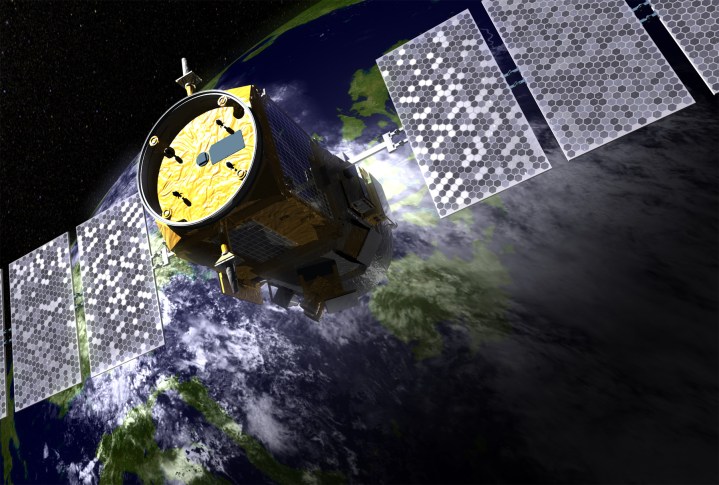

It sounds like the evil plan of a James Bond villain. An orbiting satellite, with the ever-so-slightly sinister name CALIPSO, is blasting lasers into the Earth’s oceans. If movies have taught us anything, it’s that what follows is a message to the world’s leaders, threatening some kind of catastrophe unless they pay an extortionate ransom to stop it. Well, not quite. In reality, the Cloud-Aerosol Lidar and Infrared Pathfinder Satellite Observations (CALIPSO) satellite has indeed been orbiting the Earth since 2006, and is indeed sending down constant bursts of laser light from space.

But it’s not to boil the world’s oceans or cause some kind of devastating tidal wave that only Daniel Craig can stop. Instead, the satellite (the work of NASA and the French space agency, the Centre National d’Études Spatiales, or CNES) is being used by scientists to carry out an unprecedented, decade-long study into the world’s largest animal migration. That migration, as Mike Behrenfeld, the project lead and a senior research scientist and professor at Oregon State University, points out, just so happens to take place in the ocean.

“The migration is made up of countless numbers of zooplankton, which are marine animals consisting of squid, small fish, and things like that,” Behrenfeld told Digital Trends. “They hide in the dark, deep ocean during the day to avoid visual predators. Then every night they swim up to the surface to feed on phytoplankton (read: microscopic marine algae which provide food for sea creatures including whales, shrimp, snails, and jellyfish). When the sun comes up, they swim down to the deepest ocean again to hide.”

The power of space lidar

This migration pattern, called diel vertical migration, has been known to science for around 200 years. Ships that trawled with nets night and day caught vastly different quantities of plankton depending on the time. During World War II, the United States Navy noticed strange sonar readings that it initially thought might be enemy submarines. With help from biologists, it later discovered that the readings were caused by the dense movement of large numbers of marine animals. Until now, however, it has never been possible to observe these changes over a long duration.

That’s where space lidar comes in. A lidar system employs a sensor and a series of laser pulses to measure reflected light. Its most widely publicized application is in self-driving cars, where it helps vehicles build up a three-dimensional representation of the world around them. However, atmospheric lidar has been used for decades to measure atmospheric details such as aerosol particles, ice crystals, water vapor, and more. This is what the CALIPSO satellite was originally developed to do: to measure clouds and aerosols.

Behrenfeld and his colleagues had a different idea, though. “What we [used it for was to look] at the difference in the optical properties measured by the instrument between day and night,” he said. “The idea is that the light-scattering signal that we measured during the day is going to reflect the organisms that are there all the time. The difference between the day and night signal is the addition of these migrating animals.”
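The day-versus-night comparison Behrenfeld describes boils down to a subtraction, which can be sketched in a few lines of Python. This is only an illustration of the idea, not the team's actual processing: the function name, the region labels, and the numbers are all hypothetical, and real lidar data would need calibration and quality control before any such comparison.

```python
def migrating_signal(day, night):
    """Return the night-minus-day scattering signal for each region.

    Assumption: `day` and `night` map a region label to a
    light-scattering value in the same (arbitrary) units.
    The daytime value stands in for organisms that are always present;
    the excess at night is attributed to migrating animals.
    """
    return {
        region: night[region] - day[region]
        for region in day
        if region in night  # only compare regions sampled both day and night
    }


# Made-up illustrative numbers, NOT CALIPSO measurements.
day_signal = {"region_a": 1.0, "region_b": 2.0}
night_signal = {"region_a": 1.5, "region_b": 2.25}

difference = migrating_signal(day_signal, night_signal)
for region, value in sorted(difference.items()):
    print(f"{region}: night-day difference = {value}")
```

A larger difference for a region would suggest more animals arriving at the surface after dark there, which is the kind of pattern the study tracked across the globe.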

Behrenfeld notes that plenty of lidar observations of the ground have been made using systems rigged to airplanes or helicopters. In this capacity, lidar has been utilized for everything from studying melting polar ice caps to finding lost cities beneath dense jungle cover.

“But looking at the ocean using space lidar?” he said. “That’s pretty brand new. The beauty of the satellite is that you get global coverage, pole to pole, every 16 days. You simply can’t come close to that with an airplane, a ship, or any other method. It was the power of this global coverage that allowed us to do something very different to what had been done before: to look at the pattern of these things globally and how they change everywhere on the planet, from month to month, for ten years. There’s no other way to do that.”

The team’s work (published in Nature) uncovered some fascinating insights, such as that there are fewer vertically migrating animals in lower-nutrient and clearer waters. However, the use of lidar to measure this biological conveyor belt of animals from the deep sea may be of interest to more than just marine biologists. The migration also creates a pathway for moving photosynthetically captured carbon dioxide from the surface to the longer-term carbon storage pools at depth.

During the day, phytoplankton photosynthesize and absorb massive amounts of carbon dioxide. This contributes to the ocean’s ability to absorb atmospheric greenhouse gases. When the phytoplankton are eaten by migrating animals, which then swim down and excrete the carbon at depth, that carbon is trapped in the deep ocean rather than being released back into the atmosphere. Understanding this process could therefore be of interest to climate modelers.

Taking things to the next level

Going forward, Behrenfeld hopes this will be just a proof of concept for additional, more detailed work. “The instrument that we used for this study was not designed for oceans at all. We just piggybacked off something that was already up there to showcase the idea,” he said. “There are definitely better technologies that could give us a ton more information about ocean ecosystems if we could build one of these things for actually doing ocean study.”

For instance, the current technology is able to probe only the top 22 meters of the ocean (around 70 feet). While that’s fine for capturing clouds at the right resolution, it’s not sufficient for the kind of high-quality data that Behrenfeld would ideally like to collect.

“You can make lidar measurements that have centimeter vertical resolution,” he said. “For the ocean, what would be really great would be something that can give us information on the vertical structure of plankton every few meters, down to some relatively deep depths like the depth that sunlight penetrates. That would be amazing.”

The technology does exist. NASA’s Langley Research Center has demonstrated how something called High Spectral Resolution Lidar (HSRL) can be used for oceanic research — although this has yet to be carried out using a space lidar. When this next generation of oceanic laser scanning does take place, Behrenfeld is excited about the possibilities. After all, who knows what we’ll find down there?
