The project is called Google Neural Machine Translation, or GNMT, and it isn’t, strictly speaking, new. It was first employed to improve the efficiency of single-sentence translations, explained Google engineers Quoc V. Le and Mike Schuster, and did so by ingesting individual words and phrases before spitting out a translation. But the team discovered that the algorithm was just as effective at handling entire sentences, reducing translation errors by an average of 60 percent. Better still, it was able to fine-tune its accuracy over time. “You don’t have to make design choices,” Schuster said. “The system can entirely focus on translation.”

In a whitepaper published on Monday, the Google Brain team detailed the ins and outs of GNMT. Under the hood is long short-term memory, or LSTM, a type of recurrent neural network that works a bit like human memory. Conventional translation algorithms divide a sentence into individual words, which are matched against a dictionary, but LSTM-powered systems like Google’s new translation algorithm are able to “remember,” in effect, the beginning of a sentence when they reach the end. Translation thus happens in two stages: GNMT first encodes a sequence of words into an internal representation, then decodes that representation into another language.
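To make that “remembering” more concrete, here is a minimal sketch of a single textbook LSTM cell in plain Python. It is not Google’s implementation: the class name `TinyLSTM`, the sizes, and the random, untrained weights are hypothetical, chosen only for illustration. The cell carries a memory vector `c` from word to word, partially forgetting it (forget gate `f`) and partially overwriting it (input gate `i`) at each step.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class TinyLSTM:
    """Hypothetical single-layer LSTM cell using plain lists.

    Weights are random placeholders, not trained values.
    """

    def __init__(self, input_size, hidden_size, seed=0):
        rng = random.Random(seed)
        self.hidden_size = hidden_size
        n = input_size + hidden_size  # each gate sees [x_t, h_{t-1}]
        # One weight matrix and bias per gate: input (i), forget (f), output (o), candidate (g)
        self.W = {gate: [[rng.uniform(-0.5, 0.5) for _ in range(n)]
                         for _ in range(hidden_size)]
                  for gate in "ifog"}
        self.b = {gate: [0.0] * hidden_size for gate in "ifog"}
        # A positive forget-gate bias is a common trick to keep memory by default
        self.b["f"] = [1.0] * hidden_size

    def _affine(self, gate, z):
        # W_gate @ z + b_gate, row by row
        return [sum(w * v for w, v in zip(row, z)) + bias
                for row, bias in zip(self.W[gate], self.b[gate])]

    def step(self, x, h, c):
        z = list(x) + list(h)
        i = [sigmoid(v) for v in self._affine("i", z)]    # how much new content to write
        f = [sigmoid(v) for v in self._affine("f", z)]    # how much old memory to keep
        o = [sigmoid(v) for v in self._affine("o", z)]    # how much memory to expose
        g = [math.tanh(v) for v in self._affine("g", z)]  # candidate new content
        c_new = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
        h_new = [ok * math.tanh(ck) for ok, ck in zip(o, c_new)]
        return h_new, c_new

    def run(self, sequence):
        # Feed the whole sequence, carrying hidden state h and memory c forward
        h = [0.0] * self.hidden_size
        c = [0.0] * self.hidden_size
        for x in sequence:
            h, c = self.step(x, h, c)
        return h, c


# Two sequences that differ only in their first element
lstm = TinyLSTM(input_size=1, hidden_size=4)
h_a, c_a = lstm.run([[1.0], [0.0], [0.0], [0.0], [0.0]])
h_b, c_b = lstm.run([[-1.0], [0.0], [0.0], [0.0], [0.0]])
print(h_a != h_b)  # the first input still shapes the final state
```

Because the forget gate scales old memory by a factor between 0 and 1 instead of discarding it, the first input can still influence the state after the last one. A real translation system would stack several such layers and pair this encoding step with a decoder; this sketch covers only the encoding side.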

GNMT’s approach is a boon for translation accuracy, but historically, such methods haven’t been particularly swift. Google, however, has employed a few techniques that dramatically boost translation speed.

As Wired explains, neural networks usually involve layered calculations, in which the results of one layer feed into the next. Google’s model mitigates that speed bump by completing what calculations it can ahead of time. GNMT also leverages the processing boost provided by the specialized, AI-optimized chips Google unveiled in May. The end result? A sentence that once took ten seconds to translate via this LSTM model now takes 300 milliseconds.

And the improvements in translation quality are tangible. In a test of linguistic precision, Google Translate’s old model achieved a score of 3.6 on a scale of 6. GNMT, meanwhile, scored 5.0, just below the average human translator’s score of 5.1.

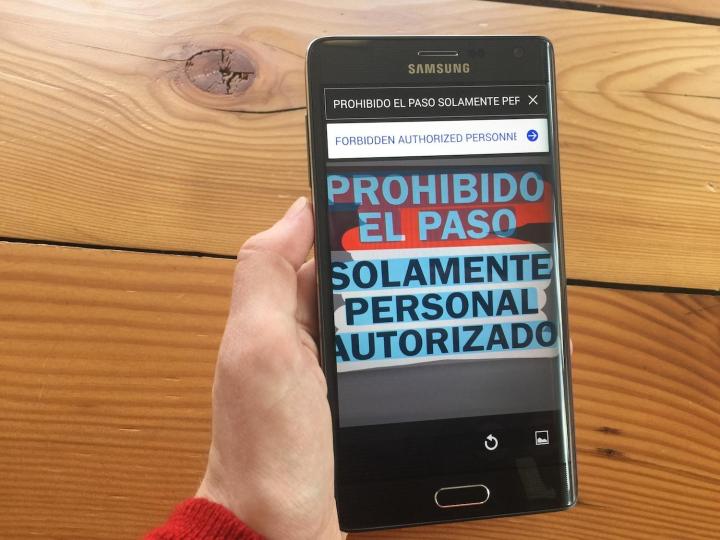

It’s far from perfect, Schuster wrote. “GNMT can still make significant errors that a human translator would never make, like dropping words … mistranslating proper names or rare terms … and translating sentences in isolation rather than considering the context of the paragraph or page.” Preparing it also required a good deal of legwork. Google engineers trained GNMT for about a week on 100 graphics processing units (GPUs), chips optimized for the sort of parallel computing involved in language translation. But Google is confident the model will improve. “None of this is solved,” Schuster said. “But there is a constant upward tick.”

Google isn’t rolling out GNMT-powered translation broadly yet. For now, the method is limited to translations from Mandarin Chinese to English. But the search giant said it will extend AI-powered translation to more languages in the coming months.

GNMT may be the newest product of Google’s machine learning experiments, but it’s hardly the first. Earlier this year, AlphaGo, software produced by the company’s DeepMind division, became the first AI to beat a top professional player, Lee Sedol, at the ancient game of Go. Last summer, Google released DeepDream, a neural network that detects and amplifies faces and patterns in images to uncanny effect. And in August, DeepMind partnered with University College London Hospitals NHS Foundation Trust, part of England’s National Health Service, to improve treatment techniques for head and neck cancer.

Not all of Google’s artificial intelligence efforts are as high-minded. Google Drive uses machine learning to anticipate the files you’re most likely to need at a given time. Calendar’s AI-powered Smart Scheduling suggests meeting times and room preferences based on the calendars of all parties involved. And Docs Explore shows text, images, and other content Google thinks is relevant to the document you’re working on.
