Over the last month, Facebook’s updated algorithms have prompted more than 100 calls to first responders to conduct wellness checks, and that figure doesn’t include posts that were flagged by users. Facebook is now beginning to roll out the A.I. suicide detection system to additional countries outside the U.S. The social media platform says the program will eventually be available worldwide, with the exception of the European Union, because of privacy laws there.

The new algorithms use pattern recognition to flag posts that could be expressing thoughts of suicide, Facebook says. In addition to flagging written posts, the technology looks for signals in both live and pre-recorded video. The program draws on data from the post itself as well as from its comments.

Facebook said it will continue to develop the algorithm to avoid false positives before a post is seen by the human review team. At the same time, artificial intelligence is also being used to prioritize which posts human reviewers see first.

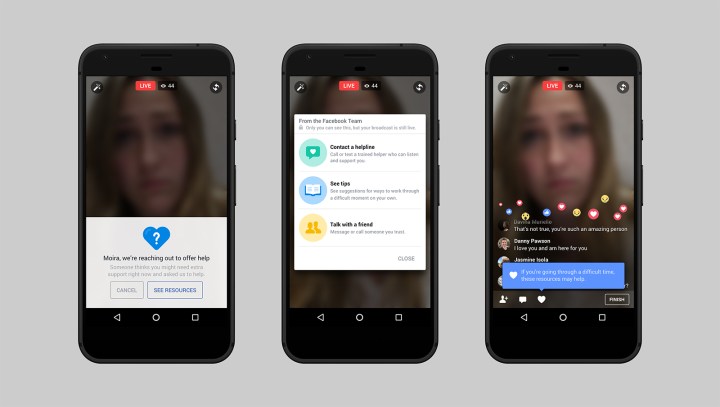

The updates also help review staff assess a post quickly, Facebook says, with tools that show reviewers which portion of a video drew the most reactions. Automation also helps the team quickly access the information needed to contact the appropriate first responders.

The update expands on measures Facebook already had in place, including tools for friends to report a post or reach out to the user, worldwide teams working on those reports and tools developed in collaboration with organizations focused on mental health.
