At Hot Chips, an annual conference for the semiconductor industry, IBM showed off its new Telum processor, which is powering the next generation of IBM Z systems. In addition to eight cores and a massive amount of L2 cache, the processor features a dedicated A.I. accelerator that can detect fraud in real time.

Fraud is on the rise. The Federal Trade Commission (FTC) received 4.7 million consumer reports in 2020, more than 2 million of them fraud reports, with $3.3 billion in reported fraud losses. Telum, according to IBM, addresses this problem by providing real-time detection. IBM used credit card transactions as an example, saying that Telum can detect a fraudulent transaction before it even completes.

IBM says this could lead to “a potentially new era of prevention of fraud at scale.” Although credit card fraud is the most direct application, Telum’s onboard A.I. accelerator can handle other workloads as well. Using machine learning, it can conduct risk analysis, detect money laundering, and handle loan processing, among other things.

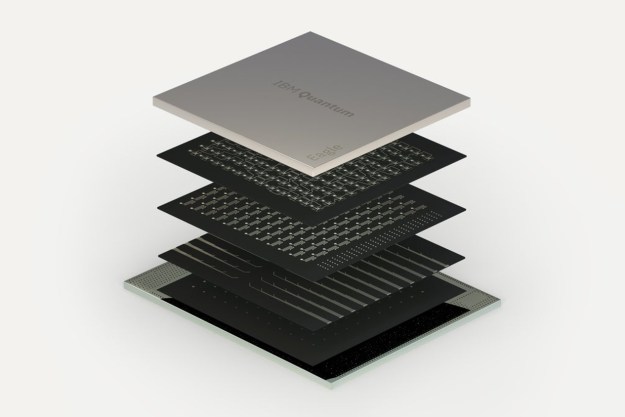

The processor itself looks like a modern chip from AMD or Intel. It features eight cores with simultaneous multithreading running at 5GHz, and each core has 32MB of private L2 cache. A ring, which IBM calls a “data highway,” connects the private cache pools, giving the processor a total of 256MB of cache. The A.I. accelerator is an offshoot of the data ring, passing information to and from the cores with minimal latency.

IBM says applications can scale up to 32 Telum chips, allowing for everything from credit card fraud detection to large-scale risk analysis for banks. Samsung is helping IBM build the processor, using its 7nm extreme ultraviolet process technology.
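The cache and scaling figures above can be checked with quick arithmetic. The sketch below is only a back-of-the-envelope calculation: the constants come from the numbers quoted in this article, the names are illustrative, and summing cache across chips is an assumption made for the sake of the math, not a description of how a multi-chip Telum system actually presents its cache.

```python
# Figures quoted in the article; names are illustrative, not IBM terminology.
CORES_PER_CHIP = 8    # cores per Telum processor
L2_PER_CORE_MB = 32   # private L2 cache per core, in MB
MAX_CHIPS = 32        # maximum chips IBM says applications can scale to


def total_l2_mb(chips: int = 1) -> int:
    """Sum the private L2 cache across `chips` processors, in MB.

    Assumption: a plain sum across cores and chips, ignoring how the
    hardware shares or exposes that cache.
    """
    return chips * CORES_PER_CHIP * L2_PER_CORE_MB


def total_cores(chips: int = 1) -> int:
    """Count the cores across `chips` processors."""
    return chips * CORES_PER_CHIP


if __name__ == "__main__":
    print(f"One chip: {total_cores()} cores, {total_l2_mb()} MB of L2")
    print(f"{MAX_CHIPS} chips: {total_cores(MAX_CHIPS)} cores, "
          f"{total_l2_mb(MAX_CHIPS)} MB of L2")
```

For a single chip this gives the 256MB the article cites. At the 32-chip maximum, the same sum comes to 256 cores and 8,192MB of L2.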

Accelerated computing is quickly catching on, and the new Telum chip is a testament to that. Moore’s Law, the observation that the number of transistors on a chip doubles about every two years, has slowed in the last few years. Architectural improvements aren’t coming fast enough to make up the difference, leading companies to turn to dedicated “accelerators” that are specialized for certain types of work.

Heterogeneous architectures, as they’re called, combine multiple different types of processing units to increase performance and efficiency without moving to a new manufacturing technology. A.I. has seen the accelerator treatment before, but not quite like this. In most cases, the A.I. accelerator is a separate chip entirely.

Telum puts the A.I. accelerator on the processor itself. This allows IBM to share the cache with the accelerator and provide a low-latency interface where it can communicate directly with the CPU cores. In applications where enterprises are relying on algorithms, A.I. can speed up the process. And in applications where enterprises are using A.I., Telum can help decrease the computing overhead and time required.

The first Telum machines will show up in the IBM Z mainframe starting in 2022. For most, it will be an invisible transition to a higher level of computing power. If Telum marks a “new era,” as IBM suggests, it could mean much more.
