Google acquired the London-based AI startup DeepMind, which helmed the project, early in 2014. The program was set to play 49 games on the Atari 2600 console with no instructions. Left to its own devices, the AI was able to best expert human players in 29 of the games, and outperformed the best known algorithms for 43.

Rather than programming in particular strategies for each game, the researchers paired a general AI with a memory and a reward system. After completing a level or achieving a high score, the system is rewarded, which encourages it to repeat what worked. With clear, measurable goals and the ability to refer to its own memory and adjust its behavior based on what happens, Google’s system is able to train itself without human supervision. That is a major leap ahead of other machine learning projects, which generally require people to provide feedback. You can dig a little deeper into the tech and see the improvement in action for Breakout on the Google Research Blog.
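
For readers who want a feel for how a memory and a reward system fit together, here is a minimal sketch in Python. It is not DeepMind's system, which learns from raw screen pixels with a deep neural network. This is plain tabular Q-learning with a small replay memory, run on a made-up five-cell corridor "game" where the only reward comes from reaching the last cell. Every name and number in it (`step`, `train`, `N_CELLS`, the learning rate and so on) is an assumption chosen for illustration.

```python
import random
from collections import deque

# Hypothetical toy game: a corridor of cells 0..4. Reaching cell 4 ends the
# episode and is the only event that pays a reward.
N_CELLS = 5
ACTIONS = (0, 1)  # 0 = step left, 1 = step right


def step(state, action):
    """Apply an action and return (next_state, reward, done)."""
    nxt = max(0, state - 1) if action == 0 else state + 1
    done = nxt == N_CELLS - 1
    return nxt, (1.0 if done else 0.0), done


def choose_action(q, state, epsilon, rng):
    """Mostly pick the best-known action, sometimes explore at random."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])


def train(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, batch=8, seed=0):
    """Tabular Q-learning with a replay memory (illustrative settings only)."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_CELLS) for a in ACTIONS}
    memory = deque(maxlen=500)  # the "memory": the most recent experiences
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the length of one episode
            action = choose_action(q, state, epsilon, rng)
            nxt, reward, done = step(state, action)
            memory.append((state, action, reward, nxt, done))
            # Replay a random handful of remembered experiences and nudge
            # each value estimate toward reward plus discounted future value.
            sample = rng.sample(list(memory), min(batch, len(memory)))
            for s, a, r, s2, d in sample:
                future = 0.0 if d else gamma * max(q[(s2, b)] for b in ACTIONS)
                q[(s, a)] += alpha * (r + future - q[(s, a)])
            state = nxt
            if done:
                break
    return q


def greedy_policy(q):
    """Best-known action for each non-goal cell."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_CELLS - 1)]


if __name__ == "__main__":
    print(greedy_policy(train()))
```

Nobody tells the agent that "right" is the way to go. It stumbles onto the reward by chance, and replaying its memories spreads that information back to the earlier cells.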

Demis Hassabis, a DeepMind co-founder and now VP of engineering at Google, said that this is the “first time anyone has built a single learning system that can learn directly from experience and manage a wide range of challenging tasks.” Creating intelligent systems that can navigate unexpected circumstances, rather than simply performing prescribed tasks, is a holy grail for AI research, and this is a major step toward that goal.

Games provide a perfect framework for training these types of flexible AIs for the same reasons that they can serve as a useful pedagogical tool for humans. As simulations, games can recreate the noisy and dynamic environments that an intelligence may have to deal with, while structuring that complexity into manageable systems and discrete goals. The researchers described games’ utility to the journal Nature as a way to “derive efficient representations of the environment from high-dimensional sensory inputs, and use these to generalize past experience to new situations.”

With the 2D worlds of ’80s Atari games mastered, Hassabis plans to move up into the 3D games of the ’90s. He is particularly excited about driving games, because of Google’s vested interest in self-driving cars. “If this can drive the car in a racing game, then potentially, with a few real tweaks, it should be able to drive a real car. That’s the ultimate aim.”

Google is one of the leading hubs of artificial intelligence research in the world today. In addition to acquiring DeepMind, over the last few years the internet search giant has invested millions in other AI startups and in a partnership with Oxford University. Hiring inventor, futurist, and chief Singularity proselytizer Ray Kurzweil as director of engineering in 2012 sent a clear signal about the company’s blue-sky ambitions for the disruptive future of artificial intelligence.

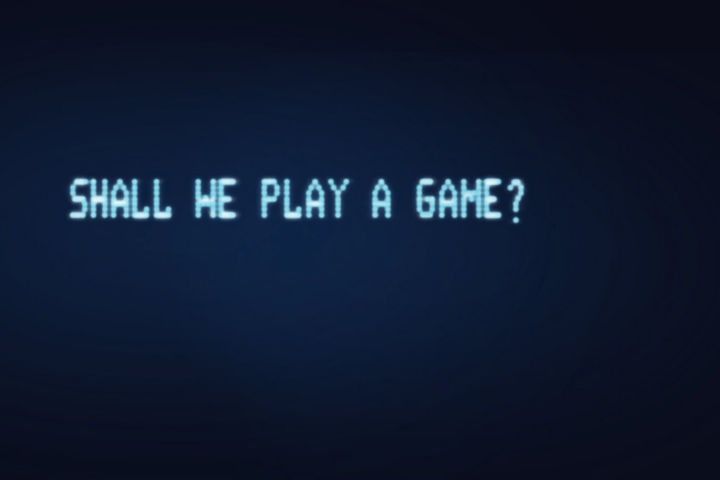

The concept for DeepMind’s gaming program is remarkably similar to the premise of the 1983 film WarGames, in which an AI called WOPR (War Operation Plan Response), designed to provide strategic oversight of the American nuclear missile defense, was trained by playing games like chess and tic-tac-toe. WOPR almost turned the Cold War into World War 3 due to a misunderstanding, so let’s hope that Google and the government never decide to weaponize the project, or at least teach it about no-win scenarios first.

In any case, it is only a matter of time now before the King of Kong is dethroned by a machine in a Kasparov/Deep Blue kind of situation. Is nothing sacred?
