Computing pioneer Dennis Ritchie died this past weekend at age 70, becoming the second technology giant to pass within a week — the other, of course, being Apple’s Steve Jobs. Although Jobs was unquestionably the better-known figure, Ritchie was the creator of the C programming language and one of the primary developers of the Unix operating system, both of which have had profound impacts on modern technology. Unix and C lie at the heart of everything from Internet servers to mobile phones, set-top boxes and software. They have exerted tremendous influence on almost all current languages and operating systems. And, these days, computers are everywhere.

The coinciding events lead to an obvious question: Who was more important to modern technology, Ritchie or Jobs? It’s a classic apples-to-oranges question… but the search for an answer sheds a bit of light on what led to the high-tech revolution and all the cool toys we have today.

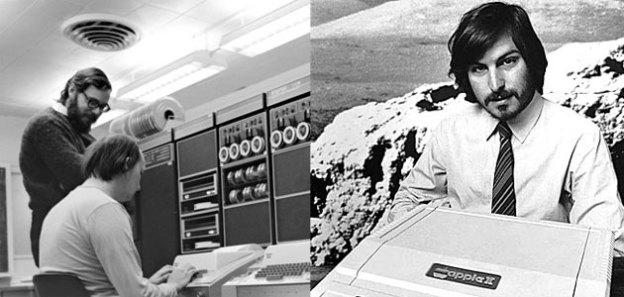

Dennis Ritchie, Unix, and C

Dennis Ritchie was a computer scientist in the truest definition: He earned a degree in physics and applied mathematics from Harvard in the 1960s and followed his father to work at Bell Labs, which was one of the hotbeds of tech development in the United States. By 1968 Ritchie had completed his Ph.D., and from 1969 to 1973 he developed the C programming language for use with the then-fledgling Unix operating system. The language was named C because it developed out of another language called B, created by Ken Thompson (with some input from Ritchie) for use with Multics, a Unix precursor. So, yes, even the name is geeky.

Both Multics and Unix were developed for early minicomputers. Of course, they were “mini” in name only: Back in the early 1970s, a “minicomputer” was a series of cabinets that dominated a room, made more noise than an asthmatic air conditioner, and had five- and six-figure price tags. The processing and storage capacities of those systems are utterly dwarfed by commonplace devices today: An average calculator or mobile phone has thousands-to-millions of times the storage and processing capability of those minicomputers. Minicomputers’ memory and storage constraints meant that, if you wanted to develop a multitasking operating system that could run several programs at once, you needed a very, very efficient implementation language.

Initially, that meant assembly: low-level, processor-specific languages that have a nearly one-to-one mapping with machine language, the actual instructions executed by computer processors. (Basically, when people think of utterly incomprehensible screens of computer code, they’re thinking of assembler and machine code.) Ritchie’s C enabled programmers to write structured, procedural programs using a high-level language without sacrificing much of the efficiency of assembler. C offers low-level memory access, requires almost no run-time support from an operating system, and compiles in ways that map very well to machine instructions.

If that were all C did, it probably would have been little more than a fond footnote in the history of minicomputers, alongside things like CPL, PL/I, and ALGOL. However, the Unix operating system was being designed to be ported to different hardware platforms, and so C was also developed with hardware portability in mind. The first versions of Unix were primarily coded in assembler, but by 1973 Unix had been almost completely rewritten in C. The portability turned out to be C’s superpower: Eventually, a well-written program in standard C could be compiled across an enormous range of computer hardware platforms with virtually no changes. In fact, that’s still true today. As a result, C compilers have been available for nearly every computer hardware platform for the last three decades, and learning C is still a great way to get into programming for a huge number of platforms. C remains one of the most widely used programming languages on the planet.

The popularity of C was tied tightly to the popularity of Unix, along with its many offshoots and descendants. Today, you see Unix not only in the many distributions of Linux (like Red Hat and Ubuntu) but also at the core of Android as well as Apple’s iOS and Mac OS X. However, Ritchie made another tremendous contribution to C’s popularity as the co-author with Brian Kernighan of The C Programming Language, widely known as the “K&R.” For at least two generations of computer programmers, the K&R was the definitive introduction to not just C, but to compilers and general structured programming. The K&R was first published in 1978, and despite being a slim volume, set the standard for excellence in both content and quality. And if you’ve ever wondered why almost every programming reference or tutorial starts out with a short program that displays “Hello world”… just know it all started with K&R.

To be sure, neither Unix nor C is beyond criticism: Ritchie himself noted “C is quirky, flawed, and an enormous success.” Both C and Unix were developed for use by programmers and engineers with brevity and efficiency in mind. There’s almost nothing user-friendly or accessible about either Unix or C. If you want to stun non-technical computer users into cowed silence, a Unix command prompt or a page of C code is guaranteed to do the job. C’s low-level power can also be its Achilles’ heel: for instance, C (and derivatives like C++) offers no bounds-checking or other protection against buffer overflows, which means many of the potential security exploits common these days can often be traced back to C… or, at least, to programmers using C and its descendants. Good workmen don’t blame their tools, right?

But the simple fact is that Unix and C spawned an incredibly broad and diverse ecosystem of technology. Microcontrollers, security systems, GPS, satellites, vehicle systems, traffic lights, Internet routers, synthesizers, digital cameras, televisions, set-top boxes, Web servers, the world’s fastest supercomputers — and literally millions of other things… the majority descend from work done by Dennis Ritchie. And that includes a ton of computers, smartphones, and tablets — and the components within them.

Steve Jobs and the rest of us

Steve Jobs’ legacy is (and will continue to be) well-documented elsewhere: As co-founder and long-time leader of Apple, as well as a technology and business celebrity enveloped in a cult of personality, Jobs’ impact on the modern technology world is indisputable.

However, Jobs’ contributions are an interesting contrast to Ritchie’s. Ritchie was about a decade-and-a-half older than Jobs, and got started in technology at a correspondingly earlier date: When Ritchie started, there was no such thing as a personal computer. Although a perfectionist with a keen eye for design and usability — and, of course, a charismatic showman — Jobs was neither a computer scientist nor an engineer, and didn’t engage in much technical work himself.

There’s a well-known anecdote from Jobs’ pre-Apple days, when he was working at Atari to save up money for a trip to India. Atari gave Jobs the task of designing a simpler circuit board for its Breakout game, offering him a bonus of $100 for every chip he could eliminate from the design. Jobs’ response — not being an engineer — was to take the work to long-time friend and electronics hacker Steve Wozniak, offering to split the $100-per-chip bounty with him. The incident is illustrative of Jobs’ style. In creating products, Jobs didn’t do the work himself: He recognized opportunities, then got the best people he could find to work on them. Woz reportedly cut more than four dozen chips from the board.

Wozniak and Jobs founded Apple in 1976 (with Ronald Wayne), just as Unix was graduating from research project status at AT&T to an actual product, and before K&R was initially published. But even then, Jobs wasn’t looking at the world of mainstream computing, at least as it existed in 1976. Apple Computer (as it was known then) was about personal computers, which were essentially unknown at the time. Jobs realized there was a tremendous opportunity to take the technology that was then the realm of engineers and computer scientists like Ritchie (and, to be fair, Wozniak) and make it part of people’s everyday lives. Computers didn’t have to be just about numbers and payrolls, balance sheets and calculations. They could be entertaining, communication tools, even artful. Jobs didn’t see computers as empowering only to large corporations and industry. They could be empowering to small businesses, education, and everyday people. And, indeed, Apple Computer did jumpstart personal computers, with the Apple II essentially defining the industry, even if it was later eclipsed by IBM and IBM-compatible systems.

With the Apple Lisa and (much more successfully) the Apple Macintosh, Jobs continued to extend that idea. Unix and its brethren were inscrutable and intimidating; with the Macintosh, Jobs set out to make a “computer for the rest of us.” As we all know, the Macintosh redefined the personal computer as a friendly, intuitive device that was immediately fun and useful, and to which many users formed a personal connection. Macs didn’t just work, they inspired.

Jobs was forced out of Apple shortly after the Mac’s introduction (and, indeed, Apple spent many years literally building beige boxes while Microsoft worked on its own GUI), but his return to the company brought back the same values. With the original iMac, Macintosh design regained its flair. With the iPod, Apple was able to meld technology and elegant design with many consumers’ obsession, popular music, and when Apple finally turned its attention to the world of mobile phones, the results were an undeniable success. It’s not much of an exaggeration to say that the bulk of the PC industry has been following Apple’s lead for at least the last dozen years (even longer, when it comes to notebooks), and Apple was never matched in the portable media player market. Similarly, Android might be the world’s leading smartphone platform, but there’s no denying the Apple iPhone is the world’s leading smartphone, and the iPad defined (and still utterly dominates) the tablet market.

As with the original Mac, the Apple II, and even that old Atari circuit board, Jobs didn’t do these things himself. He turned to the best people he could find and worked to refine and focus their efforts. In his later years, that involved remaking Apple Computer into Apple, Inc., and applying his razor-sharp sense for functionality, purpose, and design to a carefully selected range of products.

Who wins?

Dennis Ritchie eventually became the head of Lucent Technologies’ Software System Research Department before retiring in 2007; he never led a multibillion-dollar corporation, sought the public eye, or had his every utterance scrutinized and re-scrutinized. Ritchie was by all accounts a quiet, modest man with a strong work ethic and dry sense of humor. But the legacy of his work played a key role in spawning the technological revolution of the last forty years, including technology on which Apple went on to build its fortune.

Conversely, Steve Jobs was never an engineer. Instead, his legacy lies in democratizing technology, bringing it out of the realm of engineers and programmers and into people’s classrooms, living rooms, pockets, and lives. Jobs literally created technology for the rest of us.

Who wins? We all do. And now, it’s too late to personally thank either of them.
