Researchers from IBM Research, the University of Waterloo, and the Technical University of Munich just proved quantum computing‘s mantra of “I can do what you can do, only better” true. Described as a major milestone in computer science history, the researchers ran an experiment, proving for the first time with a tangible example, that a quantum computer can do tasks that classical computers cannot. Prior to publication of this research, the benefits of quantum computing were mainly described in theoretical terms.

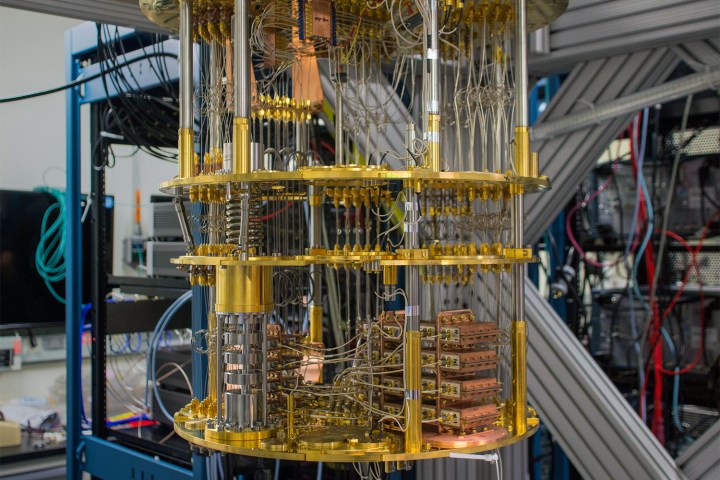

A quantum computer is described as a computer that uses quantum-mechanical phenomena, according to Wikipedia. Unlike a traditional computer, which encodes data into binary bits, quantum computers uses quantum bits, also known as qubits. “In a quantum computer, however, a bit can be both zero and one at the same time,” TechXplore noted. “This is because the laws of quantum physics allow electrons to occupy multiple states at one time. Quantum bits, or qubits, thus exist in multiple overlapping states.”

Quantum circuits are designed with a trade-off between the number of qubits on a circuit and the number of operations that can be performed on those qubits, Motherboard explained. This is known as the depth of a circuit, and increasing the number of qubits, or depth, will increase the computational abilities of a quantum computer. However, because of the trade-off, increasing the qubits would limit the number of operations, resulting in a shallow depth. This makes it hard to prove quantum computing’s benefit over classical computers in the past.

To prove that quantum computer is able to achieve tasks that classical computers can’t, the researchers used an algorithm based on the Bernstein-Vazirani problem. The problem would have been impossible for a classical computer to solve at a constant depth — a classical computer would require the circuit depth to grow.

However, by using the non-locality idea in quantum physics, Konig and his team designed a quantum circuit consisting of smaller, or shallow, parallel circuits. Combined, these circuits are still considered to be a single system based on the idea of nonlocality, and the system was able to solve the problem using a fixed number of operations. This means that the quantum computer was successfully able to solve the challenge using a “constant depth.”

“So as you increase the number of input bits, the depth of the quantum algorithm that solves the problem remains constant,” IBM Research researcher Segey Bravyi explained to TechCrunch.

Still, it will likely take years, if not decades, to deliver real-world results that take full advantage of the benefits of quantum computing. “Our result shows that quantum information processing really does provide benefits — without having to rely on unproven complexity-theoretic conjectures,” researcher Robert Konig from the Technical University of Munich said, according to Science Daily. Konig’s paper, titled “Quantum advantage with shallow circuits,” was co-authored by Bravyi of IBM Research and David Gosset of the University of Waterloo’s Institute for Quantum Computing.

Editors' Recommendations

- Scientists just achieved a breakthrough in quantum computing

- IBM’s new 127-qubit processor is a major breakthrough in quantum computing

- You can now replay PS3 classics with AMD Super Resolution

- The best cheap computers available right now

- Microsoft’s new quantum chip could help control thousands of qubits