One might think that a GPU that costs over $40,000 is going to be the best graphics card for gaming, but the truth is a lot more complex than that. In fact, this Nvidia GPU can’t even keep up with integrated graphics solutions.

Now, before you get too upset, you should know I’m referring to Nvidia’s H100, which houses the GH100 chip (Hopper). It’s a powerful data center GPU made to handle high-performance computing (HPC) tasks, not to power PC games. It doesn’t have any display outputs, and despite its extensive capabilities, it doesn’t have a built-in cooler either. This is because, again, you’d find this GPU in a data center or server setting, where it’d be cooled by powerful external fans.

While it “only” has 14,592 CUDA cores (which is fewer than the RTX 4090), it also has an insane amount of VRAM and a massive bus. In total, the GPU sports 80GB of HBM2e memory, split into five HBM stacks, each connected to a 1024-bit bus. Unlike Nvidia’s consumer GPUs, this card also still has NVLink, which means it can be connected to work seamlessly in multi-GPU systems.
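As a quick sanity check on those memory figures, the total bus width follows from the stack count and the per-stack width. Here is a minimal Python sketch of that arithmetic; the variable names are illustrative, and the even split of capacity across stacks is an assumption made for the calculation rather than a figure from the test.

```python
# Figures quoted in the paragraph above; names are illustrative only.
HBM_STACKS = 5
BUS_BITS_PER_STACK = 1024
TOTAL_VRAM_GB = 80

# Total memory bus width is the sum of the per-stack interfaces.
total_bus_bits = HBM_STACKS * BUS_BITS_PER_STACK

# Assumption: capacity is divided evenly across the stacks.
vram_per_stack_gb = TOTAL_VRAM_GB / HBM_STACKS

print(f"Total memory bus width: {total_bus_bits}-bit")
print(f"VRAM per stack: {vram_per_stack_gb:.0f}GB")
```

That works out to a 5120-bit bus, far wider than anything found on a consumer card.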

The question remains: Why exactly is this type of GPU quite so bad at general usage and games?

To demonstrate the case, YouTuber Gamerwan was given four of these H100 graphics cards to test, and decided to put one in a regular Windows system to check out its performance. This was a PCIe 5.0 model, and it had to be paired with an RTX 4090 due to the lack of display outputs. Gamerwan also 3D-printed a custom-designed external cooler to keep the GPU running smoothly.

It takes a bit of work to even get the system to recognize the H100 as a proper GPU, but once Gamerwan managed to overcome the obstacles, he was also able to turn on ray tracing support. However, as the testing later shows, there’s not much support for anything else on a non-data center platform.

In a standard 3DMark Time Spy test, the GPU only hit 2,681 points. For comparison, the average score for the RTX 4090 is 30,353 points. This score puts the H100 somewhere between the consumer GTX 1050 and GTX 1060. More importantly, it’s almost the same as AMD’s Radeon 680M, which is an integrated GPU.
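To put that gap in perspective, the two Time Spy scores can be compared directly. The short Python sketch below uses only the scores quoted above; the variable names are illustrative, and a single benchmark ratio is a rough indicator rather than a full performance comparison.

```python
# Time Spy scores quoted in the paragraph above; names are illustrative only.
H100_SCORE = 2681
RTX_4090_AVG_SCORE = 30353

# Fraction of the RTX 4090's average score that the H100 reached.
share = H100_SCORE / RTX_4090_AVG_SCORE

# How many times larger the RTX 4090's average score is.
gap = RTX_4090_AVG_SCORE / H100_SCORE

print(f"H100 reaches {share:.1%} of the RTX 4090's average score")
print(f"The RTX 4090's average score is about {gap:.1f}x the H100's")
```

In other words, the H100 manages under a tenth of the RTX 4090's average result in this test.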

The gaming tests also went poorly, with the graphics card hitting an average of 8 frames per second (fps) in Red Dead Redemption 2. The lack of software support rears its ugly head here — although the H100 can run at a maximum of 350 watts, the system can’t seem to push it past 100W, resulting in hugely throttled performance.

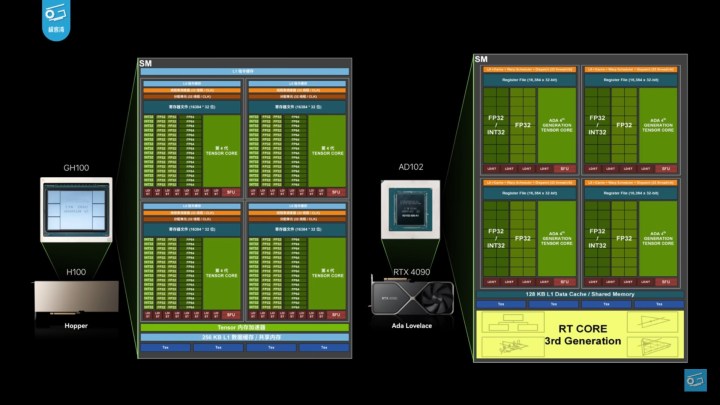

There are a few different reasons for this poor showing in games. For one, although the H100 is an ultra-strong graphics card on paper, it’s very different on an architectural level from the AD102 GPU that powers the RTX 4090. It only has 24 render output units (ROPs), which is a significant downgrade from the 160 ROPs that the RTX 4090 offers.

Nvidia’s consumer GPUs receive a lot of support on the software side in order to run well. This includes drivers, but also system support from developers — both in games and in benchmark programs. There are no drivers that optimize the performance of this GPU for gameplay, and the result is, as you can see, extremely poor.

We’ve already seen the power of drivers with Intel Arc, where the hardware has remained the same, but improved driver support delivered performance gains that made Arc an acceptable choice if you’re buying a budget GPU. With no Nvidia Game Ready drivers and a lack of access to the rest of Nvidia’s software stack (including the ever-impressive DLSS 3), the H100 is a $40,000 GPU that has no business running any sort of game.

In essence, this is a computing GPU, and not a graphics card in the same way that most of us know them. It was made for all kinds of HPC tasks, with a strong focus on AI workloads. Nvidia maintains a strong lead over AMD where AI is concerned, and cards like the H100 play a big part in that.