Of all the fields that artificial intelligence will disrupt in the coming years, healthcare may see the greatest paradigm shift. AI’s influence in the industry will be deep and broad. Image-recognition algorithms already help detect diseases at an astonishing rate. Now, a few startups are using intelligent machines to redesign the clinic, redefine the role of the practitioner, and reposition the patient in relation to her own health.

The shift should be welcome. At first glance, AI will bring unprecedented well-being to people around the world. But with progress comes caution about letting machines infiltrate an inherently human endeavor and gain access to our most intimate selves.

A doctor in your pocket

The doctor-patient relationship hasn’t changed much since Hippocrates first penned his medical oath over two thousand years ago. Patients feel ill, go to the clinic, and point to where it hurts. Doctors check vitals, probe a bit, ask a couple of questions, offer a diagnosis, and maybe write a prescription. There’s suffering on the side of the patient, sympathy from the practitioner, and a collective fight against disease.

But physicians are busy and patients can be impatient. Patients often book consultations for illnesses that would’ve passed with a little extra rest, and they regularly fail to follow through with treatments once they leave the clinic. Online symptom checkers make digital societies rife with spurious self-diagnoses. Shortages of trained physicians and ties to traditional medicine make developing regions particularly prone to disease.

Your.MD and Babylon Health are two London-based digital health startups with the same ambitious goal — fix broken healthcare systems. They’re doing this by cutting down on unnecessary consultations, creating an advanced medical data model, and developing AI that they hope can engage patients just like a physician.

Your.MD claims it has already built the largest ever medical map linking probabilities between symptoms and conditions. Its chatbot uses machine-learning algorithms and natural-language processing to understand and engage its users. The application comes pre-installed on all Galaxy phones thanks to ongoing partnerships with Samsung, and the startup insists it will always be available for free. It’s a sophisticated system, but still a work in progress, continuing to crowdsource medical information from physicians.

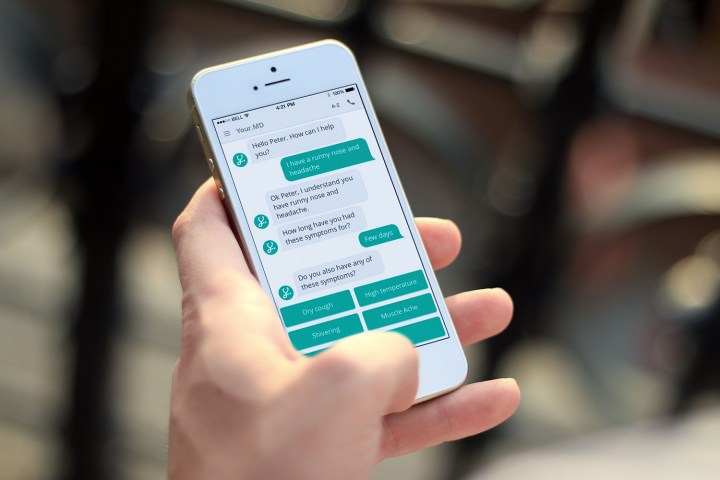

At a deep-learning conference in London last week, Your.MD CEO Matteo Berlucchi demonstrated his product as he created a new account. The chatbot asked a series of casual questions about the user’s age, gender, and health complaints. From here the system seamlessly filled in a user profile, intended to save patients time spent filling in medical forms.

Imagine Your.MD’s AI is networked like a doctor’s mind. A dense medical vocabulary library helps it pinpoint the patient’s symptoms. Depending on variables like age, gender, location, and time of year, the AI personalizes its questions for each case. The expansive medical map and patient profile help the AI determine the probability that these symptoms signal this or that condition. Once an informal diagnosis is made, the system pulls data from the National Health Service to offer suggestions and helps patients connect with physicians.
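As a rough illustration of how a map of symptom-condition probabilities can be combined with context such as time of year, here is a minimal naive Bayes sketch. It is not Your.MD's actual method: the conditions, the probabilities, the priors, and the names `SYMPTOM_GIVEN_CONDITION`, `BASE_PRIOR`, `FLU_SEASON_PRIOR`, and `rank_conditions` are all invented for this example.

```python
# Hypothetical toy "medical map": P(symptom present | condition).
# All numbers are invented for illustration, not medical data.
SYMPTOM_GIVEN_CONDITION = {
    "common cold": {"headache": 0.3, "runny nose": 0.9, "fever": 0.2},
    "influenza": {"headache": 0.7, "runny nose": 0.5, "fever": 0.9},
    "migraine": {"headache": 0.95, "runny nose": 0.05, "fever": 0.05},
}

# Hypothetical priors: how likely each condition is before any symptoms
# are reported. A second prior stands in for "time of year".
BASE_PRIOR = {"common cold": 0.6, "influenza": 0.1, "migraine": 0.3}
FLU_SEASON_PRIOR = {"common cold": 0.5, "influenza": 0.3, "migraine": 0.2}


def rank_conditions(symptoms, prior=BASE_PRIOR):
    """Return conditions ranked by posterior probability.

    `symptoms` maps a symptom name to True (reported) or False (denied).
    Symptoms are treated as independent given the condition, which is the
    simplifying "naive" assumption of naive Bayes.
    """
    scores = {}
    for condition, p_condition in prior.items():
        likelihoods = SYMPTOM_GIVEN_CONDITION[condition]
        score = p_condition
        for symptom, present in symptoms.items():
            p_symptom = likelihoods[symptom]
            score *= p_symptom if present else (1.0 - p_symptom)
        scores[condition] = score

    # Normalize so the posteriors sum to 1, then sort most likely first.
    total = sum(scores.values())
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return {condition: score / total for condition, score in ranked}


if __name__ == "__main__":
    reported = {"headache": True, "runny nose": True, "fever": True}
    print(rank_conditions(reported))
    print(rank_conditions(reported, prior=FLU_SEASON_PRIOR))
```

With the same reported symptoms, switching from the base prior to the flu-season prior changes which condition ranks first, which is the kind of personalization by context the paragraph above describes.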

“We can now answer a patient’s questions like a doctor can,” Berlucchi said. Backed by machine learning, the system can learn like a doctor, assimilating and applying knowledge gained from one patient’s case to another’s.

The team over at Babylon Health is busy developing what they call “the world’s most accurate medical artificial intelligence.” They plan to launch the feature as an addition to their freemium digital healthcare app in November.

Babylon Health CEO Ali Parsa showed off the chatbot in London, answering queries regarding a headache he’d reported earlier. The bot followed up like a well-trained physician, or perhaps a physician’s assistant. It led Parsa through questions and answers, and finally advised him that his headache may be irritating but shouldn’t cause concern. It offered to book a consultation if he still wished. “It just went through billions of variations of symptoms [to make that suggestion],” Parsa said.

Doctor’s note

Not all doctors are optimistic about medical chatbots, however, and it isn’t just the disastrous display of Microsoft’s Tay that has them worried.

In an interview with MIT Technology Review, practitioner and educator Dr. Clare Aitchison said, “While it’s true that computer recall is always going to be better than that of even the best doctor, what computers can’t do is communicate with people … People describe symptoms in very different ways depending on their personalities.” In other words, there’s something to be said for a doctor’s intuition and human relationship with her patient.

“Either [the AI] will be too sensitive and result in increased attendance at the doctor’s, in which case there isn’t much point to it, or it won’t be sensitive enough and will result in missed serious diagnoses.”

For these reasons, neither Your.MD nor Babylon intends to replace doctors with AI. Both partner with practicing physicians to make sure serious conditions get professional attention. Current regulations will keep the AI from making official diagnoses. The systems are instead designed to guide patients, suggest whether the severity of symptoms warrants a trip to the clinic, and help practitioners make more informed diagnoses.

Mental health

The doctor-patient relationship may never disappear. No matter how far AI advances in the future, it’s hard to imagine that the interpersonal aspects of healthcare will ever be delegated to machines. There’s something dystopian in the thought. A more settling scenario will see algorithms assume less glamorous, more systematic tasks, leaving practitioners in roles rich with intuition and interaction.

But Your.MD, Babylon Health, and even the American Psychiatric Association (APA) don’t think that handing the interpersonal side of care to machines is so farfetched or so dystopian.

In reviewing the symptoms and conditions most often reported by its users, Your.MD found people share some remarkably personal information with its AI. “The most common things we hear from users are about STIs and mental health,” Berlucchi said. “People disclose their personal health much more openly to a bot.” A number of studies support this claim.

Babylon Health also sees mental health as an area that may benefit from AI. Parsa discussed the possibility of an AI that can check in on users if it notices suggestive behavior patterns, like spending an unusual amount of time on the phone and in the house. Although the feature won’t appear in the company’s next update, Parsa envisions that these subtle interventions could one day assist in cases of depression and even help prevent suicides.

Therapeutic chatbots go back over 50 years to ELIZA, a primitive program that was intended to parody the shallowness of conversations between humans and machines, but which users found to be surprisingly therapeutic. Today, much more sophisticated systems exist, such as NeuroLex and the Chinese app Xiao Ice.

The Microsoft-built Xiao Ice engages millions of smartphone users every hour and uses deep learning algorithms to scour the Internet for human-written texts and match these with user inputs to deliver natural sounding — if not completely personalized — communication.

Inspired by a recent study that predicted psychosis with 100 percent accuracy, the engineers behind NeuroLex built their system to screen for schizophrenia by analyzing patients’ speech patterns. NeuroLex’s creator, Jim Schwoebel, was recognized by the APA for the success of his system.

“The use of machines in place of humans brings up several practical, ethical, and legal issues,” University of Washington School of Medicine Professor David D. Luxton told Digital Trends. “There are also potential safety issues. For example, there needs to be a procedure to address situations when a person indicates intent to harm themselves or others while they are interacting with a chatbot. This raises the legal question of who would be liable in such a scenario?”

Luxton has additional concerns about bots’ ability to provide consistently high-quality information. “Will the information be based on current best-practices in a particular domain of care?” Luxton asked. “How these systems should be regulated is an important question that will need to be addressed in the years ahead.”

Still, Luxton supports the use of chatbots in mental health for their constant accessibility, ability to address gaps in patient care, and potential economic value. “Chatbots … may help to save health care costs when used in place of a human, such as a preliminary step of helping to assess a condition and providing self-care recommendations,” he said. “These systems also have the potential to learn the patterns and preferences of individual care seekers, thus, they can adapt to the individual’s personal needs.”

Whether AI will ever stand in for GPs, therapists, or specialists is yet to be determined. AI systems are certainly prolific in other industries. But they aren’t yet sophisticated enough for medicine, and we, as a society, have to tackle the many questions that accompany new technologies in such an intimate field. For now, though, the trend is toward AI in the role of assistant. Only time will tell how well patients respond to the buzz of their smartphone and the sensation of always having an artificial doctor in their pocket.