Though Mori’s uncanny valley is concerned with a replica’s physical form, new research suggests that there’s also a valley to be overcome when it comes to a bot’s intellect.

In a paper published this month in the journal Cognition, researchers Jan-Philipp Stein and Peter Ohler present what they’ve termed the “uncanny valley of the mind,” in which participants reported feelings of unease when faced with relatively intelligent avatars.

In their study, Stein and Ohler asked 92 participants to wear a virtual reality headset and watch the same scene of avatars making small talk. Each participant, however, was given one of four different explanations of what they were seeing. One group was told the avatars were human-controlled and that the conversation was scripted. Another group was told the avatars were human-controlled and that the conversation was created by an artificial intelligence. A third group was told the avatars were computer-controlled and that the conversation was scripted. The final group was told the avatars were computer-controlled and that the conversation was created by AI.

The last group reported finding the scene eerier than the other participants did. Even though the characters and the scene were the same, the thought that a natural-sounding dialogue was created by a computer seemed to creep people out.

“You would think that after the friendly and chatty dialogue, there couldn’t be such a strong sense of unease towards these virtual entities, and yet some of our students reported strong discomfort as the characters moved closer to them,” Stein told Digital Trends.

Stein suggested that the reason for such unease could come from the participants feeling challenged about what makes us unique as humans.

“You could call it ethical taboo or an injury to narcissism,” he said. “People regard some concepts as distinctively human…and dislike it when other beings conquer these domains. It makes them feel that they’ve lost part of their superiority, and I believe that this basically means a loss of control. ‘What if the machine doesn’t feel like obeying me?’

“Something that we regard as intrinsically human is taken away,” he added, “and at the same time, we might have to worry about the immediate consequences to our safety. Hard not to feel a little tingly about that.”

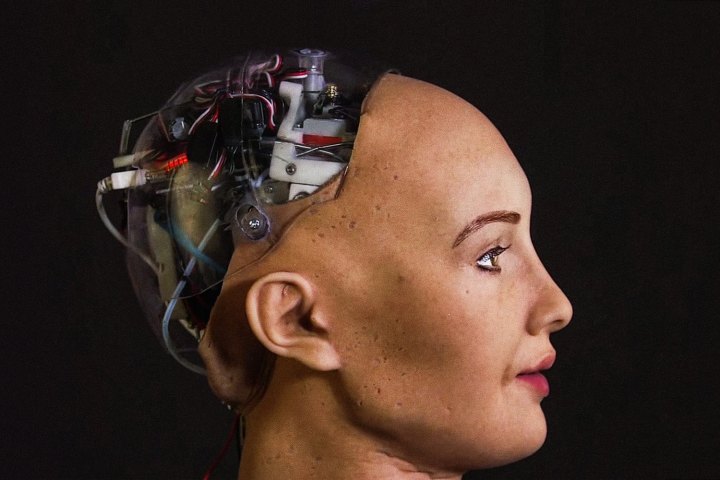

To avoid the classical (physical) uncanny valley, roboticists sometimes develop replicas that are obviously not human, such as by making them look overly cute or cartoonish, or by revealing their inner wires and mechanics. Stein suggested developers could likewise design emotionally aware technology to seem distinctively robotic.