Touch Bar is powered by WatchOS

Naturally, Apple isn’t going to come forth to reveal all of its secrets. However, Irish developer Steven Troughton-Smith took a close look at the hardware and software and concluded that the Touch Bar is essentially one huge Apple Watch monitored by Apple’s new T1 security chip. This chip is supposedly a variant of the S1 system-on-chip (SoC) processor used in Apple’s wearables.

In a nutshell, MacOS sends information about the current frame stored in memory (before it’s flashed on the screen) to the Touch Bar, which relies on a special version of WatchOS. This data is sent over an internal USB connection, and WatchOS relays multitouch input events back to MacOS, which applies them to the stored screen state before it’s displayed.

At one point, rumors suggested that the T1 chip would control the Touch Bar for power reasons outside the security features. Troughton-Smith believes that this is still possible, allowing the strip to remain functional when the processor and MacOS are in sleep mode, possibly even turned off. Overall, the chip is used to manage the security of input devices like Touch ID, the camera, the Touch Bar, and so on.

“From the file system, even though it’s a variant of WatchOS, it seems to be called e[mbedded]OS. Or just ‘eos’, sans marketing capitalization,” he writes. “The ‘Bridge’ code name seems to make a lot of sense, considering it’s bridging between the iOS and MacOS worlds in one product.”

The variant of WatchOS used in the Touch Bar supposedly can’t run stand-alone WatchOS apps. The Touch Bar itself is backed by a 25MB “ramdisk,” a virtual hard drive stored in memory for booting up the OLED strip and controlling its hardware. The Touch Bar will also only be capable of handling one active program at a time, so programs running in the background will be unable to take control of the bar.

The long and skinny on resolution

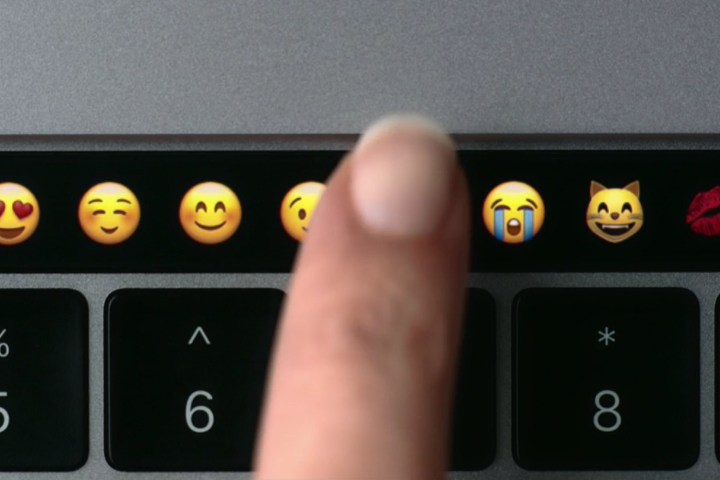

As for the actual hardware specs of the Touch Bar, it supports 10-point touch input. The bar replaces the typical row of function keys on the keyboard, so its depth from front to back is roughly the same as that row. The OLED strip measures 2,170 pixels wide and 60 pixels high. It’s a high-resolution Retina screen, so with UI scaling, that works out to a perceived resolution of 1,085 x 30. This does not include the Touch ID sensor, which is technically part of the Touch Bar device.

To break the three regions down physically, the System Button has a dedicated 128 pixel area, the App Region has a dedicated area of 1,370 pixels, and the Control Strip has a maximum collapsed area of 608 pixels. These three areas are divided by two gaps measuring 32 pixels each. Apple goes even further with the app control design, stating that the default width between controls should be 16 pixels, the small fixed space should be 32 pixels, and the large fixed space should be 64 pixels.
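
As a quick sanity check, the region widths quoted above can be added up. The short Python sketch below is illustrative only: the dictionary keys and the `total_width` helper are names made up for this example, not part of any Apple API, and it assumes the three regions sit side by side with one 32-pixel gap between each adjacent pair.

```python
# Touch Bar region widths in pixels, as quoted in the article.
# The key names are hypothetical labels chosen for this example.
REGIONS = {
    "system_button": 128,
    "app_region": 1370,
    "control_strip_collapsed": 608,
}
GAP_PX = 32      # width of each gap between adjacent regions
TOTAL_PX = 2170  # full width of the OLED strip in pixels
SCALE = 2        # Retina @2x: two pixels per point


def total_width(regions, gap_px):
    """Sum the region widths plus one gap between each adjacent pair."""
    gaps = max(len(regions) - 1, 0)
    return sum(regions.values()) + gaps * gap_px


width_px = total_width(REGIONS, GAP_PX)
width_pt = width_px // SCALE
print(width_px, width_pt)
```

With the figures above, the three regions and two gaps add up to 2,170 pixels, or 1,085 points at @2x, which matches the strip’s stated width.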

For instance, the definition of a “fluid” layout in the App Region would be buttons measuring 144 pixels wide separated by gaps measuring 31 pixels wide. Or, in the case of document editing, a list for possible words would appear in a field measuring 890 pixels wide, a button measuring 144 pixels wide next to it, and two additional controls measuring 151 pixels wide.
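
To see how those button and gap sizes relate to the App Region, here is a rough Python sketch. It assumes, purely for illustration, that a fluid layout is a single row of equal-width buttons with a gap between each pair and nothing else in the region; `max_buttons` and `row_width` are hypothetical helpers, not Apple functions.

```python
APP_REGION_PX = 1370  # App Region width quoted in the article
BUTTON_PX = 144       # width of one button
GAP_PX = 31           # gap between adjacent buttons


def max_buttons(region_px, button_px, gap_px):
    """Largest n such that n buttons and n - 1 gaps fit in the region."""
    if button_px > region_px:
        return 0
    return (region_px + gap_px) // (button_px + gap_px)


def row_width(count, button_px, gap_px):
    """Total width of `count` buttons separated by `count - 1` gaps."""
    if count == 0:
        return 0
    return count * button_px + (count - 1) * gap_px


count = max_buttons(APP_REGION_PX, BUTTON_PX, GAP_PX)
print(count, row_width(count, BUTTON_PX, GAP_PX))
```

Under that assumption, eight buttons fit and occupy 1,369 of the 1,370 available pixels.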

“In a @2x graphic, one point equals two pixels. For example, an icon that’s 36px by 36px translates to 18pt by 18pt,” Apple explains to developers. “Append a suffix of @2x to your image names, and insert them into @2x fields in the asset catalog of your Xcode project.”
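
The point-to-pixel rule Apple describes is simple enough to sketch in a few lines of Python. This is only an illustration of the arithmetic and the naming convention: the function names and the `bold.png` file name are invented for the example, and the sketch does not touch Xcode or an asset catalog.

```python
import os

SCALE = 2  # @2x: one point equals two pixels


def px_to_pt(px, scale=SCALE):
    """Convert a pixel measurement to points."""
    return px / scale


def pt_to_px(pt, scale=SCALE):
    """Convert a point measurement to pixels."""
    return pt * scale


def retina_name(filename, scale=SCALE):
    """Insert the @2x suffix before the file extension (hypothetical helper)."""
    stem, ext = os.path.splitext(filename)
    return f"{stem}@{scale}x{ext}"


print(px_to_pt(36))             # edge of a 36px icon, in points
print(retina_name("bold.png"))  # asset name with the @2x suffix
```

Here a 36-pixel icon edge comes out as 18 points, as in Apple’s example, and `bold.png` becomes `bold@2x.png`.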

Some of the standard inputs and displays offered by the Touch Bar include buttons to initiate app-specific actions, toggles such as turning on underlining while creating a document, candidate lists that show suggested words, character pickers, color pickers, labels that describe a control (“add location” etc.), and popovers. A popover appears as a stacked icon in its collapsed state, but when touched, it expands across the entire App Region to the right. Customers will also see sliders, scrubbers (like the ability to slide through open Safari tabs), segmented controls, and sharing service pickers.

Does Touch Bar pave the way for more iOS features and apps in MacOS?

Again, there are indications that the T1 chip and Touch Bar are tied together outside the security envelope. As previously mentioned, Troughton-Smith theorizes that the Touch Bar could remain active when the processor and MacOS are in a low-power state, perhaps even turned off. This could lead to iOS features and apps on MacBook Pros in the future.

“Perhaps someday it could run a higher class processor, like Apple’s A-series chips, and allow MacOS to ‘run’ iOS apps and Extensions, like iMessage apps, or manage notifications, system tasks, networking, during sleep, without having to power up the x86 CPU,” he speculates.

However, he also points out the link between the T1 chip and the Touch ID sensor. The two are paired in much the same way that Touch ID is linked to the security chip in Apple’s latest iPhones: if the sensor fails, both the sensor and the security chip need to be replaced. And based on Apple’s Touch Bar guidelines, the Touch ID sensor appears to be physically part of the OLED device, which suggests the whole section above the keyboard would need to be replaced in that case.

One confirmation backing speculation that the Touch Bar can run without MacOS loaded was provided by Apple software engineering chief Craig Federighi. He acknowledged that the OLED strip provides visual function keys when running Microsoft Windows on a Mac. Apple provides what it calls Boot Camp, which allows users to install Microsoft Windows and boot either into MacOS or Microsoft’s platform on a Mac device.

Apple sets the Touch Bar rules

Apple now provides developers with an application programming interface (API) and guidelines for developing Touch Bar support in their apps and programs. According to Apple, the Touch Bar is an input device, and not a secondary screen even though, technically, it is a screen.

“The user may glance at the Touch Bar to locate or use a control, but their primary focus is the main screen,” Apple states. “The Touch Bar shouldn’t display alerts, messages, scrolling content, static content, or anything else that commands the user’s attention or distracts from their work on the main screen.”

Some of the rules Apple is enforcing include concluding tasks on the Touch Bar that begin on the OLED strip, not using the Touch Bar to replace well-known keyboard shortcuts, not mirroring Touch Bar interactions on the main screen, providing controls that produce immediate results, and more.
