One problem that researchers at Stanford University have been trying to fix is the strain users can feel when switching focus from objects close to the player to those farther away. Both are displayed at the same physical distance from the eye, even though one sits much farther afield in the digital world, and that mismatch can be uncomfortable.

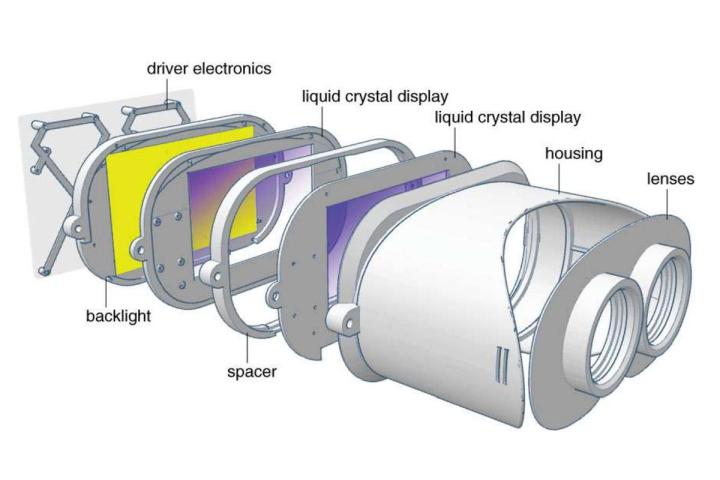

Researchers now believe they have a viable solution, though it could be a costly one: layering LCD panels one on top of the other, with a space in between, much like cartoonists did in days of old with multiplane camera rigs.

The front panel in their prototype is translucent and renders nearby objects, while the rear display, a conventional opaque panel, handles objects at a distance. As Ars Technica points out, this creates subtle but necessary visual cues that let your eyes automatically focus on each panel differently, thereby creating real depth that is augmented by the artificial, virtual distance.

This in turn can also produce real-world depth-of-field blurring of whichever objects the player isn’t looking at, background or foreground, since there is a real physical distance between the two panels.

While this does mean that eye tracking would be a less useful feature, adding another layer of displays when consumer headsets are already incorporating two could make a VR headset using this sort of setup much more expensive and heavier, and it would require even more computational power to run effectively.

Still, it’s a novel solution.
