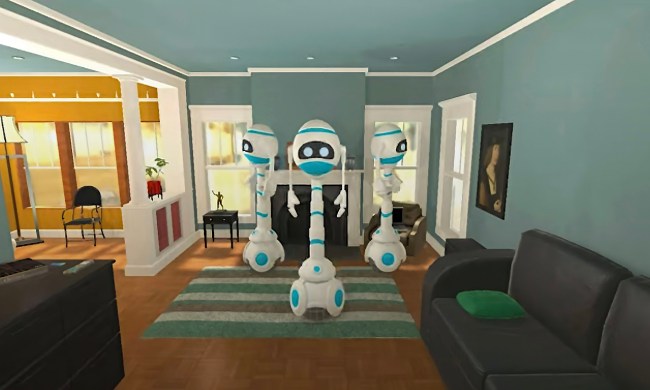

Picture a tray. On the tray is an assortment of shapes: Some cubes, others spheres. The shapes are made from a variety of different materials and represent an assortment of sizes. In total there are, perhaps, eight objects. My question: “Looking at the objects, are there an equal number of large things and metal spheres?”

It’s not a trick question. The fact that it sounds like one is proof positive of just how simple it actually is. It’s the kind of question that a preschooler could most likely answer with ease. But it’s next to impossible for today’s state-of-the-art neural networks. This needs to change. And the change needs to come from reinventing artificial intelligence as we know it.

That’s not my opinion; it’s the opinion of David Cox, director of the MIT-IBM Watson A.I. Lab in Cambridge, MA. In a previous life, Cox was a professor at Harvard University, where his team used insights from neuroscience to help build better brain-inspired machine learning systems. In his current role at IBM, he oversees a unique partnership between MIT and IBM that is advancing A.I. research, including IBM’s Watson A.I. platform. Watson, for those who don’t know, was the A.I. that famously defeated two of the top game show players in history at the TV quiz show Jeopardy! Watson also happens to be a primarily machine-learning system, trained using masses of data as opposed to human-derived rules.

So when Cox says that the world needs to rethink A.I. as it heads into a new decade, it sounds kind of strange. After all, the 2010s have been arguably the most successful decade in A.I. history: a period in which breakthroughs happen seemingly weekly, and with no frosty hint of an A.I. winter in sight. This is exactly why he thinks A.I. needs to change, however. And his suggestion for that change, a currently obscure term called “neuro-symbolic A.I.,” could well become one of those phrases we’re intimately acquainted with by the time the 2020s come to an end.

The rise and fall of symbolic A.I.

Neuro-symbolic A.I. is not, strictly speaking, a totally new way of doing A.I. It’s a combination of two existing approaches to building thinking machines, ones that were once pitted against each other as mortal enemies.

The “symbolic” part of the name refers to the first mainstream approach to creating artificial intelligence. From the 1950s through the 1980s, symbolic A.I. ruled supreme. To a symbolic A.I. researcher, intelligence is based on humans’ ability to understand the world around them by forming internal symbolic representations. They then create rules for dealing with these concepts, and these rules can be formalized in a way that captures everyday knowledge.

If the brain is analogous to a computer, this means that every situation we encounter relies on us running an internal computer program which explains, step by step, how to carry out an operation, based entirely on logic. Provided that this is the case, symbolic A.I. researchers believe that those same rules about the organization of the world could be discovered and then codified, in the form of an algorithm, for a computer to carry out.

Symbolic A.I. resulted in some pretty impressive demonstrations. For example, in 1964 the computer scientist Bertram Raphael developed a system called SIR, standing for “Semantic Information Retrieval.” SIR was a computational reasoning system that was seemingly able to learn relationships between objects in a way that resembled real intelligence. If you were to tell it that, for instance, “John is a boy; a boy is a person; a person has two hands; a hand has five fingers,” then SIR would answer the question “How many fingers does John have?” with the correct number 10.
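To make the flavor of this kind of reasoning concrete, here is a minimal sketch in Python. It is not Raphael’s SIR, which was a far more general program; the dictionaries `IS_A` and `HAS_PART` and the function `count_parts` are hypothetical names invented for this illustration. The sketch stores the four facts from the example and answers the question by following “is a” links upward and multiplying the counts on “has” links.

```python
# A toy knowledge base holding the four facts from the example.
# Assumption: these structures and names are illustrative only,
# not a reconstruction of the original SIR program.
IS_A = {"John": "boy", "boy": "person"}           # "X is a Y"
HAS_PART = {                                      # "a Y has N Zs"
    "person": [("hand", 2)],
    "hand": [("finger", 5)],
}


def count_parts(entity, target):
    """Return how many `target` parts `entity` has, or None if unknown.

    Walks up the is-a chain from `entity`; at each level it checks the
    has-part facts, recursing into sub-parts and multiplying the counts.
    Assumes the facts contain no cycles.
    """
    current = entity
    while current is not None:
        for part, count in HAS_PART.get(current, []):
            if part == target:
                return count
            below = count_parts(part, target)
            if below is not None:
                return count * below
        current = IS_A.get(current)  # climb: John -> boy -> person
    return None


print(count_parts("John", "finger"))  # 10
```

Nothing here is learned from data: every fact was typed in by hand, and the answer follows from logic alone. That is both the appeal of the symbolic approach and, as the next section shows, its limitation.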

Computer systems based on symbolic A.I. hit the height of their powers (and their decline) in the 1980s. This was the decade of the so-called “expert system” which attempted to use rule-based systems to solve real-world problems, such as helping organic chemists identify unknown organic molecules or assisting doctors in recommending the right dose of antibiotics for infections.

The underlying concept of these expert systems was solid. But they had problems. The systems were expensive, required constant updating, and, worst of all, could actually become less accurate the more rules were incorporated.

The world of neural networks

The “neuro” part of neuro-symbolic A.I. refers to deep learning neural networks. Neural nets are the brain-inspired type of computation which has driven many of the A.I. breakthroughs seen over the past decade. A.I. that can drive cars? Neural nets. A.I. which can translate text into dozens of different languages? Neural nets. A.I. which helps the smart speaker in your home to understand your voice? Neural nets are the technology to thank.

Neural networks work differently to symbolic A.I. because they’re data-driven, rather than rule-based. To explain something to a symbolic A.I. system means explicitly providing it with every bit of information it needs to be able to make a correct identification. As an analogy, imagine sending someone to pick up your mom from the bus station, but having to describe her by providing a set of rules that would let your friend pick her out from the crowd. To train a neural network to do it, you simply show it thousands of pictures of the object in question. Once it gets smart enough, not only will it be able to recognize that object; it can make up its own similar objects that have never actually existed in the real world.

“For sure, deep learning has enabled amazing advances,” David Cox told Digital Trends. “At the same time, there are concerning cracks in the wall that are starting to show.”

One of these so-called cracks stems from exactly the thing that has made today’s neural networks so powerful: data. Just like a human, a neural network learns based on examples. But while a human might only need to see one or two training examples of an object to remember it correctly, an A.I. will require many, many more. Accuracy depends on having large amounts of annotated data with which it can learn each new task.

Burning traffic lights

That dependence on data makes neural networks less good at “black swan” problems. A black swan event, a term popularized by Nassim Nicholas Taleb, is a corner case that is statistically rare. “Many of our deep learning solutions today, as amazing as they are, are kind of 80-20 solutions,” Cox continued. “They’ll get 80% of cases right, but if those corner cases matter, they’ll tend to fall down. If you see an object that doesn’t normally belong [in a certain place], or an object at an orientation that’s slightly weird, even amazing systems will fall down.”

Before he joined IBM, Cox co-founded a company, Perceptive Automata, that developed software for self-driving cars. The team had a Slack channel in which they posted funny images they had stumbled across during the course of data collection. One of them, taken at an intersection, showed a traffic light on fire. “It’s one of those cases that you might never see in your lifetime,” Cox said. “I don’t know if Waymo and Tesla have images of traffic lights on fire in the datasets they use to train their neural networks, but I’m willing to bet … if they have any, they’ll only have a very few.”

It’s one thing for a corner case to be insignificant because it rarely happens and doesn’t matter all that much when it does. Getting a bad restaurant recommendation might not be ideal, but it’s probably not going to be enough to even ruin your day. So long as the previous 99 recommendations the system made are good, there’s no real cause for frustration. It’s quite another when the stakes are high. A self-driving car failing to respond properly at an intersection because of a burning traffic light or a horse-drawn carriage could do a lot more than ruin your day. It might be unlikely to happen, but if it does we want to know that the system is designed to be able to cope with it.

“If you have the ability to reason and extrapolate beyond what we’ve seen before, we can deal with these scenarios,” Cox explained. “We know that humans can do that. If I see a traffic light on fire, I can bring a lot of knowledge to bear. I know, for example, that the light is not going to tell me whether I should stop or go. I know I need to be careful because [drivers around me will be confused.] I know that drivers coming the other way may be behaving differently because their light might be working. I can reason a plan of action that will take me where I need to go. In those kinds of safety-critical, mission-critical settings, that’s somewhere I don’t think that deep learning is serving us perfectly well yet. That’s why we need additional solutions.”

Complementary ideas

The idea of neuro-symbolic A.I. is to bring together these approaches to combine both learning and logic. Neural networks will help make symbolic A.I. systems smarter by breaking the world into symbols, rather than relying on human programmers to do it for them. Meanwhile, symbolic A.I. algorithms will help incorporate common sense reasoning and domain knowledge into deep learning. The results could lead to significant advances in A.I. systems tackling complex tasks, relating to everything from self-driving cars to natural language processing. And all while requiring much less data for training.

“Neural networks and symbolic ideas are really wonderfully complementary to each other,” Cox said. “Because neural networks give you the answers for getting from the messiness of the real world to a symbolic representation of the world, finding all the correlations within images. Once you’ve got that symbolic representation, you can do some pretty magical things in terms of reasoning.”

For instance, in the shape example I started this article with, a neuro-symbolic system would use a neural network’s pattern recognition capabilities to identify objects. Then it would rely on symbolic A.I. to apply logic and semantic reasoning to uncover new relationships. Such systems have already been proven to work effectively.
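As a rough illustration of that division of labor, here is a minimal Python sketch. It assumes the neural network has already done its part and handed over a symbolic description of the tray; the `Thing` class, the contents of `tray`, and the `count` helper are all hypothetical, invented for this example rather than taken from any IBM or MIT system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thing:
    shape: str
    material: str
    size: str


# Assumption: this list stands in for the output of a perception network
# that has turned the pixels of the tray into symbols. The eight objects
# are made up for illustration.
tray = [
    Thing("cube", "metal", "large"),
    Thing("sphere", "metal", "small"),
    Thing("sphere", "rubber", "large"),
    Thing("cube", "rubber", "small"),
    Thing("sphere", "metal", "large"),
    Thing("cube", "metal", "small"),
    Thing("sphere", "rubber", "small"),
    Thing("sphere", "metal", "small"),
]


def count(scene, **attrs):
    """Count the objects in `scene` whose attributes match all of `attrs`."""
    return sum(
        all(getattr(thing, key) == value for key, value in attrs.items())
        for thing in scene
    )


# The symbolic half: the question becomes two counts and a comparison.
large_things = count(tray, size="large")
metal_spheres = count(tray, shape="sphere", material="metal")
print(large_things, metal_spheres, large_things == metal_spheres)  # 3 3 True
```

Once the scene is expressed in symbols, the question from the top of this article is a few lines of ordinary logic, and each step of the answer can be inspected. The hard part, getting from a photograph to that list, is the job the neural network is suited for.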

It’s not just corner cases where this would be useful, either. Increasingly, it is important that A.I. systems are explainable when required. A neural network can carry out certain tasks exceptionally well, but much of its inner reasoning is “black boxed,” rendered inscrutable to those who want to know how it made its decision. Again, this doesn’t matter so much if it’s a bot that recommends the wrong track on Spotify. But if you’ve been denied a bank loan or rejected for a job, or if someone has been injured in an incident involving an autonomous car, it had better be possible to explain why certain recommendations have been made. That’s where neuro-symbolic A.I. could come in.

A.I. research: the next generation

A few decades ago, the worlds of symbolic A.I. and neural networks were at odds with one another. The renowned figures who championed each approach not only believed that their own approach was right; they believed that this meant the other approach was wrong. They weren’t necessarily incorrect to do so. Competing to solve the same problems, and with limited funding to go around, both schools of A.I. appeared fundamentally opposed to each other. Today, it seems like the opposite could turn out to be true.

“It’s really fascinating to see the younger generation,” Cox said. “[Many of the people in my team are] relatively junior people: fresh, excited, fairly recently out of their Ph.Ds. They just don’t have any of that history. They just don’t care [about the two approaches being pitted against each other] — and not caring is really powerful because it opens you up and gets rid of those prejudices. They’re happy to explore intersections… They just want to do something cool with A.I.”

Should all go according to plan, all of us will benefit from the results.