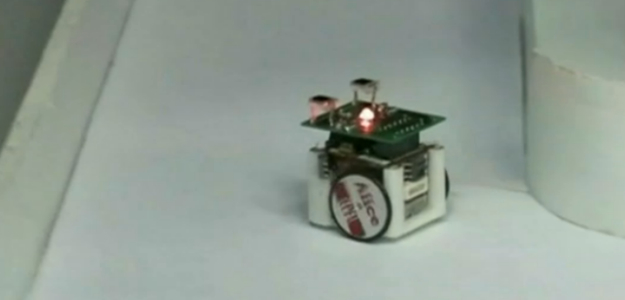

It may seem like one small step – literally – for robots, but a team of U.S. scientists claims to have created a robotic ant colony that may end up representing a giant leap for artificial intelligence. What makes the accomplishment impressive has nothing to do with the robots’ aesthetics; in fact, these robots look nothing like ants and resemble mobile boxes on wheels more than anything else. The magic is in their behavior, and in how the robots have learned to, well, learn from each other.

Simon Garnier of the New Jersey Institute of Technology’s Swarm Lab, which carried out the study, explains that “each individual robot is pretty dumb,” with only “very limited memory and limited processing power. By themselves, each robot would just move around randomly and get lost… but [they] are able to work together and communicate.”

The key to this cooperation is the trail each robot leaves behind as a cue for the robots that follow, just as ants do in a colony. Whereas the ants’ organic trail is made of pheromones, the robots’ trail is made of light: a camera tracks all of the robots’ movements, and at regular intervals along their routes a projector attached to the camera casts a beam of light, creating a virtual breadcrumb that the bots can sense. Each breadcrumb grows brighter with every successive pass of the camera and projector.
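To make the “virtual breadcrumb” idea concrete, here is a minimal sketch of a light-trail map. It assumes the arena is divided into grid cells and that each camera/projector pass adds a fixed amount of brightness to every cell where a robot was seen. The class name `LightTrail` and the `deposit` parameter are hypothetical; the article does not describe the lab’s actual software.

```python
from collections import defaultdict


class LightTrail:
    """Hypothetical virtual-pheromone map: grid cell -> accumulated brightness."""

    def __init__(self, deposit: float = 1.0):
        self.deposit = deposit  # assumed brightness added per pass
        self.brightness = defaultdict(float)

    def update(self, robot_positions):
        # One camera/projector pass: brighten every cell a robot was seen in,
        # so cells visited on many passes end up brighter.
        for cell in robot_positions:
            self.brightness[cell] += self.deposit

    def intensity(self, cell) -> float:
        # Unvisited cells are dark (0.0); .get avoids inserting new keys.
        return self.brightness.get(cell, 0.0)
```

For example, after `trail.update([(0, 0), (1, 0)])` followed by `trail.update([(1, 0)])`, cell `(1, 0)` has been lit twice and is twice as bright as `(0, 0)`.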

According to Garnier, each small cube-shaped robot has “two antennae on top, which are light sensors. If more light falls on their left sensor, they turn left, and if more light falls on the right sensor, they turn right. It’s exactly the same mechanism as ants.” Using this method, the robots are able to pool their “experiences” – that is, the collective journeys of each individual machine – and, Garnier explained, navigate more efficiently through what is called a positive feedback loop.
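Garnier’s two-sensor rule is simple enough to write down directly. The sketch below assumes each antenna reports a single brightness number; the function name `steer` and the choice to keep going straight when both readings are equal are illustrative assumptions, not details from the study.

```python
def steer(left_light: float, right_light: float) -> str:
    """Turn toward the brighter of the two light-sensing 'antennae'."""
    if left_light > right_light:
        return "left"
    if right_light > left_light:
        return "right"
    # Assumption: with equal readings the robot keeps its current heading.
    return "straight"
```

Because each robot reacts only to the light immediately above it, no robot needs a map or a memory of past trips; the shared light trail does that work.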

“If there are two possible paths from A to B and one is twice as long as [the other], at the beginning, the ants [or] robots start using each path equally,” he said. But because the robots on the shorter route finish each trip sooner than their counterparts on the longer one, they complete more journeys in the same time span, and more journeys mean more light “breadcrumbs” left behind. More breadcrumbs mean more light picked up by the robots’ antennae, so more robots respond by taking the shorter route.
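The two-path feedback loop can be illustrated with a toy simulation. This is a sketch under stated assumptions, not the Swarm Lab’s model: the short path takes 1 time step and the long path 2, every completed trip leaves one breadcrumb on the path used, and robots choose a path with probability proportional to `(k + light) ** 2`, a nonlinear choice rule of the kind used in classic ant double-bridge models, swapped in here because the article does not specify one. The function name `simulate` and the parameters `k`, `short_len` and `long_len` are hypothetical.

```python
import random


def simulate(n_robots=100, steps=100, short_len=1, long_len=2, k=5.0, seed=0):
    """Toy double-bridge model: returns total breadcrumbs laid on each path."""
    rng = random.Random(seed)
    lengths = {"short": short_len, "long": long_len}
    trail = {"short": 0.0, "long": 0.0}

    def choose():
        # Assumed nonlinear rule: the brighter path is disproportionately favored.
        w_short = (k + trail["short"]) ** 2
        w_long = (k + trail["long"]) ** 2
        return "short" if rng.random() < w_short / (w_short + w_long) else "long"

    # Each robot is [path, steps left on current trip]. With no light yet,
    # every robot picks either path with probability 0.5.
    robots = []
    for _ in range(n_robots):
        path = choose()
        robots.append([path, lengths[path]])

    for _ in range(steps):
        finished = []
        for robot in robots:
            robot[1] -= 1
            if robot[1] == 0:
                finished.append(robot)
        # Every completed trip leaves one breadcrumb on the path it used.
        for robot in finished:
            trail[robot[0]] += 1.0
        # Robots that just arrived pick their next path from the updated trail.
        for robot in finished:
            path = choose()
            robot[0], robot[1] = path, lengths[path]
    return trail
```

Robots on the short path finish their first trips a step earlier, so the short path lights up first; the nonlinear choice rule then amplifies that head start until most robots take the shorter route. Printing `simulate()["short"] / sum(simulate().values())` shows the share of breadcrumbs on the short path.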

Such simple cooperation is hardly the start of a robot uprising, but it is a significant step forward for artificial intelligence that learns from experience, and we all know where that can lead.