Game developer Supermassive Games earned heaps of praise for its interactive, cinematic horror adventure Until Dawn, and followed it up with this year’s “spiritual successor” to that 2015 hit, The Quarry. The game puts players in control of a group of camp counselors who find themselves besieged by a variety of deadly threats — both human and supernatural — after getting stranded at the remote campground where they spent their summer.

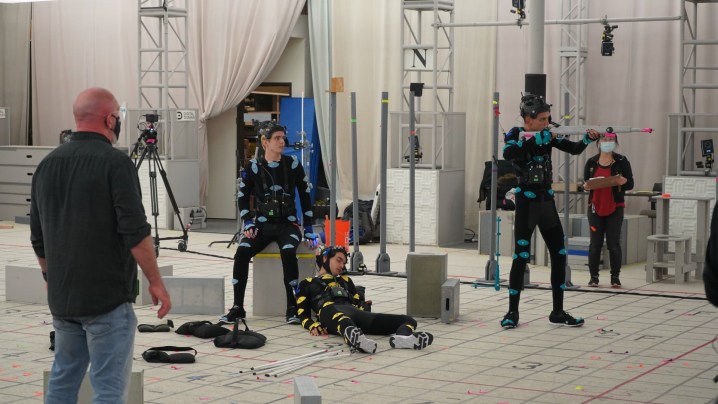

Like Until Dawn, The Quarry features a cast of familiar actors portraying the game’s characters, with a blend of cutting-edge, performance-capture systems and Hollywood-level visual effects technology allowing the cast to bring their in-game counterparts to life and put those characters’ fates in the hands of players. In order to bring the actors’ work on a performance-capture set into the game’s terrifying world, Supermassive recruited Oscar-winning visual effects studio Digital Domain, best known for its work in turning Josh Brolin into Thanos in Avengers: Infinity War.

Digital Trends previously spoke to Digital Domain’s team about the A.I.-driven Masquerade facial-tracking system they developed for Infinity War, which has received a significant upgrade since that 2018 film. It now returns to the spotlight in The Quarry, a game created using the studio’s improved Masquerade 2.0 system. Digital Domain’s Creative Director and VFX Supervisor on The Quarry, Aruna Inversin, and Senior Producer Paul “Pizza” Pianezza explained how a mix of groundbreaking technology, clever filmmaking techniques, and inspired performances from the game’s cast made Supermassive’s latest interactive adventure as exciting for its creative team as it is for players.

[Note: This interview will discuss plot points in The Quarry. Consider this a spoiler warning if you haven’t played the game yet.]

Digital Trends: Back in 2019, we discussed Masquerade, the facial-capture system Digital Domain developed for Thanos in Avengers: Infinity War. Your team created Masquerade 2.0 to do something similar with The Quarry, but with more characters and over a lot more screen time. What were some of the big steps along the way?

Aruna Inversin: Thanos was the most widely publicized product, because it’s Marvel, but a lot of our development [on facial-capture systems] started back in 2007 when we were working on The Curious Case of Benjamin Button. A lot of [our work on that film] was kind of figuring it all out. After that we worked on Tron: Legacy, so we’ve been pushing digital humans for feature films for a while. It really took a huge leap forward with Thanos and the Masquerade process, though.

What do Masquerade and Masquerade 2.0 allow you to do?

Inversin: It allows us to take any sort of head cam and then drive a digital character, a digital face, with it. For Thanos, it was interesting because we had a non-human delivery of the performance. And of course, there were animators that went in and tweaked it to make him Thanos. There’s still an underlying Josh Brolin performance, though. Since then, we’ve worked on efficiency on the real-time side: how to make Masquerade more real-time, more efficient, and more cost-effective. And in doing so, we came up with a couple of other products. Masquerade 2.0 is a combination of the head-tracking and all the other, ancillary developments that make it more efficient. There’s better automated eye tracking, more automated integration tracking of where the dots are on the face, more machine learning … It’s a tenfold increase in the amount of data we can deliver and interact with.

Thanos’ total on-screen time was 45 minutes. We’re now able to deliver 32 hours [of performance] across more characters for The Quarry.

What’s the general process like when you’re collecting data from actors on something like The Quarry?

Paul Pianezza: We captured 32 hours of footage, but to start out, we do training sets. We’ll take the actors and do some data scanning of them. And from that, we collect about 60 seconds of ROM (Range of Motion) data. Then we do a whole body scan. After that, we do more ROMs and capture more face shapes to establish a 1:1 likeness — how the face moves and all that kind of stuff. That’s our starting point. And then, when we go to shoot every day, we’ll do even more ROM captures as they perform, so the set we work from gets bigger and bigger over time.

It’s one thing to have all of that raw material, but what sort of challenges do you typically run into when trying to manipulate and edit it real-time for something interactive like a game?

Inversin: After we have all of the training sets, one of the challenges is that once you add an actor’s performance to the mix, they’re not just standing around and showing you how their face and body move. They’re running around, jumping, wrestling with werewolves, and doing all sorts of things. And in order to track that, we came up with new technologies that allow us to stabilize the head cam and capture motion unaffected by the head cam wobbling around, for example. Those cameras are pretty heavy, so we had to evolve the camera stabilization and tracking and the machine-learning system so that when a character runs, you see the face move with gravity as they run. That nuance in a real-time performance is something you don’t get if you capture an actor’s face separately from their body performance. We like to capture everything simultaneously so that the actors not only have that rapport with each other, but the motion in their faces corresponds to what they’re physically doing.

Pianezza: And the fun part is, when you’re capturing 32 hours of footage with these cameras, you find every possible way they can break.

Inversin: Right? Cables in front of the face, helmets flying off … Fortunately, we had a really transparent relationship with Supermassive. When we were on set and called out to Will [Byles, director of The Quarry] that a cable went in front of someone’s face or a head cam turned off or whatever, Will always had the foresight to know if it was something that needed to be reshot, or he’d say, “No problem. I’m going to cut around that.”

The subtle facial movements in the game are fascinating — like, when someone squints ever so slightly to suggest they’re skeptical, or moves their chin in a tiny way that suggests they’re nervous. How do you capture that level of subtlety and keep the characters out of the uncanny valley?

Pianezza: That’s one of those things we constantly talk about: the imperfections and the subtleties that make it real. Everything we captured was designed to do that. That’s why we have 32 hours, 4,500 shots’ worth of material. And when you get the system dialed in with all of that material, you get all of those subtleties in the actors’ performances.

Inversin: One of the really great selling points of the system we’ve developed is that it recognizes that the subtle movements — those eye twitches and other things you saw in the game — are really important. They come directly from the actor. There’s no additional animation on top of them. So actors know their performance will be transferred to that digital character with a great level of fidelity. And it’s only going to get better over time.

So how do you handle the death scenes in the game? Do they change the way you capture performances or translate them?

Inversin: Well, first, who did you kill when you played? Who died on your watch?

Two counselors died on my play-through, Abigail (Ariel Winter) and Kaitlyn (Brenda Song), which I’m actually pretty pleased with, given my typical, first-run death toll in games like this.

Inversin: If it was Abi’s death scene in the boathouse, that was one of my favorites. Will gave direction to the actors by saying, “Okay, you need to stand here, Ariel, and pretend to fall to your knees and die. And Evan [Evagora, who plays counselor Nick Furcillo in the game], you need to jump at her, but not jump all the way, because you’re going to be a werewolf at a certain point.” And so we played that out on set. Ariel screams, drops to her knees, and keels over. And then Nick jumps, and stops, and that was it for the take. Will was like, “Perfect take. We’re done.” And then they’re both like, “Wait, what’s going to happen in the scene?” But after Abi’s head comes off and the camera goes back to her keeling over, that was actual mo-cap photography of her keeling over and dying. The expression on her face at the end, too, was an expression she made on set in her final throes. That expression is totally her. They just kind of held that frame. That day was fun.

Pianezza: People can die so many different ways, too, but you might only be able to do two takes in a short period because otherwise they’ll lose their voice from all the screaming. It was like, “Okay, I have to die again, so let’s take a break now so I can scream more later.” You had to plan for a bunch of different deaths, spread out over filming.

Inversin: There was a great period with Justice [Smith, who plays counselor Ryan], during chapter seven or eight, when he’s at the Hackett house and getting chased around. That day was a little insane. It was like, “Okay, Justice, you’re going to die like six times today and each one is going to be horrible.”

Keeping track of all the permutations of story arcs in a game like this feels like it could get overwhelming.

Pianezza: It was all Will. It was really impressive. For example, Dylan [played by Miles Robbins] can lose his arm at one point, either by chainsaw or by shotgun.

I went with the shotgun.

Pianezza: Okay, so you know that scene. When they were filming different scenes later, Will would be like, “Okay, you have to do this with your left hand now because you might have lost your right arm at this point.” He keeps track of everything in his mind.

How did you acclimate actors who might not be familiar with performance-capture technology or interactive cinema games like this to the process?

Pianezza: Digital Domain has such a long history of doing this kind of stuff, so we could show them examples of what Brolin did for Thanos, for instance. We told them, “You don’t have to overact. You’re in good hands.” So much of what we do in new media is nontraditional, so we do a lot of education, for clients, actors, or whomever. And in this case, you basically relay to them that it’s just like theater. There aren’t close-up, wide, and medium shots, because it’s all virtual. We have all of those cameras going at the same time, essentially.

Inversin: A lot of the cast was really interested in the process, and being transparent with them and knowing what could come out of it was really good. Will was great in directing them, saying, “This is like a stage play. We can put the cameras anywhere.” That rapport and camaraderie between the actors, knowing they could get really close and talk and perform like in any stage play, was really important to capturing the characters. The prologue sequence with Ted Raimi, Siobhan Williams, and Skyler Gisondo was a great ensemble moment. The back-and-forth banter is so good, and that comes from getting those actors to perform like it’s a stage play.

How did they react when they saw the characters that came from their performances?

Pianezza: What’s really cool is, to put people at ease, we can attach their performance to their own body in the game in real-time, so if they move their arm, they see the character in the game moving their arm. Especially with actors who have never done this, there’s an aspect to that that’s very freeing, because you just need to give a great performance once. You don’t need to stop, relight the scene or reposition the camera, and do it all over again. You can just go, “Great performance! You’re done.” Because all we care about is the actor’s performance. We can figure out the cameras and lighting and such later.

Inversin: The ability to string takes together provides the freedom to shoot quickly and keep the actors and director and the team in the mindset of the performance, too. On a traditional set, you’re going back and forth, changing lights and cameras and so on, starting and stopping. Sometimes that takes people out of the moment. This allows everyone to really stay there.

Where do you see systems like Masquerade taking movies and gaming down the road? What sort of future for films and games is it creating?

Pianezza: I just want to see that interactive narrative space blow up. A lot of people on both sides — gaming and visual effects — have a love of both. And this blends them in such a great way. What’s really cool to me, though, is you create this stuff digitally, so the campgrounds at Hackett’s Quarry exist forever. If they want to revisit that world at some point, you can do that, because it’s digital. You don’t have to worry about getting a permit to shoot on-location again, or rebuild sets, or plan for night shoots, or whatever, because it’s all digital. Everything is there. Just go back and turn the computer back on.

Inversin: The opportunity for DLC, especially, is huge. The assets are all there, so as you go forward, you can do this kind of recurring thing, and with those same characters, you can amortize the cost over different episodes or chapters. You can have a horror series with each episode happening every couple of months, built around this band of characters that lives or dies and changes. So it’s easy to see the cinematic, interactive-narrative potential there, and it’s quite powerful. We’re just getting started.

Supermassive Games and 2K’s The Quarry is available now for Windows, PlayStation 4, PlayStation 5, Xbox One and Xbox Series X/S.
