Just last month, Bill Gates made headlines across the web when he stated that he is “concerned about super intelligence” as part of a Reddit AMA. Gates’ trepidation regarding the technology came as something of a surprise to many, but he’s far from the only silicon superstar calling for caution. Should we be more concerned than we are about this sort of technology?

Concern in the face of a “super intelligence”

Speaking to MIT students in 2014, Elon Musk described artificial intelligence as “our biggest existential threat.” Last month, the Tesla and SpaceX CEO contributed $10 million to the Future of Life Institute, a Boston-based volunteer organization that works to nip threats to our species’ survival in the bud. Artificial intelligence is one of many perceived threats that the institute has its sights set on. Musk sits on the group’s board of advisers alongside Stephen Hawking, who himself has been quoted saying AI could “spell the end of the human race.”

With Gates, Musk and Hawking assembled, it might seem like the backlash against artificial intelligence is about to begin. But, while the three have uttered attention-grabbing warnings on the far-flung future of AI, they’ve been referring to a level of intelligence that isn’t on the table just yet. Bill Gates followed his concerns about the technology with a caveat that having these machines take care of jobs for us “should be positive if we manage it well.”

Still, it seems that we have already set out on the road towards super intelligence. A “smart” phone or a “smart” television may seem like a small step forward, but it’s a clear indication of the direction that we’re headed. Systems are being built that let computers learn our usage habits and build on that knowledge to serve their purpose better. A host of different companies are putting this sort of technology to a broad range of uses, but few projects wear their status as an artificial intelligence quite as proudly as Microsoft’s Cortana.

Enter Cortana

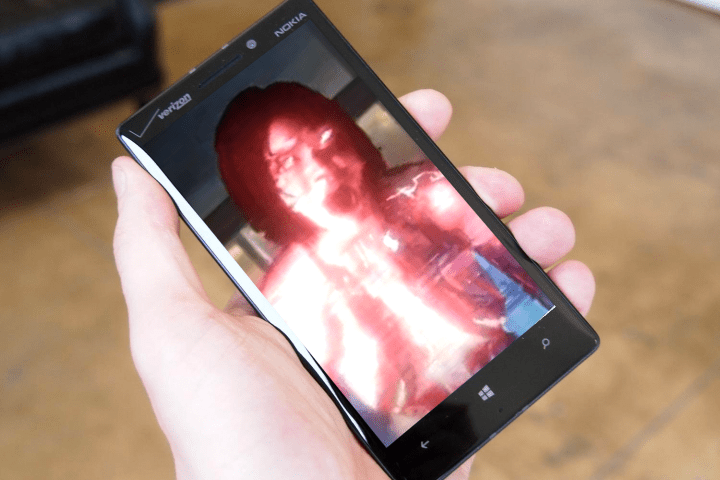

Debuting last year as a competitor to services like Siri and Google Now, Cortana has been endlessly described as a “virtual assistant” by its creators. Microsoft also claims the software “continually learns about its user” and that its designers hope it will eventually be able to interact with users in an “anticipatory” manner. All in all, it sounds like it could be the first piece of artificial intelligence that really divides mainstream opinion. It’s easy to see why these features could be very useful, but we can also imagine users shying away from such familiarity with their “virtual assistant.”

Building on the sense of personality that gave Siri a novelty factor with users, Cortana is intended to be thought of as an entity rather than just a program. While Apple encouraged people to call Siri by her name, Microsoft is going one step further; a friendly “Hey, Cortana” is the default method of calling the application’s attention. Alongside the many lines of humorous banter that have been recorded as responses for stock questions, it’s clear that Cortana is meant to be an intelligence that charms users as it learns about them.

While seeing this product come from the company that the concerned Bill Gates once co-founded might seem like a contradiction in itself, you only have to look at the name Cortana to have other alarm bells begin to sound. Cortana first appeared as an artificial intelligence in the Halo video games, and the same voice actress that played the role in those games has been tapped to voice the personal assistant. However, the way that the character has been portrayed in Halo hasn’t always made her out to be the sort of technology you would want in your pocket.

Could Cortana go “Rampant”?

The Halo mythos cribs a major concept known as “rampancy” from its creators’ earlier series, Marathon. The game’s idea of artificial intelligence is remarkably similar to the idea of a virtual assistant: AIs take on their own personalities, and grow more intelligent by collecting data and information from the world around them. However, that knowledge gradually fills up the available resources of the construct, and over time the AI will become “rampant.” An AI in a state of rampancy thinks of humans as its inferiors, developing a delusional sense of its own power and intellect. This fate is inevitable for the video game version of Cortana, but hopefully the real-world version won’t meet the same end.

While Halo sees AI constructs relied upon for interstellar war as opposed to making lunch appointments, fans of the series might find it strange to see a character from the series being used as the basis for a real-world AI product. Within the video games, it’s made clear time and again that this sort of technology needs to be handled with care. Of course, that sort of authorial intent is soon thrown out of the window when a character like Cortana can be re-used for the purposes of brand awareness.

Admittedly, the stakes aren’t as high. If you keep a Windows Phone with Cortana on it for seven years, it’ll be out of date, but not rampant. However, it will have learned all the behaviors that you exposed it to over the course of that time: who you contact on a regular basis, where you’re likely to eat lunch on a Thursday, what your regular schedule is like. We’ve seen enough hacks and leaks in recent months to demonstrate just how careful we need to be with our data, but the conversational approach of this technology might lead users to let their guard down. Talking to a virtual assistant doesn’t feel like giving information away because it’s designed to be a casual experience, but it’s still collecting data like any other computer.

The academic perspective

Reality may not be as fantastic as the sci-fi idea of a mad computer gaining sentience and enslaving the human population, but some caution is still needed. We need to ask the same questions of AI that we should be asking of any piece of computer software. That’s the stance taken by Thomas G. Dietterich, a professor at Oregon State University and the president of the Association for the Advancement of Artificial Intelligence.

Dietterich lists bugs, cyber-attacks and user interface issues as the three biggest risks of artificial intelligence — or any other software, for that matter. “Before we put computers in control of high-stakes decisions,” he says, “our software systems must be carefully validated to ensure that these problems do not arise.” It’s a matter of steady, stable progress with great attention to detail, rather than the “apocalyptic doomsday scenarios” that can so easily capture the imagination when discussing AI. “In science fiction, the computer inevitably discovers some fundamental new physics or some magical new algorithm,” Dietterich says. “This is incredibly farfetched, but notice that this is another example of placing a computer system in a position where it has life-and-death control over people because of the vast resources at its disposal.”

According to Prof. Dietterich, it’s unlikely that a computer system could vastly improve its own intelligence. Cortana’s rampancy, in other words, will almost certainly remain a fictional concept. Similarly, the development of AI in general isn’t the runaway train that it can perhaps be presented as. He describes progress in the field as “slow and steady,” with the attention-grabbing breakthroughs that we’re starting to see hit the press now being the result of decades of work behind the scenes. “We hardly have any systems that can act on their own, even for brief periods of time,” he says. “Who knows what research and engineering issues will arise as we start to integrate the disparate parts of AI research.”

However, this isn’t naysaying on the part of Dietterich; quite the opposite. He maintains that artificial intelligence is already doing great work across a broad swathe of use cases, and will only continue to do so provided we take a measured, considered approach to the technology. “The benefits far outweigh the risks, particularly because there is no tradeoff,” he says. “We can obtain the benefits while avoiding the risks.”

As is often the case, the real issue here isn’t that technologies are being developed that can immediately threaten our existence; it’s that the way we implement those technologies can make them either a great assistance or an imminent danger. A true “super intelligence” is still decades away, but the first wave of “consumer AI” carries with it its own risks. They’re not risks that will spell the immediate fall of society, but interface and privacy issues that could be catastrophic for an individual too reliant on a virtual assistant. For the time being, at least, we should probably be less concerned with the “what-if” doomsday scenario of artificial intelligence and more concerned with how today’s basic learning machines interact with their human users.
