I just reviewed AMD’s new Radeon RX 6600, which is a budget GPU that squarely targets 1080p gamers. It’s a decent option, especially in a time when GPU prices are through the roof, but it exposed a trend that I’ve seen brewing over the past few graphics card launches. Nvidia’s Deep Learning Super Sampling (DLSS) tech is too good to ignore, no matter how powerful the competition is from AMD.

In a time when resolutions and refresh rates continue to climb, and demanding features like ray tracing are becoming the norm, upscaling is essential to run the latest games in their full glory. AMD offers an alternative to DLSS in the form of FidelityFX Super Resolution (FSR). But FSR isn’t a reason to buy an AMD graphics card, while DLSS is a reason to buy an Nvidia one, even if it shouldn’t be.

Nvidia’s walled garden

Nvidia only offers DLSS on its last two generations of graphics cards, specifically the RTX 30-series and 20-series. Walling off features like this isn’t new for Nvidia. For years, it restricted its G-Sync variable refresh rate technology to monitors that included a dedicated (and costly) proprietary module, instead of adopting FreeSync, AMD’s royalty-free alternative built on the open VESA Adaptive-Sync standard.

Similarly, many machine learning applications are built to run on Nvidia’s CUDA GPU computing platform, not the OpenCL platform that AMD cards use. Developers have worked around the problem in software libraries like TensorFlow, but a pattern remains in these libraries: CUDA gets first priority.

That leaves us with DLSS, which is also restricted to Nvidia hardware. There’s a good reason why: DLSS uses an A.I. model that can only run on the Tensor cores in recent Nvidia graphics cards. Right now, AMD cards don’t have these dedicated A.I. accelerators, but even if they did, it’s hard to imagine Nvidia supporting them.

In fairness to Nvidia, the company has taken steps to break down its proverbial walls. For example, G-Sync now works with a range of FreeSync monitors that don’t include a dedicated module. The important thing to know is that Nvidia has traditionally developed new features with only its hardware in mind, while AMD usually takes an open-source approach.

That’s true for DLSS and FSR, too. What sets DLSS apart from Nvidia’s other walled-off features is that it’s significantly better than the open alternative, FSR.

Performance parity, and why DLSS is too good to ignore

The AMD RX 6600 is the same price as the Nvidia RTX 3060, and across the wide range of games you could play on them, you can expect similar performance overall. Performance parity with Nvidia has long been the goal for AMD, and its most recent RX 6000 graphics cards mostly hit that mark. That’s good news in a time when GPUs are so hard to find — you have two options instead of one.

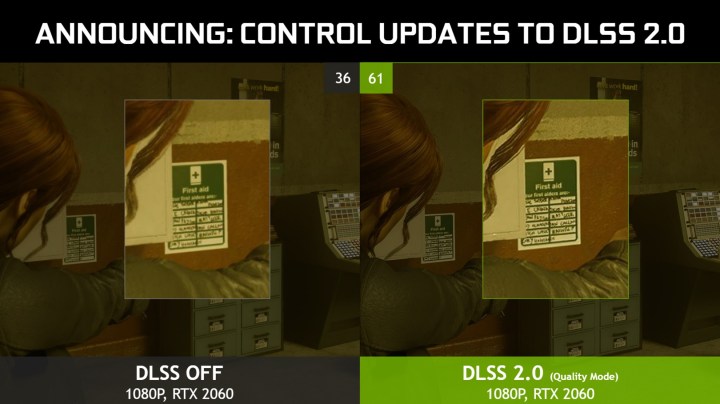

The massive asterisk is DLSS. When AMD announced FSR, it looked like an open-source competitor to DLSS that could run on AMD and Nvidia cards alike. In reality, it’s an upscaling tool based on dated tech that manages to increase frame rates, but at a significant cost to image quality.

DLSS doesn’t have that problem. Both DLSS and FSR pursue the same goal: upscaling a low-resolution image to a high-resolution one by filling in the missing pixels. The difference is that FSR uses a fixed algorithm followed by a sharpening filter, while DLSS uses an A.I. model that’s been trained on what the final image should look like. Basically, DLSS has a lot more information to work with, and Nvidia graphics cards have the A.I. accelerators to take advantage of it.
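To make that difference concrete, here is a minimal sketch of what a purely spatial upscaler does: stretch the low-resolution frame to the target size, then run a sharpening pass over the result. This is not AMD’s actual FSR code. The bilinear interpolation, the unsharp-mask sharpening, and every function name and parameter below are assumptions chosen for illustration, and the "frame" is just a tiny grayscale grid with values between 0 and 1.

```python
def upscale_bilinear(img, factor):
    """Upscale a grayscale image (list of rows of floats) by an integer factor."""
    src_h, src_w = len(img), len(img[0])
    out = []
    for y in range(src_h * factor):
        # Map the output pixel centre back into source coordinates (clamped).
        sy = min(max((y + 0.5) / factor - 0.5, 0.0), src_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, src_h - 1)
        fy = sy - y0
        row = []
        for x in range(src_w * factor):
            sx = min(max((x + 0.5) / factor - 0.5, 0.0), src_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, src_w - 1)
            fx = sx - x0
            # Blend the four nearest source pixels: the "missing" pixels
            # are guesses derived only from the current frame.
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


def sharpen(img, amount=0.5):
    """Unsharp mask: push each pixel away from the mean of its 4 neighbours."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            neighbours = (
                img[max(y - 1, 0)][x] + img[min(y + 1, h - 1)][x]
                + img[y][max(x - 1, 0)] + img[y][min(x + 1, w - 1)]
            ) / 4.0
            value = img[y][x] + amount * (img[y][x] - neighbours)
            row.append(min(max(value, 0.0), 1.0))  # keep values in [0, 1]
        out.append(row)
    return out


def spatial_upscale(img, factor=2, amount=0.5):
    """Hypothetical two-pass spatial upscaler: interpolate, then sharpen."""
    return sharpen(upscale_bilinear(img, factor), amount)


low_res = [[0.0, 1.0],
           [1.0, 0.0]]
high_res = spatial_upscale(low_res, factor=2)
for row in high_res:
    print(" ".join(f"{v:.2f}" for v in row))
```

Everything here is fixed math applied to a single frame, which is why an approach like this can run on any GPU. As described above, DLSS swaps that fixed math for a trained model, and that is where the dedicated A.I. hardware comes in.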

Making FSR open source was an inclusive move for AMD, but it was also a compromise. DLSS is a reason to buy an Nvidia graphics card given its image quality, and even if AMD restricted FSR to its own platform, it wouldn’t be enough to compete with the feature set of Team Green. You can see that in the recent Back 4 Blood, where DLSS holds up much better than FSR (even if FSR offers higher frame rates overall).

To be clear, I’m not advocating for another walled garden — I don’t like the fact that Nvidia restricts DLSS to its platform, either, and as Intel’s XeSS supersampling feature shows, it’s possible to develop this tech in an inclusive way. The point is that Nvidia isn’t going to develop DLSS for other hardware, but AMD could have developed FSR to go toe-to-toe with DLSS while sticking with an open-source approach.

A day of reckoning on the horizon

With the best graphics cards available today, it’s hard to recommend AMD when Nvidia has DLSS on the table. FSR was supposed to change that, but the image quality falls apart too quickly. The RX 6600 shows that DLSS is the determining feature when all else is equal.

But it may not stay that way for long. Intel is set to release its Arc Alchemist cards soon, which include XeSS. It works like DLSS, but Intel is also offering a general-purpose version that can run on a variety of hardware. AMD could have jumped on that opportunity but didn’t. It looks like Intel is filling the gap.

In the future, I hope to see AMD, Nvidia, and Intel reach performance and feature parity. At least then we wouldn’t have one dominant graphics card maker resting on its laurels while the rest of the market tries to catch up. AMD has said it will continue working on FSR, and XeSS will be available early next year, so hopefully that shift is right around the corner.
