Under the guidance of Kassewitz, the Speakdolphin project focuses on recording and analyzing communication by dolphins, whales, and other cetaceans. The team uses cutting-edge digital recording gear to capture the complete audio spectrum of dolphin vocalizations and echolocation. These sounds are then analyzed in an effort to understand dolphin language. The overall goal of the project is to facilitate communication between humans and cetaceans.

Researchers as far back as Jacques Cousteau have believed that dolphins can “see” using sound by emitting high-frequency clicks that travel through the water and bounce off objects in the dolphin’s path. Echolocation has been studied for decades, but recent observations by Kassewitz showed that dolphins not involved in his experiments were able to identify objects from recorded dolphin sounds.

To understand how dolphins can see using sound, Kassewitz collaborated with English acoustics engineer John Stuart Reid, co-inventor of the cymascope. The cymascope is an instrument that visualizes sound by applying the principles of cymatics, in which sound vibrations produce patterns on a surface covered by a thin layer of particles, paste, or liquid. Kassewitz and Reid experimented with the dolphin sounds on the cymascope and produced startling results.

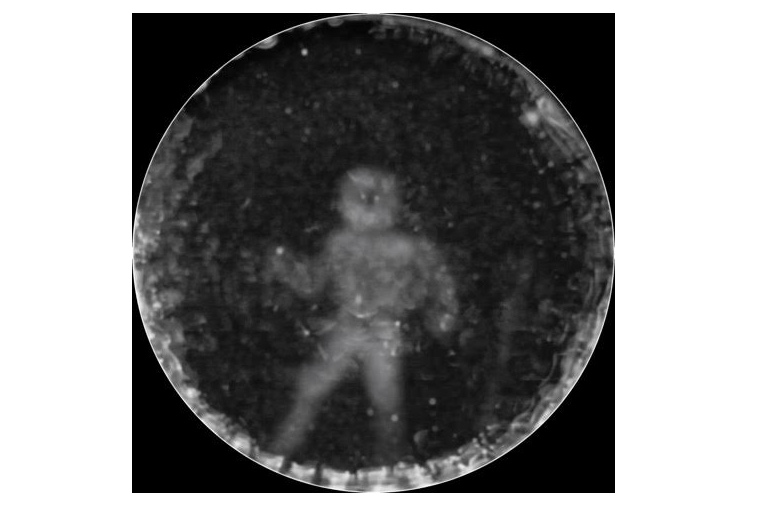

Kassewitz began by recording the echolocation sounds that a dolphin makes when it is exposed to different objects, including a cube, a cross, a rubber duck, and a human being. When Reid ran these sound files through the cymascope, the audio sequences produced different patterns and shapes that corresponded to the objects the dolphin saw. The result that caught Kassewitz’s attention was the one in which the dolphin saw the human. This particular sound clip generated a ghost-like image of a human being. Kassewitz then consulted with 3D Systems, a leader in 3D digital design and fabrication, to convert the 2D cymascope image data into a 3D-printable file.

Kassewitz and his team are in the process of writing up their findings and plan to submit them for publication in a major journal in 2016. If the paper is accepted, they will present their data at the XXXIV International Meeting for Marine Mammalogy. Though intriguing, these results will be subject to significant scrutiny, as many within the scientific community consider the discipline of cymatics to be a pseudoscience.