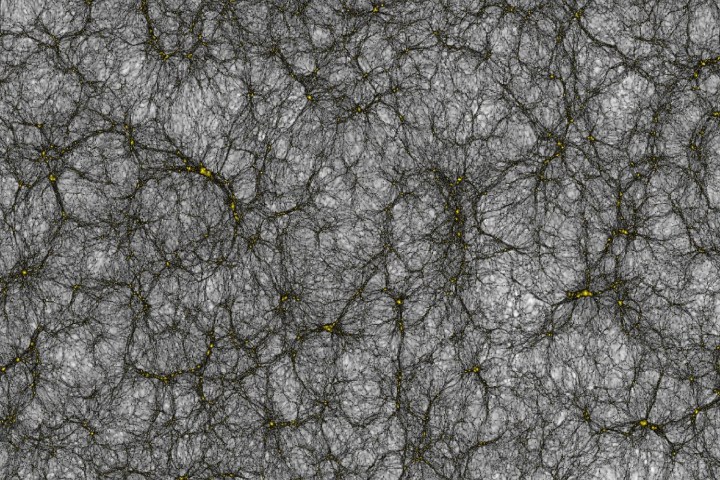

In fact, this hairy-looking Rorschach test is an image taken from a project at the University of Zurich in Switzerland, where researchers have used a supercomputer to create a simulation of our entire known universe, made up of some 25 billion virtual galaxies. Stretching a billion light-years across, the map shows how dark matter is distributed in space, with the yellow clumps showing dark matter and the white areas being the cosmic voids, the lowest-density regions in the universe.

The resulting catalog will be used to calibrate experiments on board the Euclid satellite, which is scheduled to launch in 2020. The mission is run by the Euclid Consortium, a collaboration of more than 1,000 scientists around the world, whose aim is to map out billions of galaxies in the cosmos and decipher the nature of dark energy.

“The techniques we have developed are unique: A fast multipole method for gravity that works on thousands of nodes in parallel, and the use of graphics cards to accelerate the calculation,” Romain Teyssier, a professor of computational astrophysics, told Digital Trends. “Because of these two innovations, our code has achieved the largest simulation so far of the entire observable universe, featuring 2 trillion digital particles. We used the Piz Daint supercomputer of the Swiss national supercomputing center, which is made of more than 5,000 GPUs. The unique combination of our innovative code and this world-leading machine is really making this result exciting.”

Running the simulation took 80 hours. To put that number in perspective, the Piz Daint supercomputer, a Cray XC30 system, can compute in one day more than a modern laptop could compute in 900 years. In theory, that means generating this simulation on a run-of-the-mill notebook would take roughly three millennia.
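For anyone who wants to check that back-of-the-envelope figure, here is a minimal sketch of the arithmetic in Python. It uses only the two numbers quoted above (80 hours of run time, and 900 laptop-years of work per supercomputer day); the function and variable names are made up for illustration, and the result is a rough scaling, not a benchmark.

```python
# Figures quoted in the article; everything else here is illustrative.
RUN_HOURS = 80                # run time on Piz Daint, in hours
LAPTOP_YEARS_PER_DAY = 900    # laptop-years of work done per Piz Daint day


def laptop_years(run_hours: float, years_per_day: float = LAPTOP_YEARS_PER_DAY) -> float:
    """Scale a supercomputer run time (hours) to equivalent laptop time (years)."""
    return run_hours * years_per_day / 24  # 24 hours in one supercomputer day


estimate = laptop_years(RUN_HOURS)
print(f"About {estimate:.0f} laptop-years, or {estimate / 1000:.0f} millennia")
```

The estimate assumes the laptop could hold the simulation in memory at all, which it could not, so the real answer is closer to "never" than to three millennia.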

The work is described in a paper published in the journal Computational Astrophysics and Cosmology.

Up next? Things could potentially get even more exciting. “Our goal is to prepare the next simulations, and include more physics like massive neutrinos, or simulate a universe with different laws of gravity,” Teyssier said.