There’s something broken about much of social media. While the number of users increases at an astronomical rate, and there’s no denying its power when it comes to disseminating messages and information, social media doesn’t necessarily embody the best aspects of socializing. In fact, for something with billions of users, it can be downright insular at times. This, in turn, can lead to the polarized world that Eli Pariser first identified in his book The Filter Bubble.

But there could be a fix for this fundamental issue. Researchers from Denmark and Finland have created a new algorithm they believe gives a glimpse of how social media could, and maybe should, work. It’s designed to pop filter bubbles and expose people to more diverse content.

“Typically, the aim of a social media platform would be to maximize user engagement,” Esther Galbrun, a senior researcher in data science at the University of Eastern Finland’s School of Computing, told Digital Trends. “That is [to] maximize the time people spend on the platform, since that might be turned into revenue, through advertisement for instance. Besides promoting incendiary content or clickbait, strategies to keep the users engaged might include providing them with more content they are likely to enjoy. That means personalizing the content by building profiles of the users, keeping track of what they have enjoyed and shown interest for, and trying to provide them with more of the same. This [can] also involve encouraging interactions with people who share similar points of view.”

The filter bubble problem

Personalization is, in most cases, good. The barista who knows your coffee order, the music algorithm that plays you songs it knows you either like or are likely to like, the news feed that shows you only the stories that appeal to you — all of it flatters the individual. It saves time in a world in which we somehow seem to have less time than ever despite hundreds of time-saving devices.

However, when it comes to this kind of personalization on social networks, the problem is that ideas too often remain unchallenged. We surround ourselves with people who think like we do, and this leads to enormous blind spots in our world view. That’s an issue because, as most people can agree, social media has moved beyond being somewhere we go for dank memes and our friends’ baby pictures. At its best, social media promises (even if it doesn’t always deliver) a way to help citizens stay informed and participate in the public sphere. It’s therefore essential that we’re exposed to information that doesn’t simply align with our own personal mythologies. Social media should be a marketplace of ideas, not a groupthink monolith.

This new research, carried out by Galbrun together with Antonis Matakos, Cigdem Aslay, and Aristides Gionis, seeks to create an algorithm that maximizes the diversity of exposure in a social network. An abstract describing the work notes:

“We formulate the problem in the context of information propagation, as a task of recommending a small number of news articles to selected users. We take into account content and user leanings, and the probability of further sharing an article. Our model allows us to capture the balance between maximizing the spread of information and ensuring the exposure of users to diverse viewpoints.”

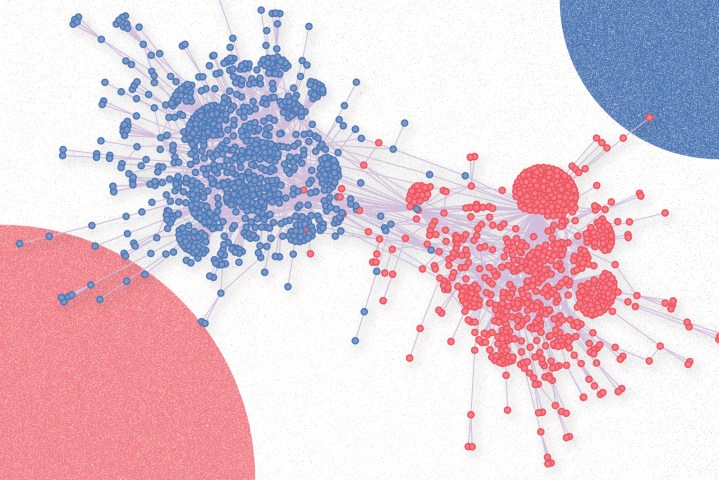

The system works by assigning numerical scores to both users and social media content, based on where they sit on the ideological spectrum: for instance, whether they are left- or right-wing. The algorithm then looks for the users best placed to spread this content further, thereby increasing the diversity of what users across the network are exposed to.
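To make the idea of "numerical values" concrete, here is a minimal sketch of how leaning scores could be turned into a per-user diversity-of-exposure score. The scale (-1.0 for far left to +1.0 for far right), the `User` class, and the metric itself (mean distance between a user’s leaning and the leanings of the articles they saw) are all hypothetical illustrations, not the definitions used in the paper.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical leaning scale: -1.0 = far left, 0.0 = centre, +1.0 = far right.


@dataclass
class User:
    name: str
    leaning: float
    # Leanings of the articles this user has been exposed to.
    exposures: List[float] = field(default_factory=list)


def diversity_score(user: User) -> float:
    """Assumed metric: mean ideological distance between a user and the
    articles they saw. 0.0 means a perfect echo chamber; 2.0 is the maximum
    on a [-1, 1] scale."""
    if not user.exposures:
        return 0.0
    return sum(abs(user.leaning - a) for a in user.exposures) / len(user.exposures)


def network_diversity(users: List[User]) -> float:
    """Total diversity of exposure across the network: the quantity a
    diversity-aware recommender would try to raise."""
    return sum(diversity_score(u) for u in users)


if __name__ == "__main__":
    alice = User("alice", -0.8, [-0.9, -0.7, 0.6])  # mostly left-leaning feed
    bob = User("bob", 0.5)                           # no exposures yet
    print(round(diversity_score(alice), 3))          # 0.533
    print(round(network_diversity([alice, bob]), 3))
```

A feed made up entirely of articles matching the user’s own leaning scores 0.0; a single opposing article is what lifts Alice’s score here.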

As the researchers note in their paper, the challenge can “be cast as maximizing a monotone and submodular function subject to a matroid constraint on the allocation of articles to users. It is a challenging generalization of the influence-maximization problem. Yet, we are able to devise scalable approximation algorithms by introducing a novel extension to the notion of random reverse-reachable sets. We experimentally demonstrate the efficiency and scalability of our algorithm on several real-world datasets.”
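The quote describes a constrained submodular maximization problem. As an illustrative sketch only, and not the authors’ algorithm, the code below shows the classic greedy approach on a simplified version. The objective is a plain coverage function over hypothetical (user, viewpoint) exposures, which is monotone and submodular, and the matroid is assumed to be a partition matroid that caps how many articles each seed user receives. The reach sets are taken as given; in practice they would have to be estimated from a propagation model, which is where sampling techniques such as reverse-reachable sets come in. For monotone submodular objectives under a matroid constraint, this simple greedy rule is known to reach at least half of the optimal value.

```python
from collections import Counter
from typing import Dict, FrozenSet, Hashable, List, Set, Tuple

# A candidate decision: recommend article `article_id` to seed user `user`.
Assignment = Tuple[str, str]


def greedy_partition_matroid(
    covers: Dict[Assignment, FrozenSet[Hashable]],
    capacity_per_user: int = 1,
) -> List[Assignment]:
    """Greedily maximise a coverage function f(S) = |union of covers[e] for e in S|
    subject to a partition matroid: each seed user may receive at most
    `capacity_per_user` articles."""
    chosen: List[Assignment] = []
    covered: Set[Hashable] = set()
    used: Counter = Counter()  # articles already assigned to each user
    remaining = set(covers)
    while remaining:
        best, best_gain = None, 0
        for cand in sorted(remaining):  # sorted for deterministic tie-breaking
            user = cand[0]
            if used[user] >= capacity_per_user:
                continue  # adding cand would violate the matroid constraint
            gain = len(covers[cand] - covered)  # marginal gain of cand
            if gain > best_gain:
                best, best_gain = cand, gain
        if best is None:
            break  # no feasible candidate adds anything new
        chosen.append(best)
        covered |= covers[best]
        used[best[0]] += 1
        remaining.discard(best)
    return chosen


if __name__ == "__main__":
    # Hypothetical reach sets: which (user, viewpoint) exposures each assignment
    # would create once the article has propagated. "L"/"R" = left/right story.
    covers = {
        ("ann", "left_story"): frozenset({("ann", "L"), ("bo", "L"), ("cy", "L")}),
        ("ann", "right_story"): frozenset({("ann", "R"), ("bo", "R")}),
        ("dee", "left_story"): frozenset({("dee", "L")}),
        ("dee", "right_story"): frozenset({("dee", "R"), ("bo", "R"), ("cy", "R")}),
    }
    print(greedy_partition_matroid(covers))
    # [('ann', 'left_story'), ('dee', 'right_story')]
```

Because coverage counts distinct (user, viewpoint) pairs, a user who already saw the left-leaning story contributes nothing more from a second left-leaning copy, so the greedy rule naturally favors assignments that bring the other side of the argument to the same people.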

Rethinking social media

One big challenge with anything like this, of course, is that it threatens to make social media less compelling. Social media companies probably aren’t trying to make fake news and filter bubbles a thing for political reasons; they’re just looking for content that makes people stay longer and click more. As a result, meddling with this formula — even if it’s for the public good — could make people spend less time on these websites and apps. Good for people, perhaps. Bad for companies.

“This is one of the main challenges,” Galbrun said. “To diversify the content users of the network are exposed to, without bombarding each user with exogenous recommendation, we still need to rely on users sharing the content, so it can propagate further across the network. If we recommend to a user content that presents an opinion diametrically opposed to his, his exposure will be diversified, but he is very unlikely to share the content with his contacts — and that will not help diversify the exposure of other users in the network. So we need to find a balance between how different the opinion represented is from that of the user, and how much this difference reduces the chances that it will be spread further.”
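The balance Galbrun describes can be illustrated with a toy calculation. In this hypothetical sketch, the chance that a user reshares an article is assumed to decay exponentially with the ideological distance between user and article, and the user’s contacts are assumed to share the user’s leaning. The function names and parameters (`base`, `tau`, `n_contacts`) are illustrative inventions, not taken from the paper.

```python
import math
from typing import Sequence


def share_probability(distance: float, base: float = 0.6, tau: float = 0.5) -> float:
    """Hypothetical model: the chance a user reshares an article decays
    exponentially with the ideological distance between user and article."""
    return base * math.exp(-distance / tau)


def expected_diversity(user_leaning: float, article_leaning: float, n_contacts: int) -> float:
    """User's own exposure (the distance d) plus the expected exposure of
    contacts if the user reshares. Assumes (homophily) that contacts share
    the user's leaning, so each of them also sees the article at distance d."""
    d = abs(user_leaning - article_leaning)
    return d + share_probability(d) * n_contacts * d


def best_article(user_leaning: float, candidates: Sequence[float], n_contacts: int) -> float:
    """Pick the candidate article leaning with the highest expected diversity."""
    return max(candidates, key=lambda a: expected_diversity(user_leaning, a, n_contacts))


if __name__ == "__main__":
    candidates = [-0.8, -0.4, 0.0, 0.4, 0.8]
    # A well-connected user: a moderate article wins because it still gets shared.
    print(best_article(-0.8, candidates, n_contacts=20))  # 0.0
    # An isolated user: resharing is irrelevant, so the most distant article wins.
    print(best_article(-0.8, candidates, n_contacts=0))   # 0.8
```

Under these assumptions, the diametrically opposed article is the best pick only when nobody downstream is listening; for a well-connected user, a middle-ground article produces more total exposure because it is far more likely to be passed on.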

This paper was published in IEEE Transactions on Knowledge and Data Engineering, a journal of the Institute of Electrical and Electronics Engineers (IEEE), and was recently highlighted by IEEE Spectrum. It describes just one way social media networks could change how they operate to encourage this kind of diversity. There is, of course, no guarantee that this will happen, and it’s worth noting that this is independent research, not work carried out by any of today’s social media giants.

Nonetheless, it offers an important illustration of one of the big problems that need to be solved. Too often, social media is viewed as one of the great ills of modern society. There is some truth to that, but it also has the potential to be a great benefit to civilization, opening people up to new perspectives and experiences beyond their own. The question is how to reconfigure it so that it lives up to those ideals.