The 3D glasses correct a pair of offset, polarized images to create a sense of depth. Home3D, however, targets what’s called an automultiscopic display. The display actually shows three (or more) images, presented with a slight offset so that the picture looks different when the screen is viewed from different angles. That allows the brain to see a coherent image with depth information, creating a 3D effect without the glasses.

Automultiscopic displays have already allowed for glasses-free TVs. While the display type recently introduced is promising, it requires creating a 3D video using 30 different cameras. The unusual format creates a sort of chicken-and-egg problem: Producers aren’t going to create this 30-camera content when no consumers actually own an automultiscopic TV, but no consumers are actually going to buy an automultiscopic TV when there’s no content being produced for it. Companies like Toshiba already have glasses-free displays on the market, but they’ve failed to grow in popularity because of limited content.

The latest MIT/CSAIL research creates a way to convert existing, glasses-required 3D content into the proper format for automultiscopic displays (the study builds on similar work from last year that focused on glasses-free viewing at movie theaters). Because the conversion requires only software and draws on content that already exists, automultiscopic TVs could actually wind up in homes. The same chicken-and-egg concern is why YouTube, when it announced a new 180-degree format, launched a way to view the content and, with manufacturing partners, a way to create the content at the same time.

“Automultiscopic displays aren’t as popular as they could be because they can’t actually play the stereo formats that traditional 3D movies use in theaters,” said Petr Kellnhofer, lead author on the Home3D research. “By converting existing 3D movies to this format, our system helps open the door to bringing 3D TVs into people’s homes.”

The MIT/CSAIL study isn’t the first to try computerized conversions to the new format, but it overcomes some of the limitations of current methods. Phase-based rendering is a quick and accurate method for converting to the video type, but it can’t handle every type of 3D image. A technique called depth image-based rendering can handle those types of images, but it is low-resolution and loses finer details, such as those in transparent objects or motion blur.
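To make the depth image-based rendering idea more concrete, here is a minimal sketch of the general technique, not the researchers’ code. It shifts each pixel of a single scanline sideways in proportion to its disparity to approximate a new viewing angle. The function name `synthesize_view`, the `view_offset` parameter, the direction of the shift, and the simple hole-filling rule are all assumptions made for illustration.

```python
def synthesize_view(row, disparity, view_offset):
    """Forward-warp one scanline to a new horizontal viewpoint.

    row:         pixel values of the reference view
    disparity:   per-pixel disparity in pixels (assumed: larger = closer)
    view_offset: assumed position of the new view relative to the
                 reference (0.0 = the reference view itself)
    """
    width = len(row)
    out = [None] * width
    out_disp = [float("-inf")] * width

    # Move every pixel by its scaled disparity; when two pixels land on
    # the same spot, keep the one with the larger disparity (the closer one).
    for x in range(width):
        target = x + int(round(view_offset * disparity[x]))
        if 0 <= target < width and disparity[x] > out_disp[target]:
            out[target] = row[x]
            out_disp[target] = disparity[x]

    # Fill holes left behind by moved pixels from the nearest filled
    # neighbor on the left.
    last = None
    for x in range(width):
        if out[x] is None:
            out[x] = last
        else:
            last = out[x]

    # Any holes at the start of the row are filled from the right.
    nxt = None
    for x in range(width - 1, -1, -1):
        if out[x] is None:
            out[x] = nxt
        else:
            nxt = out[x]
    return out


# Hypothetical example: one "near" pixel in the middle of a flat background.
row = [10, 20, 30, 40, 50]
disparity = [0, 0, 2, 0, 0]
views = [synthesize_view(row, disparity, k / 2) for k in range(-2, 3)]
```

Calling the function with several offsets, as in the last line, produces the set of slightly different views that a multi-view display needs. The crude hole filling also hints at why this family of methods can lose fine detail around object edges.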

The new software uses AI to mix the two conversion methods, creating a high-resolution 3D result without the glasses and without the limitations of earlier methods. The software can convert videos in real time on a graphics processing unit (GPU), which means that the program could run on existing gaming devices like an Xbox or PlayStation, effectively pairing existing videos with existing hardware through software conversion. Future media players or smart automultiscopic TVs could integrate a GPU to enable the conversion as well, the research group says.

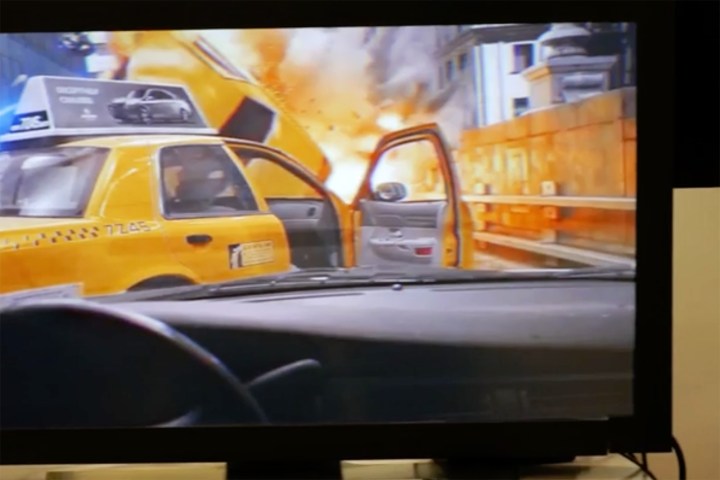

When Home3D-converted movies including The Avengers and Big Buck Bunny were presented to a study group, 60 percent of participants said the quality was better than existing 3D videos.

As we mentioned in our DT10 series on the future of the television, glasses-free 3D TVs will be a future technology manufacturers tap into, and this research backs up this trend. While the new method brings glasses-free home TVs closer to reality, the researchers say the current program does create some ghosting, or offset images, an issue the team plans to continue to refine through software.
