New research from the Massachusetts Institute of Technology could help robots get a literal grip.

Two research groups from the Computer Science and Artificial Intelligence Lab (CSAIL) today shared research to improve grippers in soft robotics, which, unlike traditional robotics, uses flexible materials.

The technology could lead to robotic hands that can not only grasp a variety of items but also sense what each object is and even twist and bend it.

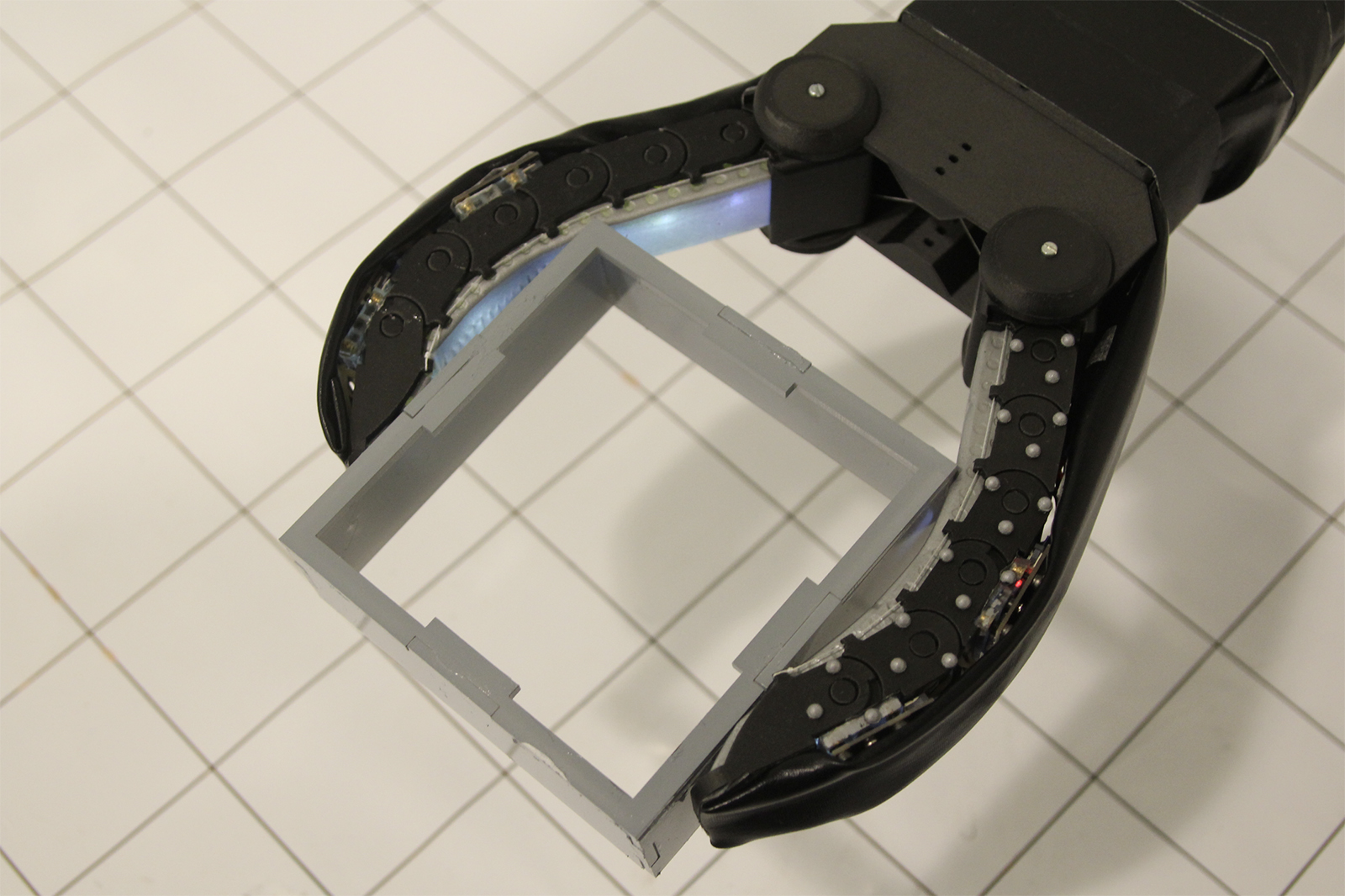

Using a method called GelFlex, one research group embedded cameras in the “fingertips” of a two-fingered gripper, allowing the robotic arm to better grasp different shapes, from large items like cans to thin objects like a CD case. The researchers found that GelFlex was more than 96% accurate at recognizing differently shaped objects to grasp.

The group made the fingers from transparent silicone and embedded cameras with fisheye lenses in them. With the fingers painted in reflective ink and lit by LEDs, the embedded camera could see how the silicone on the opposite finger responded to the feel of the object. A specially trained artificial intelligence model then used data on how the finger bent to estimate the shape and size of the object.

“Our soft finger can provide high accuracy on proprioception and accurately predict grasped objects, and also withstand considerable impact without harming the interacted environment and itself,” said Yu She, the lead author of the paper. “By constraining soft fingers with a flexible exoskeleton, and performing high-resolution sensing with embedded cameras, we open up a large range of capabilities for soft manipulators.”

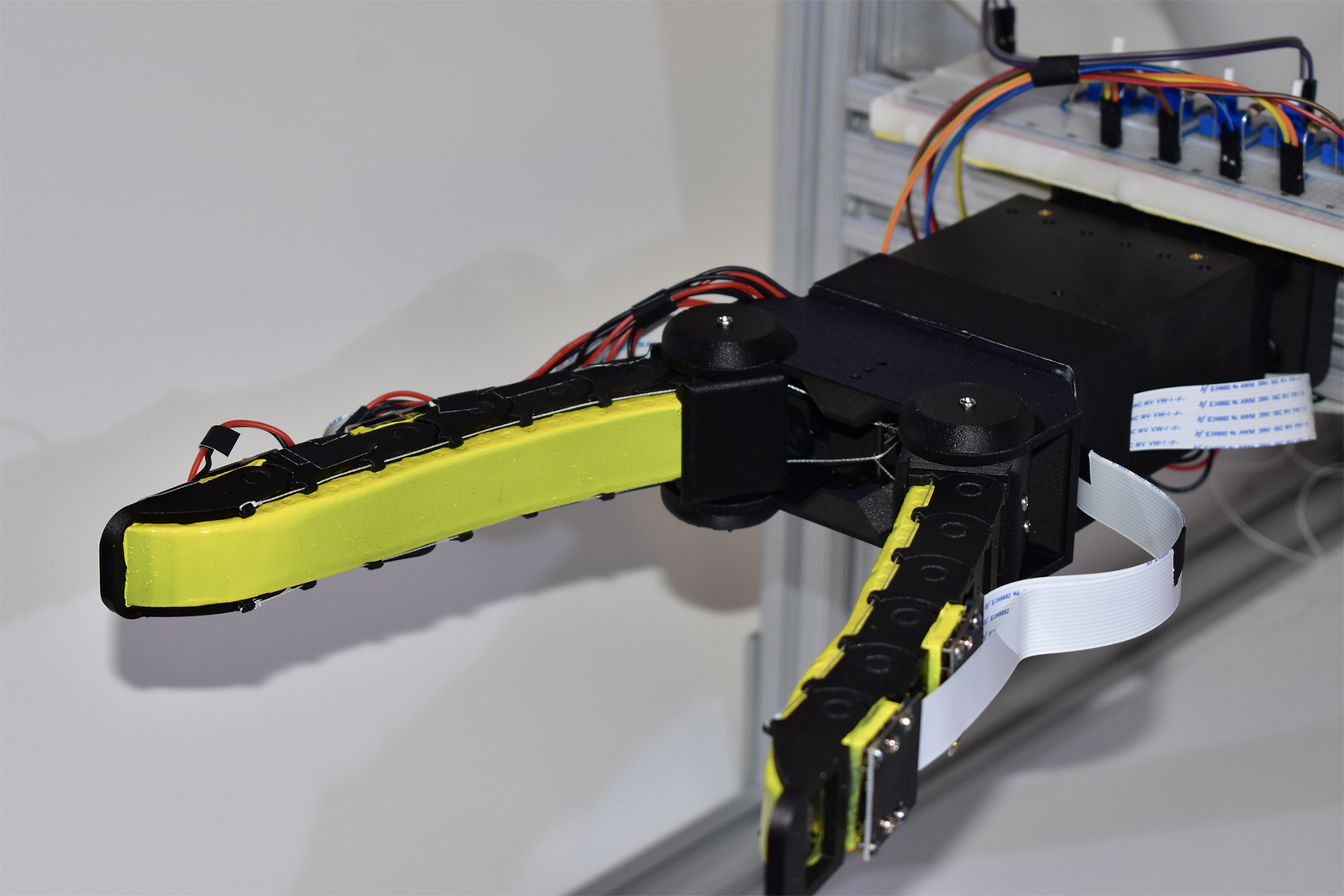

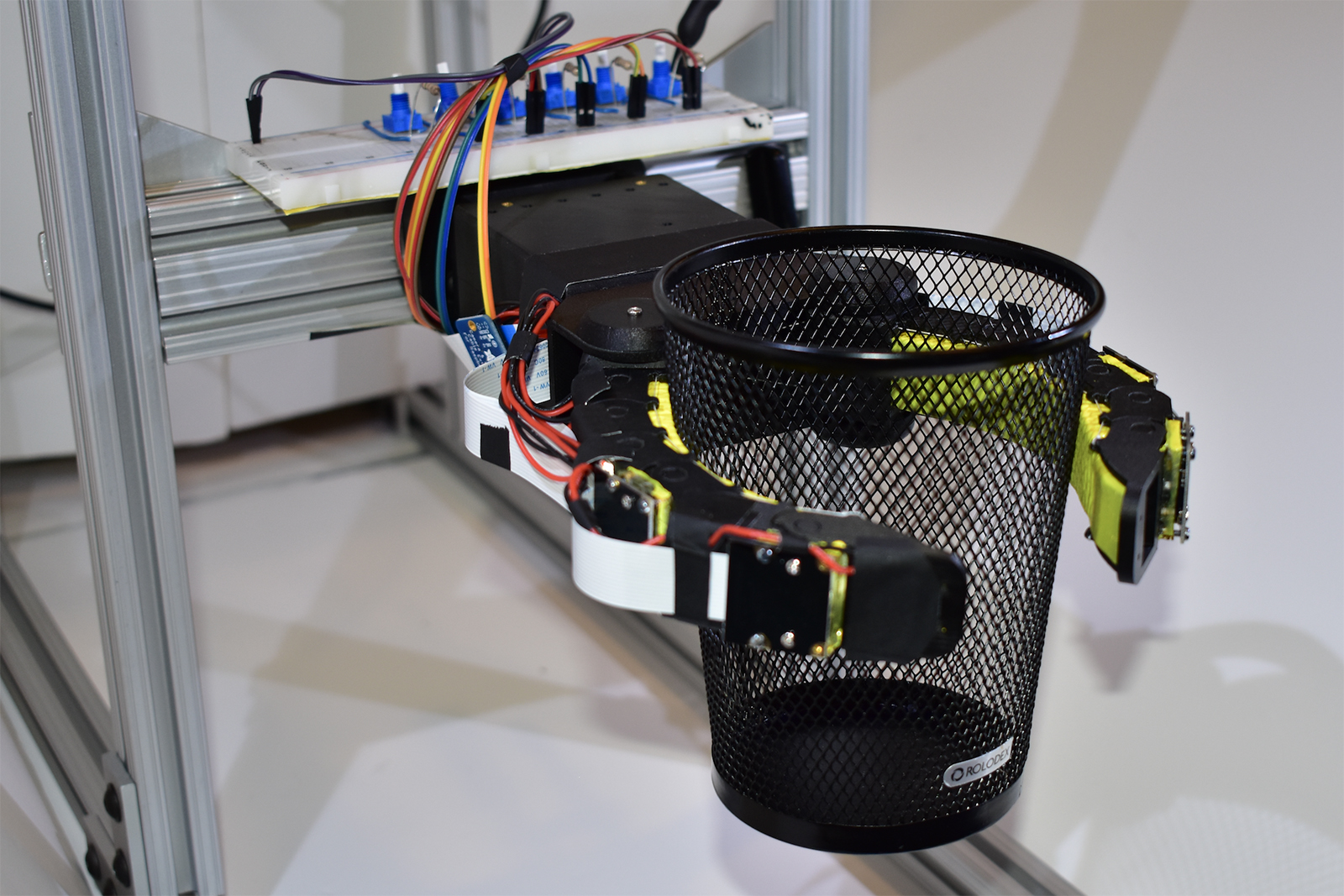

Another research team developed a system of sensors that, through touch, allowed a soft robotic hand to correctly identify what it was picking up more than 90% of the time. The project adds tactile sensors to a cone-shaped gripper developed in earlier research at MIT and Harvard. The team is led by postdoc Josie Hughes and by professor and CSAIL director Daniela Rus.

The sensors are miniature latex “balloons,” each connected to a pressure transducer. Changes in the pressure on those balloons are fed to an algorithm that determines how best to grasp the object.

Adding the sensors to a robotic hand that’s both soft and strong allows the gripper to pick up small, delicate objects, like a potato chip, and also classify what each item is. By understanding what the object is, the gripper can grasp it correctly, rather than turning the potato chip to crumbs.
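The article does not describe the team's algorithm, but the general idea of turning pressure readings into an object label can be sketched in a few lines of Python. The example below is only an illustration under stated assumptions: it uses a simple nearest-match rule, and the sensor count, the pressure values, and the names `REFERENCE_PROFILES` and `classify` are all hypothetical rather than taken from the research.

```python
import math

# Hypothetical reference pressure changes (arbitrary units), one value per
# balloon sensor. These numbers are invented for illustration only.
REFERENCE_PROFILES = {
    "potato chip": [0.2, 0.1, 0.2, 0.1],
    "can": [1.5, 1.4, 1.6, 1.5],
    "CD case": [0.8, 0.1, 0.8, 0.1],
}


def classify(reading, profiles=REFERENCE_PROFILES):
    """Return the label whose reference profile is closest to the reading.

    Closeness is plain Euclidean distance, an assumed stand-in for
    whatever model the researchers actually used.
    """
    best_label, best_distance = None, math.inf
    for label, profile in profiles.items():
        distance = math.dist(reading, profile)
        if distance < best_distance:
            best_label, best_distance = label, distance
    return best_label


# A light, even touch is closest to the hypothetical potato chip profile.
print(classify([0.25, 0.1, 0.15, 0.1]))
```

In a sketch like this, the predicted label could then be used to choose a grip strength, which is the step that would keep a chip from being crushed.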

While having a robot feed you potato chips sounds cool, the research is likely destined for manufacturing. Further research, however, could potentially lead to robotic “skin” that can sense the feel of different objects.