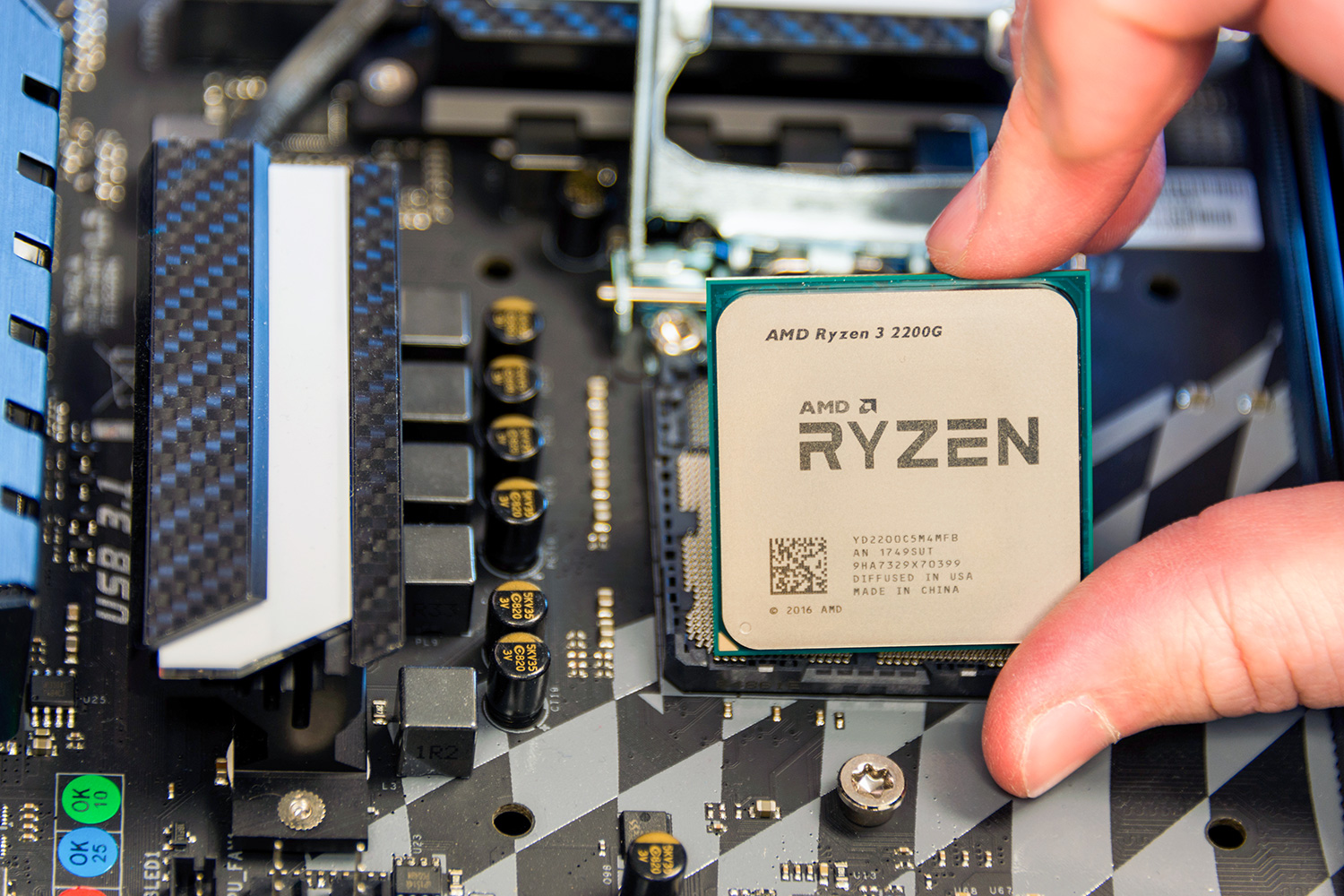

But what is an APU, and is it really any different from what Intel offers with its processors? The core concept of an APU is blessedly simple: An APU combines the functionality of a CPU and a GPU, two pieces of hardware found in basically any computer.

Two halves make a whole

The central processing unit, or CPU, carries out all the instructions for computer programs. Every action you take on your computer, whether running a game or simply typing a letter, must go through the CPU.

The graphics processing unit, or GPU, is a piece of hardware that allows a computer to render images quickly. Creating 3D images involves demanding work such as rendering polygons, mapping textures, and solving the complicated equations behind animation. Offloading these tasks to dedicated hardware makes 3D images much quicker to produce.

By integrating the CPU and GPU into a single unit, the APU improves the transfer rate between the two, allowing them to share the burden of processing tasks. This also lets the APU complete tasks while using less power than a standard CPU and graphics card setup, and it ensures a certain base level of graphical capability, which makes the overall user experience better. Most importantly, it means you don’t have to purchase a separate graphics card, which drastically lowers the overall price of your PC.

None of this is really any different from what Intel does with its CPUs, though, even if AMD likes to call its chips that feature both types of core something a little different. Most of Intel’s recent architectures, including its seventh and eighth generations, combine CPU and GPU functionality on a single die. Essentially, most modern processors you purchase these days will be APUs, even if they don’t bear the name. That doesn’t, however, mean they’re all created equal.

But are they worth it?

Although Intel chip buyers will almost always get onboard graphics whether they want it or not, AMD buyers have a choice. Do they buy a stand-alone AMD CPU, preferably from the latest generation of Ryzen processors, or opt for an APU, like the new Ryzen with Vega chips? If you’re a gamer, even on a modest budget, you’re much better off pairing one of the best CPUs with a dedicated graphics card. Although that option is more expensive, the performance offered by an independent processor and a stand-alone video card is much better.

APUs have their place, with non-gamers and those on extremely low budgets perhaps benefiting most from not needing a dedicated graphics card. However, we’ve yet to see an APU that
