“Music is the carrier for our ‘medicine,’” Han Dirkx, the CEO of AlphaBeats, cryptically told Digital Trends in an interview over Google Meet during CES 2021. AlphaBeats’ “medicine” is an algorithm derived from research into neurofeedback that’s injected into the music we listen to and affects our brain waves in such a way that our brain is trained to relax more effectively.

Think this sounds like hokum? Research into the efficacy of neurofeedback is ongoing, but what makes AlphaBeats’ method so interesting is that it’s entirely nonintrusive, meaning it doesn’t require you to put on an uncomfortable headset or get plugged into a special device.

“Some people do yoga or meditation,” Dirkx explained, “but in our case you just lay down and listen to music.”

Listen up

Although simply listening to music can have a relaxing effect on its own, Dirkx says AlphaBeats’ algorithm promises to make music three times more effective at calming your mind than just sitting down and listening without any algorithmic tweaks. How does it work? By varying how your music sounds to reward or punish your brain.

“We tweak the frequencies of the music, just like using a graphic equalizer. In essence, we just tweak the high tones and the low tones, so you don’t get frustrated because the quality of the music decreases,” Dirkx told me, adding that the effect is only subtle.

AlphaBeats’ algorithm is based on understanding alpha and beta wave activity in the brain. Beta waves indicate alertness and stress, while alpha waves indicate a relaxed state. When alpha wave activity increases, AlphaBeats “increases” the quality of the music to reward your brain. When stressful beta wave activity increases, AlphaBeats tweaks the music in a negative way.
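To make the reward-and-punish idea concrete, here is a minimal Python sketch of how a relaxation reading could be turned into an equalizer adjustment. It is not AlphaBeats’ actual algorithm, which the company has not published. The function name `eq_gain_db`, the `alpha_power` and `beta_power` inputs, and the 3 dB maximum cut are all assumptions made for illustration.

```python
def eq_gain_db(alpha_power: float, beta_power: float, max_cut_db: float = 3.0) -> float:
    """Return a gain in dB to apply to the bass and treble bands.

    Hypothetical mapping: the more alpha (relaxed) activity dominates,
    the closer the gain is to 0 dB (full-quality music, the "reward").
    The more beta (stressed) activity dominates, the closer the gain
    gets to -max_cut_db (thinner-sounding music, the "punishment").
    """
    total = alpha_power + beta_power
    if total <= 0:
        # No usable signal: leave the music untouched.
        return 0.0
    relaxation = alpha_power / total  # 0.0 = all beta, 1.0 = all alpha
    return max_cut_db * (relaxation - 1.0)


if __name__ == "__main__":
    for alpha, beta in [(1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]:
        print(alpha, beta, eq_gain_db(alpha, beta))
```

Capping the cut at a few decibels mirrors Dirkx’s point that the change should stay subtle enough that listeners do not get frustrated with the sound.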

“Research shows if we reward your brain in that way, we are able to decrease stress levels,” Dirkx said. “It takes 10 minutes a day for four weeks, and people were three times more relaxed than they were when they started, compared to those not using the algorithm. It doesn’t matter what kind of music you listen to, what we do is multiply the effect on a personal level. You hardly hear the difference between the changes, a little less bass and treble, but your brain hears the difference.”

No special hardware needed

This would normally be the part where we show you a devilish-looking headset covered in electroencephalography (EEG) sensors — which are used to monitor brain activity — but there’s no need. Although AlphaBeats did explore this option, it has instead focused on the software side.

“When we started in 2019, we had the algorithm that uses biosignals to change the audio, and we also had a prototype headset with EEG sensors on it,” Dirkx recalled. “We had to make a decision, and looking at the wearable market, it was really too late to get into it, so we decided to focus on the algorithm and see how we can unwind the brain using that.”

The result is an algorithm that works on any phone and with any headphones. However, to ensure the app can understand how your body is reacting to the music, it looks at two other biometric data points. Dirkx explained more:

“We started with EEG, but we found out two other signals are also important to us. The first is breathing, and you can put the phone on your chest to measure breathing frequency. The second and most important is Heart Rate Variability (HRV), which is measured by most heart rate sensors on smartwatches and wearables, and it can be used to define your stress level. At the moment, we are developing our beta app with HRV input, and are working with a company where it is measured just by putting your finger over the camera lens on your phone.”
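Dirkx did not say which HRV measure the app relies on. One widely used metric is RMSSD, the root mean square of successive differences between heartbeats, and the sketch below shows how it could be computed from a list of beat-to-beat intervals. The function name `rmssd` and the sample interval values are illustrative assumptions, not anything taken from AlphaBeats.

```python
import math


def rmssd(rr_intervals_ms: list[float]) -> float:
    """Root mean square of successive differences between RR intervals.

    rr_intervals_ms holds the time between consecutive heartbeats in
    milliseconds. Higher RMSSD is generally associated with a more
    relaxed state, lower RMSSD with stress.
    """
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    # Differences between each interval and the one that follows it.
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


if __name__ == "__main__":
    # Made-up sample data for illustration only.
    print(rmssd([800, 810, 790, 800]))
```

Because the metric depends on differences between consecutive beats, it needs a continuous stream of readings over the session rather than a single summary value.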

Biometric signals provide crucial data, but Dirkx explained why it’s even more important for the app to know how you actually feel before and after using AlphaBeats.

“Because everyone deals and notices stress in different ways, we measure it not only through biosignals, but also using questions [adapted from the widely used Perceived Stress Scale (PSS) questionnaire] about how you feel before and after. This allows us to adjust the algorithm for you personally,” he said.

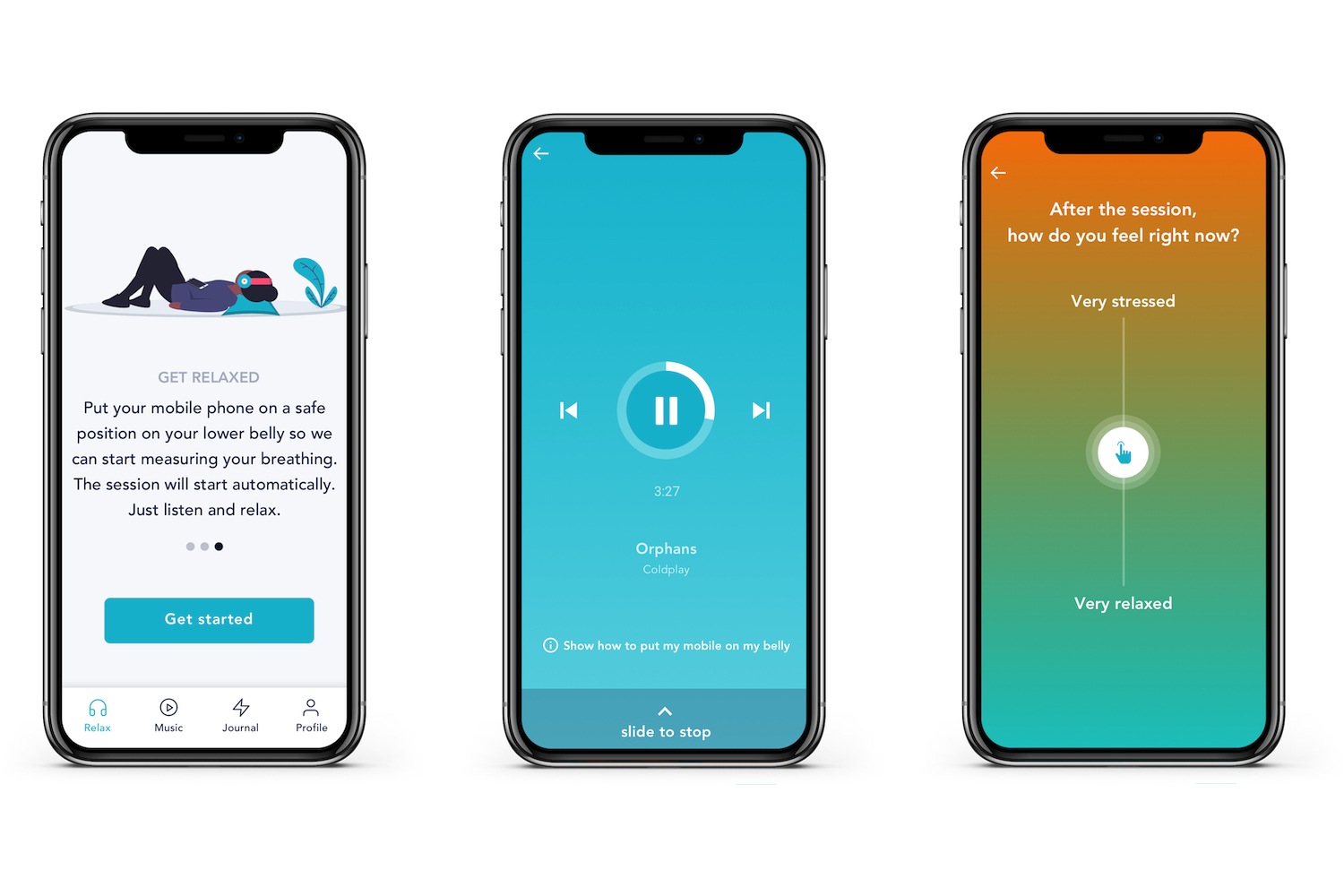

Dirkx said one of the biggest challenges behind adoption is getting people to change their behavior. So rather than asking the multiple official questions on the PSS test, the AlphaBeats app uses a slider system to establish the way you feel, so that using the app doesn’t become too time-consuming.

Software and hardware integration

Apart from getting people to use AlphaBeats on a regular basis, there are other challenges in making the app, from getting access to the required biometric data to manipulating the music files on your phone. Wondering why wearables like the Apple Watch, with its built-in heart rate sensor, haven’t been mentioned yet? AlphaBeats needs to team up with manufacturers, rather than just relying on integrating a Software Development Kit (SDK) into the app.

“The challenge is we need a 10-minute constant output from the heart rate sensors, and HealthKit only provides a single result,” Dirkx said.

This means the company needs greater access to the hardware, and it’s a similar story with the music side. AlphaBeats is beta testing with Spotify and Deezer streaming services at the moment, but the SDKs pose a problem for the commercial release.

“Adjustment of the equalizer is usually locked out of streaming service SDKs,” Dirkx revealed, adding that the startup needs “cooperation with all these companies” to make the app more usable for more people.

How can you get involved?

The company is exploring several ways to get its algorithm into people’s ears. It’s not looking at hardware, but is considering partnerships with wellness companies that have an existing user base and are focused on destressing and relaxation. Outside of the beta test, which you can apply to join now, most people will likely first use AlphaBeats’ technology through a partner app.

The beta app, which will be ready during the second quarter of this year, is important for AlphaBeats to gather data. It will be iOS-only at the start, with Android to follow later, and the company wants to have collected data from around 10,000 people by the end of the year. After this, Dirkx said he hopes for a commercial release in some form by the end of the year or early in 2022.

With more data and a greater understanding of the effect of music on your brain, Dirkx said that in the future, the app may be able to see which songs relax you the most, and then potentially make recommendations on what to listen to and when, based on your previous reactions. The algorithm may also be tuned to work in the opposite way and increase focus.

This is all in the future, though. For now, when you strip away all the tech and science, at its heart, AlphaBeats really does sound as simple as putting on your headphones and pressing play. Despite this simplicity, getting any benefit will not be a quick fix.

“Like meditation and yoga,” Dirkx pointed out about the algorithm, “AlphaBeats requires an effort.”

If the result is to feel more relaxed just by listening to music you like, then 10 minutes a day doesn’t sound like too much of a chore.