Siri is one of the best virtual assistants out there, and it’s available on almost every Apple platform, including iOS and macOS. However, Siri is only as useful as its operator, which is why it pays to learn the tricks for getting the most out of it.

- Activating Siri

- Commands for calling, voicemail, messages, and email

- Commands to control your device’s settings

- Commands for currency conversions, calculations, and timers

- Commands for setting appointments, alarms, lists, and reminders

- Navigating and travel commands

- Search commands

- Entertainment, sports, and music commands

- Using Siri with your HomePod and Intercom

- Using Siri with HomeKit

- Using third-party apps

- Fun stuff

The commands you use may get the job done, but they may not be the most efficient way to use Siri. We teach you about commands that can help you with everything from doing calculations to managing your device’s settings.

Activating Siri

If you can’t talk to Siri, the feature may be turned off. To turn Siri on, go to Settings > Siri & Search and toggle on Press Home for Siri (labeled Press Side Button for Siri on iPhones without a Home button). Once it’s enabled, there are a few ways to activate Siri.

- Press and hold the Home or Side button.

- Go to Settings > Siri & Search and enable Listen for “Hey Siri”. Once done, you can activate Siri by saying “Hey Siri” out loud.

- If you’re using AirPods (1st or 2nd generation), double-tap either AirPod to activate Siri (this can also be customized in settings). On AirPods Pro, press and hold the stem instead, provided that gesture is assigned to Siri in settings. If you have the AirPods Max, hold down the Digital Crown until you hear a chime.

- If you have an Apple Watch, you can activate Siri by pressing and holding the Digital Crown.

- If you’re using a Mac, click on the Siri icon in the menu bar.

Commands for calling, voicemail, messages, and email

- “Call [name or number].”

- “FaceTime [name or number].”

- If you want to start a call on speakerphone, you can say “Call [name or number] on speaker.”

- “Check my voicemail” or “Check voicemails from [name].”

- “Check my messages” or “Check my email.”

- “Read my new messages” or “Read my new email.”

- “Send an email to [name].” Siri will then ask you to provide the subject and message. You can also say the entire command at once like this: “Send an email to [name], about [what you say here will be the subject], and say [what you say here will be the message].”

Commands to control your device’s settings

- “Turn on/off [Wi-Fi, Bluetooth, cellular data, Do Not Disturb, Night Shift, or Airplane Mode].”

- “Take a picture/selfie.”

- “Increase/decrease brightness.”

- When playing music, you can say, “Turn up/down the volume.”

- “Open [app or game].”

Commands for currency conversions, calculations, and timers

- “What is [say the amount and name of currency] in [say the name of the currency]?”

- You can also convert other measurements. For example: “What is 335 meters in feet?”

- “What is [number] plus/minus/divided by/multiplied by [number]?” or “What is the square root of [number]?”

- “Set a timer for [say the amount of time].”

- “Set an alarm for [time].”

- To calculate a tip, you can say “What is [percentage] of [number]?”

- “What is [say the name of the company]’s stock price?”

- “What is the weather like today?” or “What is the weather like in [say the country or city]?”

- “What is the time in [say the country or city]?”
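
If you want to double-check Siri’s math, the conversion and tip requests above boil down to simple arithmetic. Here is a minimal Python sketch. The function names, the 18 percent tip, and the $64 bill are made up for illustration, and this is not a description of how Siri computes its answers.

```python
# One foot is exactly 0.3048 meters by international definition.
METERS_PER_FOOT = 0.3048


def meters_to_feet(meters: float) -> float:
    """Convert a length in meters to feet."""
    return meters / METERS_PER_FOOT


def percent_of(percent: float, amount: float) -> float:
    """Return the given percentage of an amount, e.g. a tip on a bill."""
    return amount * percent / 100


# "What is 335 meters in feet?"
print(round(meters_to_feet(335), 2))  # 1099.08

# "What is 18 percent of 64?" (hypothetical tip on a hypothetical bill)
print(round(percent_of(18, 64), 2))  # 11.52
```

If Siri’s spoken answer is rounded differently, the underlying figure should still match.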

Commands for setting appointments, alarms, lists, and reminders

- “Check my meetings.”

- “Schedule a meeting with [name] at [day and time].”

- “Remind me to [say what to remind you about] at [say when you want Siri to remind you].” You can also add a location to your reminder. For example: “Remind me to call John when I get home.”

- If you need to know what day falls on a certain date, you can ask “What day is December 25?” Alternatively, you can say “How many days until December 25?”

- “Make a list called [name of list].”

- “Add items to the list [name of list].”

Navigating and travel commands

- “Where am I?” or “What’s my location?”

- “Show me the nearest [gas stations/type of restaurants/malls/etc.].”

- “How do I get to [destination] by [car/foot/bike]?”

- “Take me home.”

- “What are the traffic conditions?” or “What are the traffic conditions near [name location]?”

- “How long until we arrive at [destination]?”

- “Check flight status of [airline and flight number].”

- “Show me the bus route to [destination].”

- “Do I need an umbrella?”

Search commands

- “Open [name of app].”

- “Define [word].”

- “Search the App Store for [name of app or category of apps].”

- Ask any question and Siri will search the internet. For example: “How do you cook an omelet?”

- “Find notes/email about [say some keyword related to what you’re searching for].”

- “Find friends near me” or “Where is [name of friend]?”

- Find photos by saying, “Show me photos from [date]” or “Show me photos of [name]” (the latter works if you have assigned names in the People album).

- “Find/download the [name of artist] podcast.”

Entertainment, sports, and music commands

- “Show me the [sports team or game] scores.”

- “Play music,” or while listening to a song, you can tell Siri to “pause/stop/skip” or “play the next/previous song.”

- “Play [name of song].”

- “Like this song.”

- “What song is this?” or “What is this song?”

- “Play more like this.”

- “Play songs from [group or artist].”

- “Play music for cardio/studying/bedtime (and other activities or moods).”

- “Play top songs from [year].”

- “What movies are playing near me?” or “What are the movie showtimes for [name of movie/name of cinema]?”

- “Play a game” or “Games” or “Show me games” or “Show me driving/platformer/Mario games.”

Using Siri with your HomePod and Intercom

If you are playing music via an iPhone on your HomePod, then Siri’s music commands listed above will also be useful. The HomePod also accepts more precise playback commands, such as:

- “Turn the volume up 65%.” (Specific percentages work on the HomePod but may not work on other Bluetooth speakers)

The HomePod also makes it particularly easy to use the Intercom function, which broadcasts a message to every compatible speaker and iOS/watchOS device in your group, including AirPods. To use it, say “Hey Siri” followed by something like:

- “Intercom, Dinner is ready in 5 minutes.”

- “Ask everyone, Has anyone seen my new coat?”

- “Announce upstairs, Don’t forget to clean your room.”

- “Ask the kitchen, Do we have any bananas left?”

You can set up Intercom in the Home app and change its settings whenever you want, including which devices the messages are broadcast to. To get the most out of this feature, make sure every speaker is clearly named and assigned to the right room. If you receive an Intercom message, you can easily send a message back with a command like:

- “Reply, Your coat is in the laundry room.”

- “Reply to the kitchen, I’ll be down in a few minutes.”

Using Siri with HomeKit

If you have HomeKit-compatible smart devices, you can use many more Siri commands on your iPhone or iPad to operate those devices or change their settings. The Home app will also encourage you to link compatible smart devices together for more complex, automated smart home scenarios, which can make it easy to develop shortcuts for your daily life. Your options include commands like:

- “Set the temperature to 72 degrees.” (With compatible smart thermostats)

- “Turn the living room lights red.” (With compatible smart bulbs)

- “Is the garage door open?” (With compatible smart garage door add-ons)

- “Is the front door locked?/Unlock the front door.” (With compatible smart locks)

- “Dim the bedroom lights.”

- “Turn on the heater.”

- “I’m home.” (Initiates smart home scene.)

- “Good night.”

Using third-party apps

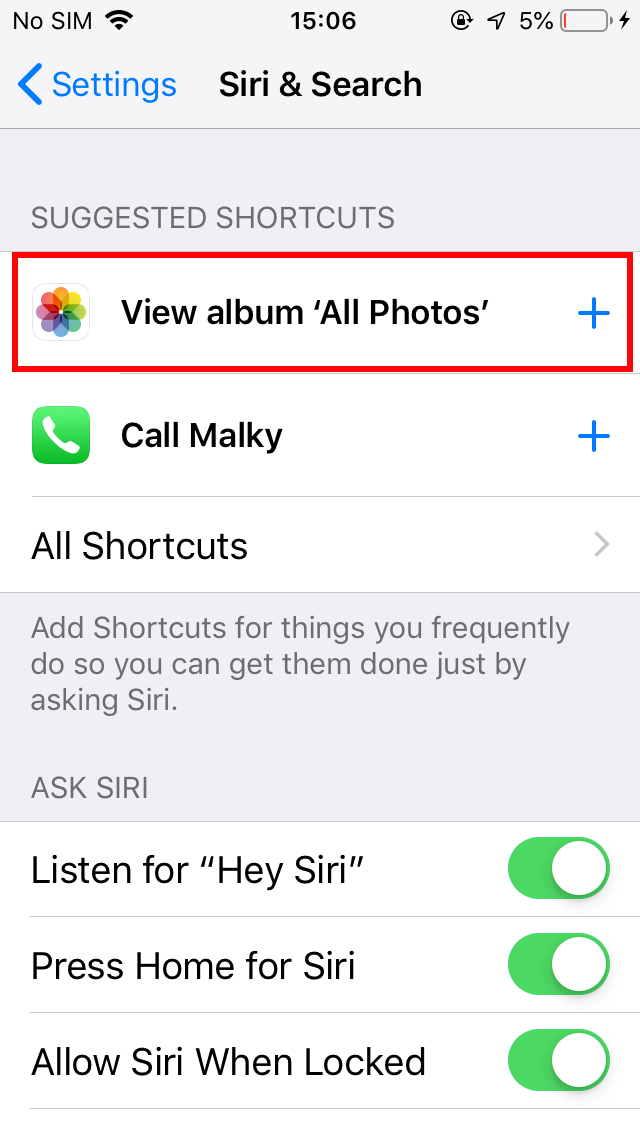

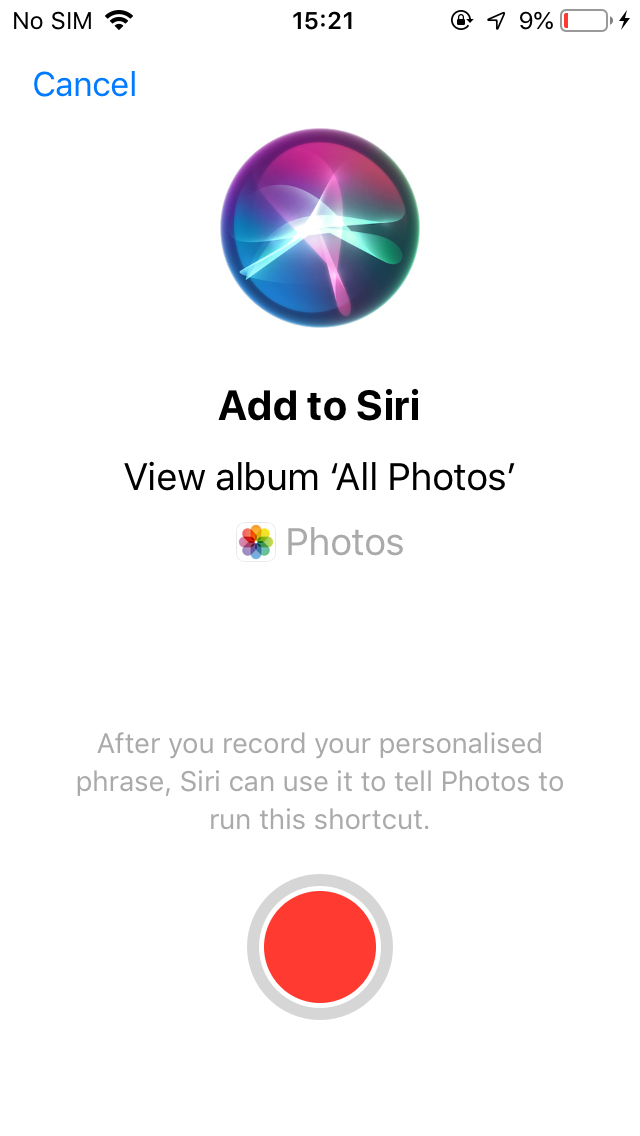

Apart from opening apps, Siri can also perform actions or jump deep into specific apps to bring you the information you want, when you want it. Siri monitors how you use apps and suggests Siri Shortcuts, a handy way to get things done using the apps you use most often on your phone. You’ll find these suggestions in Settings > Siri & Search. To add a shortcut, record a phrase that Siri will recognize and use to trigger it. For example, you might say “Order the usual” to trigger a particular food order in the Caviar app or “Take the scenic route” to avoid traffic in the Waze app. You can even create shortcuts that combine custom actions across multiple apps.

Apple has not published an official list of apps that support custom Siri Shortcuts. However, this Reddit post can serve as a reference list for supported apps, such as Amazon Kindle, Adobe Lightroom, and Pandora. It’s a pretty thorough list, and it also provides tips for optimizing Shortcuts with each listed app. For example, it explains how you can use specific commands as shortcuts, including the following:

- “Send a [name of messaging app] message to [name of contact].”

- “Get me a Lyft to [name of destination].”

- “Post a message to [Facebook/Twitter].”

- “Pay [name] $20 with [PayPal/Square].”

Fun stuff

Siri helps streamline your overall phone experience, often serving as your own digital concierge or assistant. It can pull up directions, set timers, call others, and much more. On top of being practical, Siri can also be fun to play around with. If you know about special features, like Siri’s “Magic 8 Ball” function, you can keep yourself entertained for a while. You can also expect Siri to surprise you with its responses to silly questions or commands like “flip a coin.”

There are many funny questions you can ask Siri, and Apple often adds new Siri replies or updates older ones. Your voice assistant may surprise you with responses that leave a smile on your face. And if you’ve never tried asking Siri a silly or existential question, start by asking what its favorite color is, what it dreams about, or whether it’ll go on a date with you.
