(in)Secure is a weekly column that dives into the rapidly escalating topic of cyber security.

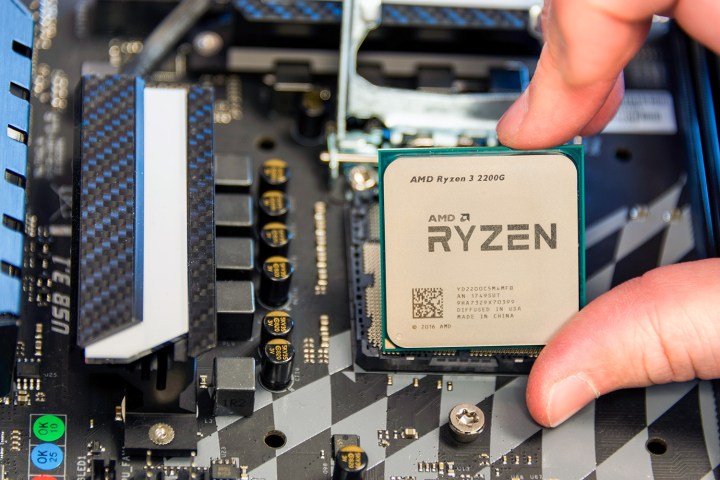

On Tuesday, March 13, security firm CTS Labs announced the discovery of 13 flaws in AMD’s Ryzen and Epyc processors. The flaws span four classes of vulnerabilities and include several serious problems, such as a hardware backdoor in Ryzen’s chipset and flaws that can completely compromise AMD’s Secure Processor, a chip that’s supposed to act as a “secure world” where sensitive tasks can be kept out of malware’s reach.


This revelation comes just months after the disclosure of the Meltdown and Spectre flaws, which affected chips from AMD, Intel, Qualcomm, and others. AMD, whose chips were vulnerable to some Spectre variants, came out of the fiasco relatively unscathed. Enthusiasts focused their anger on Intel. Though a handful of class-action lawsuits were filed against AMD, they’re nothing compared to the horde of lawyers set against Intel. Next to Intel, AMD seemed the smart, safe choice.

That made Tuesday’s announcement of flaws in AMD hardware even more explosive. Twitter storms erupted as security researchers and PC enthusiasts argued over the validity of the findings. Still, the information CTS Labs provided was independently verified by another security firm, Trail of Bits, founded in 2012. The severity of the issues can be argued, but they do exist, and they compromise what some PC users had come to view as the last safe harbor.

The wild west of disclosure

The content of CTS Labs’ research would’ve generated headlines in any event, but the reveal’s punch was amplified by its surprise. AMD was apparently given less than 24 hours to respond before CTS Labs went public, and CTS Labs has not released all technical details publicly, instead choosing to share them only with AMD, Microsoft, HP, Dell, and several other large companies.

Many security researchers cried foul. Most flaws are disclosed to companies well in advance, alongside a timeframe to respond. Meltdown and Spectre, for instance, were disclosed to Intel, AMD, and ARM on June 1, 2017, by Google’s Project Zero team. An initial 90-day window to fix the problems was later extended to 180 days, but it ended ahead of schedule when The Register published its initial story on Intel’s processor flaw. CTS Labs’ decision to give AMD almost no advance notice has fueled speculation that the firm had another, more malicious motive.

CTS Labs defended itself in a letter from Ilia Luk-Zilberman, the company’s CTO, published on the AMDflaws.com website. Luk-Zilberman takes issue with the concept of prior disclosure, saying “it’s up to the vendor if it wants to alert the customers that there is a problem.” That’s why you rarely hear of a security flaw until months after it has been uncovered.

Worse, says Luk-Zilberman, it forces a game of brinkmanship between the researcher and the company. The company might not respond. If that happens, the researcher faces a grim choice: keep quiet and hope no one else finds the flaw, or go public with the details of a flaw that has no available patch. Cooperation is the goal, but the stakes for both researcher and company encourage defensiveness. The question of what’s proper, professional, and ethical often collapses into petty tribalism.

Where’s the bottom?

There is no industry standard for disclosing a flaw, and in its absence, chaos reigns. Even those who believe in disclosure don’t agree on details, such as how long a company should be given to respond. The lack of agreement means there’s no way to know when the next major flaw will be exposed, who it will come from, or how it will be reported.


Cyber security is a mess, and it’s a mess that has taken its toll on each of us. While alarming, the new flaws in AMD processors, like Meltdown, Spectre, Heartbleed, and so many others before them, will soon be forgotten. They must be forgotten.

After all, what other choice do we have? Computers and smartphones have become mandatory for participation in modern society. Even those who don’t own them must use services that rely on them.

Every piece of software and hardware we use is, apparently, riddled with critical flaws. Even so, unless you decide to abandon society and build a cabin in the woods, you must use them.

Normally, I’d like this column to end on practical advice. Use strong passwords. Don’t click on links that promise free iPads. That sort of thing. Such advice remains true, but it feels like strapping on a life vest as a ship sinks in frigid arctic waters. Sure. The life vest is a good idea. You’re safer with it than without — but it’s not enough to save you anymore.
