An API is essentially a bridge between a service and an application. Here, the API relies on the Google Cloud Machine Learning platform for computation and stores annotated videos on Google Cloud Storage. Through this bridge, an application built on Google's new API gains access to these capabilities and can give end users a better way to search through videos.

“You can now search every moment of every video file in your catalog and find every occurrence as well as its significance,” Google states. “It helps you identify key nouns entities of your video, and when they occur within the video. Separate signal from noise, by retrieving relevant information at the video, shot or per frame.”

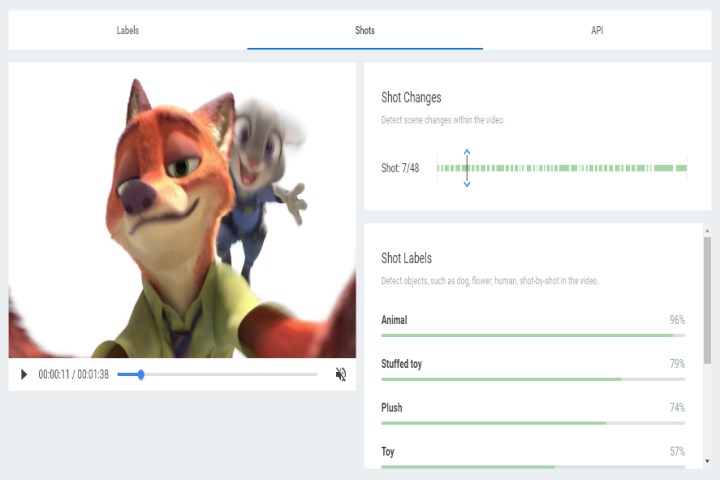

In a demo, users can search for animals in an MP4 video file lasting just over a minute and a half. The labels generated by Cloud Video Intelligence, each with a confidence score, are Animal (99 percent), Wildlife (94 percent), Zoo (91 percent), Terrestrial Animal (54 percent), Nature (51 percent), Tourism (47 percent), and Tourist Destination (43 percent). The sample video, presented by Disney's CGI-animated movie Zootopia, focuses on the Los Angeles Zoo.
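The percentages above are confidence scores attached to each label. As a minimal sketch, assuming the labels arrive as simple (description, confidence) pairs rather than the API's actual response objects, an application might keep only the labels that clear a chosen threshold before indexing them. The `filter_labels` helper and the default threshold of 0.5 are hypothetical.

```python
from typing import List, Tuple

# Label/confidence pairs from the demo described above.
# The (description, confidence) tuple shape is an assumption for illustration,
# not the API's real response format.
DEMO_LABELS: List[Tuple[str, float]] = [
    ("Animal", 0.99),
    ("Wildlife", 0.94),
    ("Zoo", 0.91),
    ("Terrestrial Animal", 0.54),
    ("Nature", 0.51),
    ("Tourism", 0.47),
    ("Tourist Destination", 0.43),
]


def filter_labels(labels: List[Tuple[str, float]], threshold: float = 0.5) -> List[str]:
    """Return label names whose confidence meets the (hypothetical) threshold,
    ordered from most to least confident."""
    kept = [(name, conf) for name, conf in labels if conf >= threshold]
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return [name for name, _ in kept]


if __name__ == "__main__":
    print(filter_labels(DEMO_LABELS))
    # ['Animal', 'Wildlife', 'Zoo', 'Terrestrial Animal', 'Nature']
    print(filter_labels(DEMO_LABELS, threshold=0.9))
    # ['Animal', 'Wildlife', 'Zoo']
```

Raising the threshold trades recall for precision: at 0.9 only the three most certain labels survive, while a low threshold keeps looser associations such as Tourism.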

What's really neat about the new API, though, is how it detects scene changes in a video. In the same clip, Cloud Video Intelligence detects 48 scene changes and labels objects in real time as the scenes change. For instance, in one scene that shows only Nick the fox, the API generates seven labels, while in another scene focusing on the zoo's sign, it generates only two, again all in real time.

Google has created a tool that lets users search through a video catalog just as they would search text documents. According to the company, this will help businesses separate signal from noise. It can also "detect features of a signal providing only relevant entities at video, shot or frame level."
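Searching video the way one searches text documents is, at its core, an inverted-index lookup: each label points back to the videos and shots where it was detected. The sketch below is illustrative only. The `Occurrence` record, the `LabelIndex` class, the file names, and the timestamps are all hypothetical and are not part of Google's API.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Occurrence:
    """One detection of a label: which video, which shot, and when (hypothetical schema)."""
    video: str
    shot: int
    start_sec: float
    end_sec: float
    confidence: float


class LabelIndex:
    """Inverted index mapping a lowercase label to every place it occurs."""

    def __init__(self) -> None:
        self._index: Dict[str, List[Occurrence]] = defaultdict(list)

    def add(self, label: str, occurrence: Occurrence) -> None:
        self._index[label.lower()].append(occurrence)

    def search(self, label: str, min_confidence: float = 0.0) -> List[Occurrence]:
        """Shot-level view: occurrences of `label`, most confident first."""
        # .get() avoids inserting empty entries for labels never seen.
        hits = [o for o in self._index.get(label.lower(), [])
                if o.confidence >= min_confidence]
        return sorted(hits, key=lambda o: o.confidence, reverse=True)

    def videos_with(self, label: str) -> List[str]:
        """Video-level view: distinct video names containing `label`, sorted."""
        return sorted({o.video for o in self.search(label)})


if __name__ == "__main__":
    index = LabelIndex()
    # Hypothetical detections, made up for illustration.
    index.add("Fox", Occurrence("zootopia.mp4", 3, 12.0, 15.5, 0.88))
    index.add("Fox", Occurrence("wildlife.mp4", 7, 40.0, 44.0, 0.72))
    index.add("Sign", Occurrence("zootopia.mp4", 5, 20.0, 22.0, 0.95))

    print([(o.video, o.shot) for o in index.search("fox")])
    # [('zootopia.mp4', 3), ('wildlife.mp4', 7)]
    print(index.videos_with("Fox"))
    # ['wildlife.mp4', 'zootopia.mp4']
```

Keeping the shot number and time range on each occurrence is what allows the same index to answer both video-level ("which files contain a fox?") and shot-level ("where in the file does it appear?") queries.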

“Google has a long history working with the largest media companies in the world, and we help them find value from unstructured data like video,” said Fei-Fei Li, Chief Scientist of Google Cloud AI and Machine Learning. “This API is for large media organizations and consumer technology companies, who want to build their media catalogs or find easy ways to manage crowd-sourced content.”

The new API is currently in private beta and will also be offered to Google partners such as Cantemo, which will use the API to connect its video management software to the Google Cloud Machine Learning platform.