Desktop computing has long been considered the gold standard for gaming, with the “PC Master Race” a cornerstone meme of the industry. However, the new consoles have not only closed the performance gap in some extraordinary ways, but they now have features that make PC gaming feel antiquated by comparison.

As someone who has had a complicated history with playing games on desktop, I’m here to tell you: The Xbox Series X and PlayStation 5 are worth considerably more of your time and money, and there’s no need to buy a PC if your only interest is playing video games.

The new consoles achieve the best aspects of the PC

There are a few reasons that you might want a PC to play video games, and the consoles achieve nearly all of them.

One of the simplest is that both new consoles support mouse and keyboard in a large swath of their games, though advancements like Sony’s DualSense controller make that input feel more boring than ever.

Another primary rationale is that PC games reach a level of graphical fidelity that consoles could only dream of. For decades this was the case, with new consoles already looking dated to pixel peepers the moment they launched.

That’s not the case anymore. Consoles have slowly been playing catch-up, and with the Xbox Series X and PS5 they’ve reached such near graphical parity with PCs that the average person couldn’t tell the difference. Both new systems make 4K the standard and are often able to hit that resolution at 60 frames per second.

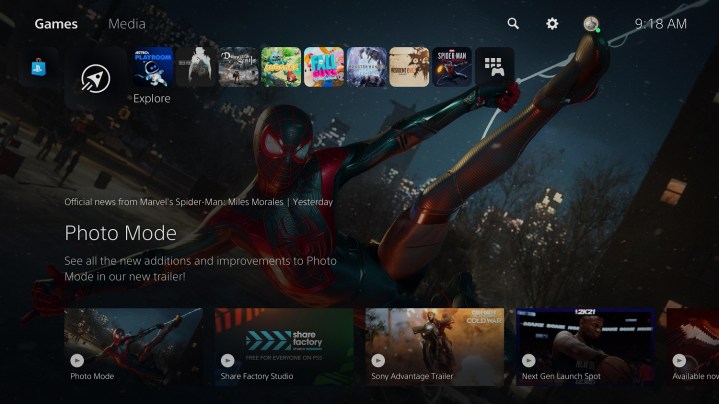

Consoles even offer graphical customization options like PCs do. Spider-Man: Miles Morales lets the player toggle between a performance mode that targets frame rate and a fidelity mode that favors visual quality. The former runs Miles Morales at 4K60, while the latter drops the frame rate to 30 fps but adds the incredible visual tweak of ray tracing, which injects true-to-life lighting and reflections into every frame.

On the PS4 Pro, I rarely played games in their performance modes; I often found the drop in resolution too steep for the gain in frame rate. In Miles Morales, the resolution is identical in both modes. In fidelity mode, there are times my jaw drops when I catch neon reflections in pools of water, while the higher-fps performance mode gives the motion the fluidity Spider-Man deserves.

Other games push this graphical prowess even further. Call of Duty: Black Ops Cold War runs at a minimum of 4K60 with ray tracing turned on, and with it off, it can reach 120 fps, something last generation’s mid-cycle refresh couldn’t manage even at 1080p.

Demon’s Souls uses an upscaler to let its performance mode run at “fauxK” and 60 fps. Upscaling technology has gotten so good that I can only tell the difference between it and native 4K by actively hunting for it in the frame and going, “Well, I guess that rock is slightly fuzzier.”

And that’s the thing about PC gaming: Its future is less about hardware improvements than it is about software. It’s becoming increasingly clear that technology like Nvidia’s DLSS, which uses machine learning to create “fake” resolutions that look as good as, if not better than, their native counterparts, is how video games are going to reach 8K, and eventually 16K. Technologies like these are going to allow the new consoles to do things that were previously impossible.

Consoles don’t fail in the same way PCs do

That brings us to another aspect of PC gaming: hardware customization. I’ve owned a couple of PC rigs over the years and have frequently found myself sliding back into the comfort of console gaming. One reason people keep giving me for sticking with a desktop is the ability to upgrade it over time, but that argument rings hollow now.

Nvidia’s lowest-end RTX 30-series card at the time of writing, the GeForce RTX 3070, costs the same as the Xbox Series X and PlayStation 5, and it’s just the graphics card. Upgrading one PC part often requires changing other parts of the rig, too: perhaps a new CPU to unlock the GPU’s full power, or a beefier power supply to run the whole thing.

It makes less sense than ever to see upgradability as an advantage. Even if we see another mid-cycle refresh in a few years and I feel compelled to buy a PS5 Pro, I will likely have spent less than half on it plus my initial

The other thing about PC gaming is that computers are just disastrously less efficient than consoles, especially the new systems, in almost every way: not only in power consumption, but in the moment-to-moment experience. Booting up my PS5 from a complete shutdown to being in a matchmaking lobby for Black Ops Cold War takes a matter of seconds.

Doing the same on my PC can take over a minute, and it doesn’t have the luxury of downloading updates in a rest mode, so heaven forbid another 30GB patch drops when my friends ask me to hop on immediately.

This stilted performance extends to the rest of the PC experience. Call of Duty: Modern Warfare frequently had performance issues where it would run at a nightmarish 20 to 30 fps, even at 1080p. On the latest Intel Core i7 CPU and an RTX 2080 Super GPU? It’s offensive.

This would send me scouring the internet for sporadic forum posts that seemed to match my issue, often with “solutions” that required me to dig shadily into some random file deep within the game and adjust a few digits like I’m Tank from The Matrix, only to provide no relief.

Two of the biggest releases of the year, Watch Dogs: Legion and Assassin’s Creed Valhalla, more often than not refused to even run on my machine, crashing unless I tweaked the graphics settings just right. This probably stems from Ubisoft Connect, the software formerly known as Uplay, being terribly optimized for PC, an issue that has never been resolved judging by the years and years of complaints I’ve found on forums.

The PC experience may offer some incredible highs, but it often hits lows that consoles, with their consistency and streamlined nature, rarely if ever stoop to.

I’m not saying don’t buy a PC

If using a PC sucks, why even bother? Well, computers offer an almost infinite number of uses beyond what consoles can do. Whereas my PS5 is there for me to play games and watch Netflix, I use my computer to write articles such as this one. I use it to edit videos. I use it to browse the web. I use it to livestream. And yes, on occasion, I use it to game.

If you make music, edit videos, or create digital art, there’s a huge incentive to own a powerful computer beyond gaming, and in these cases, buying a desktop or laptop not only makes sense but is a necessity.

If this is you, by all means, invest in a machine that helps you in these areas and that you can also use to play games. But if you’re simply looking to buy a high-powered rig for gaming, take it from someone who owns one as well as the new consoles: There’s no question that the consoles are the better investment 10 times out of 10, and I don’t see that changing anytime soon.
