Emerging Tech

How to buy Bitcoin

In this guide, we teach you how to buy Bitcoin for the first time, from finding the right wallets and exchanges to spending Bitcoin in a smart, efficient way.

Digital Trends’ Tech For Change CES 2023 Awards

Of all the world-changing technologies and innovative ideas on display at CES 2023, these impressed us the most.

Digital Trends’ Top Tech of CES 2023 Awards

From dual-screen laptops to smarter smart rings, the top tech of CES 2023 took existing technologies and made them practical enough to finally buy. Here are our favorites.

This AI cloned my voice using just three minutes of audio

My Own Voice certainly isn't the only voice-cloning tool out there, but what's impressive about it is that it only needs a tiny amount of input.

CES 2023: HD Hyundai’s Avikus is an A.I. for autonomous boat and marine navigation

Avikus, HD Hyundai's A.I. and autonomous navigation solution for commercial and recreational boats, is making an appearance at CES 2023. Learn more here.

Meet the game-changing pitching robot that can perfectly mimic any human throw

Trajekt's high-tech pitching machine can mimic the exact speed, spin, and trajectory of any human pitch. It's a literal game-changer for professional baseball.

Don’t buy the Meta Quest Pro for gaming. It’s a metaverse headset first

Meta has made it clear that the Quest Pro isn't a device that casual video game fans need to pick up.

The best hurricane trackers for Android and iOS in 2022

There are plenty of hurricane trackers that help you prepare for, monitor, and weather a major storm. We've assembled a list of the best apps to keep you safe.

Why AI will never rule the world

Despite fears that AI will one day take over the world, some experts contend that our existing AI systems are incapable of surpassing human intelligence.

The next big thing in science is already in your pocket

By borrowing computing power from millions of smartphones and home PCs, researchers can crack big scientific problems faster than ever before.

Meta wants to supercharge Wikipedia with an AI upgrade

Meta, the company formerly known as Facebook, has proposed a surprisingly simple way to make Wikipedia articles more transparent and trustworthy.

Optical illusions could help us build the next generation of AI

Computer vision algorithms can't see optical illusions as we humans can -- and scientists are using that quirk to their advantage.

This Segway electric scooter is $170 off in Best Buy’s Prime Day sale

Best Buy's Prime Day sale takes $170 off the Segway Ninebot KickScooter Max, bringing it down to $830 while Prime Day deals last.

Best Prime Day Drone Deals 2022: What to expect this week

Prime Day is close, so we're looking at what to expect from this week's Prime Day drone deals.

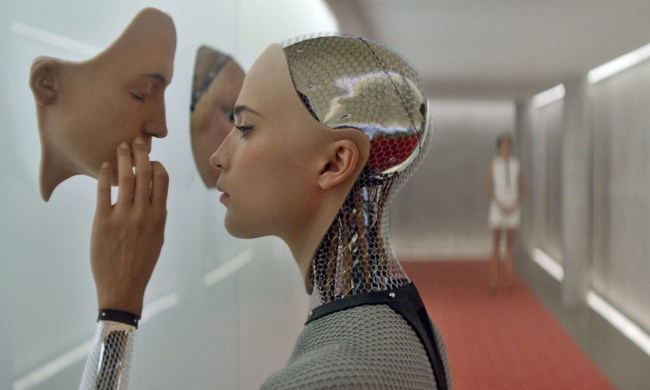

How will we know when an AI actually becomes sentient?

Many scientists and technologists believe AI will eventually become sentient. But how will we know when that happens? Currently, there's no good way to tell.

Dyson lifts lid on ‘top secret’ project

Electronics firm Dyson has revealed it's planning to take on hundreds of engineers for a major new robotics-based project.

Implantable payment chips: The future, or cyberpunk pipe dream?

Implantable payment chips are here -- enabling users to pay for things with a wave of their hand. Is this the wave of the future, or merely a flash in the pan?

Tiger steaks and lion burgers: Lab-grown exotic animal meat is on the way

Most cellular meat startups focus on growing familiar foods (like beef and chicken) in a lab, but Primeval Foods has its sights set on something more exotic.

Finishing touch: How scientists are giving robots humanlike tactile senses

Giving robots sight and hearing is fairly straightforward these days, but equipping them with a robust sense of touch is far more difficult.

Analog A.I.? It sounds crazy, but it might be the future

Digital transistors have been the backbone of computing for much of the modern era, but Mythic A.I. makes a compelling case that analog chips may be the future.

The most influential women in tech history

With their big ideas and clever inventions, these inspirational women left an indelible mark on the world and set us on a path to a brighter future.

10 female inventors who changed the world forever

The women on this list have risen above inequality, creating products and services that have truly changed the world.

Why the moon needs a space traffic control system

As interest in lunar exploration ramps up across the globe, scientists think we need a space traffic control system to avoid collisions and complications.