How to change margins in Google Docs

How to delete files on a Chromebook

Amazon deals: TVs, laptops, headphones and more

The best laptop brands for 2024

How to delete messages on your Mac

Why Llama 3 is changing everything in the world of AI

How to delete or hide chats in Microsoft Teams

How to customize mouse gestures on Mac

How to delete a Discord server on desktop and mobile

The Asus ROG Ally just got a game-changing update

How to easily connect any laptop to a TV

This laptop beats the MacBook Air in every way but one

This ultra-portable Lenovo 2-in-1 laptop is discounted from $649 to $199

How to connect a keyboard and mouse to the Steam Deck

This HP laptop is discounted from $519 to $279

How to choose the best RAM for your PC in 2024

The next big Windows 11 update has a new hardware requirement

Squarespace free trial: Build and host your website for free

The best web browsers for 2024

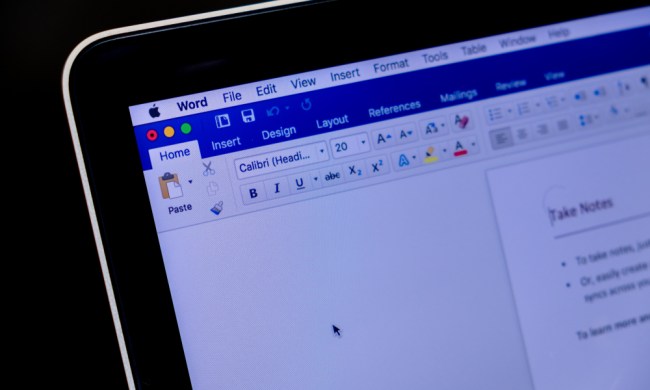

Microsoft Word free trial: Get a month of service for free

Best Squarespace deals: Save on domains, web builder, and more

Save $150 on a lifetime license for Microsoft Office for PC

You’re going to hate the latest change to Windows 11

Computing News

Laptops

Computing Reviews

Nvidia

You may find that Google Docs has a UI that is almost too clean. It can be difficult to find basic things you're used to, such as margin settings. Don't worry, though, you can change margins in Google Docs just like with any other word processor through a couple of different means.

If you have an exact margin measurement in mind, we recommend trying the Page Setup method first. On the other hand, if you want more visual and stylistic control (or want to control your indents, too) going directly to the ruler on the page is more advisable.

Using Page Setup

This is the easiest way to change margins in Google Docs: you type the measurements into the Page Setup dialog, and the document adjusts to match.

Step 1: Open your desired Google Docs file or create a new one.

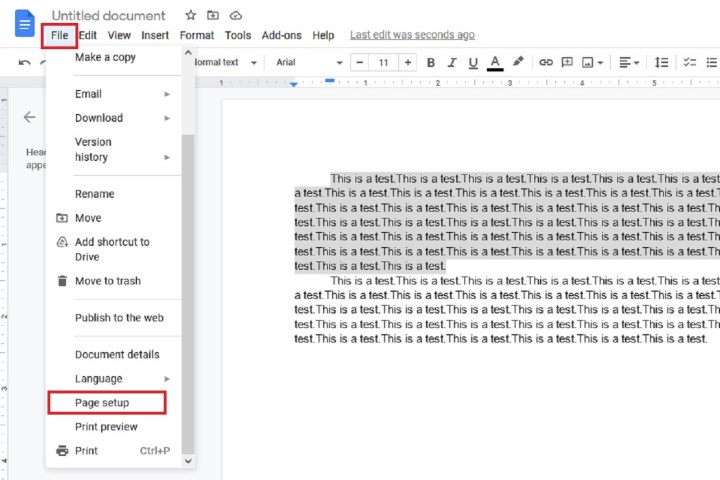

Step 2: If you only need to change the margins for a specific portion of text, select the paragraph or lines first, then click File in the top-left corner.

If you want to apply margin changes to the whole document, just click on File.

Step 3: From the File drop-down menu, select Page Setup. You may need to scroll down to see this option.

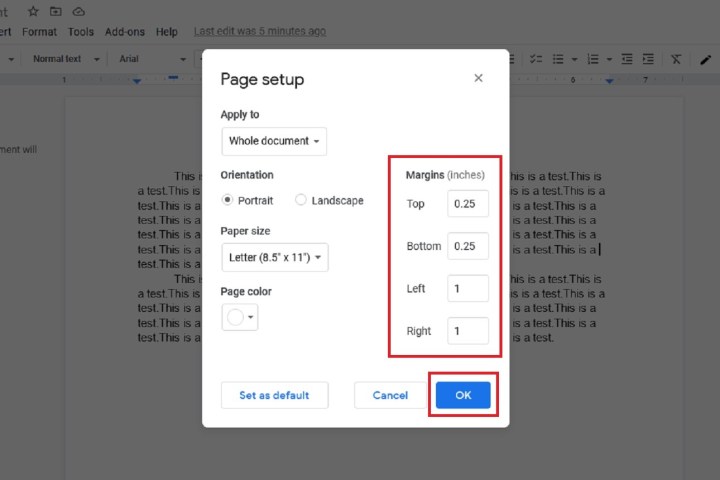

Step 4: The Page Setup dialog box will appear. Under the section labeled Margins are four text boxes where you can enter a measurement, in inches, for each side of the document: Top, Bottom, Left, and Right.

Step 5: After you've added your desired measurements, click OK to save your changes. The margins in your document should automatically adjust to your specified measurements.
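If you manage documents with scripts rather than by hand, the same four values can be expressed as a request to the Google Docs API. The snippet below is a minimal sketch, not an official recipe: it assumes the API's `updateDocumentStyle` request, which takes margins in points (72 points per inch), and the helper names `inches_to_points` and `build_margin_request` are our own.

```python
# Sketch: build a Google Docs API request body that sets all four page margins.
# Assumption: the Docs API "updateDocumentStyle" request with margins given in points.
# The helper names below are hypothetical, chosen for this example only.

POINTS_PER_INCH = 72  # typographic points in one inch


def inches_to_points(inches: float) -> float:
    """Convert a measurement in inches to points."""
    return inches * POINTS_PER_INCH


def build_margin_request(top: float, bottom: float, left: float, right: float) -> dict:
    """Return a request dict that sets the four margins (arguments are in inches)."""
    sides = {
        "marginTop": top,
        "marginBottom": bottom,
        "marginLeft": left,
        "marginRight": right,
    }
    document_style = {
        name: {"magnitude": inches_to_points(value), "unit": "PT"}
        for name, value in sides.items()
    }
    return {
        "updateDocumentStyle": {
            "documentStyle": document_style,
            # Field mask: only the listed properties are changed.
            "fields": ",".join(sides),
        }
    }


request = build_margin_request(top=1, bottom=1, left=1.25, right=1.25)
print(request)
```

To apply it, you would send the dict in the `requests` list of a `documents.batchUpdate` call. Authentication and the document ID are outside the scope of this sketch.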

Using the ruler

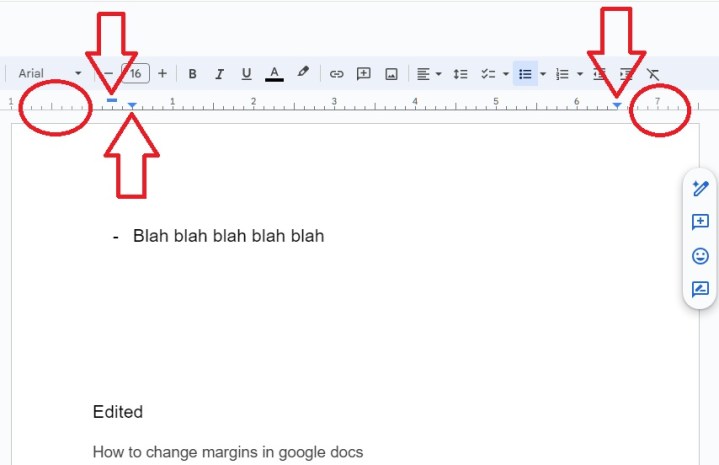

If you want a bit more control over each individual margin, you can use the ruler that surrounds your document. It has five key zones:

- The left-most zone, indicated by the circle, sets the left margin. Click and drag this to move your left margin.

- The blue bar sets the first line indent.

- The left-most blue arrow sets the left indent for the remaining lines of a paragraph, such as in bulleted lists. It often sits directly below the blue bar.

- The right-most arrow sets the right indent, which is where lines end and where text starts when you align right.

- If you click and drag the area to the right of that arrow, you can set the right margin in much the same way as you can set the left margin.

You can also click on the ruler between the blue arrows to set tab stops, allowing you more control over where the cursor lands when you press Tab.
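The blue markers on the ruler map to per-paragraph indent settings, which can also be set from a script. The following is a rough sketch under stated assumptions: it uses the Google Docs API's `updateParagraphStyle` request with the `indentStart`, `indentFirstLine`, and `indentEnd` properties, and the function name `build_indent_request` and the example index range are hypothetical.

```python
# Sketch: build a Google Docs API request body that sets paragraph indents.
# Assumption: the Docs API "updateParagraphStyle" request; values are in points.
# build_indent_request and the index range used below are hypothetical.

POINTS_PER_INCH = 72  # typographic points in one inch


def build_indent_request(start_index: int, end_index: int,
                         left_in: float, first_line_in: float,
                         right_in: float) -> dict:
    """Return a request dict that sets indents (in inches) for paragraphs in a range."""
    def points(inches: float) -> dict:
        return {"magnitude": inches * POINTS_PER_INCH, "unit": "PT"}

    return {
        "updateParagraphStyle": {
            # Range of document content whose paragraphs are affected.
            "range": {"startIndex": start_index, "endIndex": end_index},
            "paragraphStyle": {
                "indentStart": points(left_in),            # left blue arrow
                "indentFirstLine": points(first_line_in),  # blue bar
                "indentEnd": points(right_in),             # right blue arrow
            },
            # Field mask: only the listed properties are changed.
            "fields": "indentStart,indentFirstLine,indentEnd",
        }
    }


indent_request = build_indent_request(1, 50, left_in=0.5, first_line_in=0.25, right_in=0)
print(indent_request)
```

Dragging the markers on the ruler remains the quicker route for a single paragraph; a request like this is mainly useful when the same indents need to be applied across many documents.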